Apply getPerspectiveTransform and warpPerspective for a bird's-eye view (Python).

Hi, I'm following some tutorials to transform an image of a golf green with balls into a bird's-eye view, so that I can measure distances in a later step.

When I apply the transformation to an image of some text on paper it seems to work, but when I apply it to the outdoor image the results are not as expected.

Here is an example with the outdoor image's coordinates and dimensions:

    import cv2
    import numpy as np

    # Corners of the target rectangle in the original image (tl, tr, br, bl)
    tl = [689, 892]
    tr = [2518, 892]
    br = [2518, 2071]
    bl = [689, 2071]
    corner_points_array = np.float32([tl, tr, br, bl])

    # Original image dimensions
    width = 4128
    height = 2322

    # Destination corners: the full output frame, in the same order
    imgTl = [0, 0]
    imgTr = [width, 0]
    imgBr = [width, height]
    imgBl = [0, height]
    img_params = np.float32([imgTl, imgTr, imgBr, imgBl])

    # Compute the transformation matrix and warp the image
    # (`image` is assumed to be the input image, loaded earlier)
    matrix = cv2.getPerspectiveTransform(corner_points_array, img_params)
    img_transformed = cv2.warpPerspective(image, matrix, (width, height))

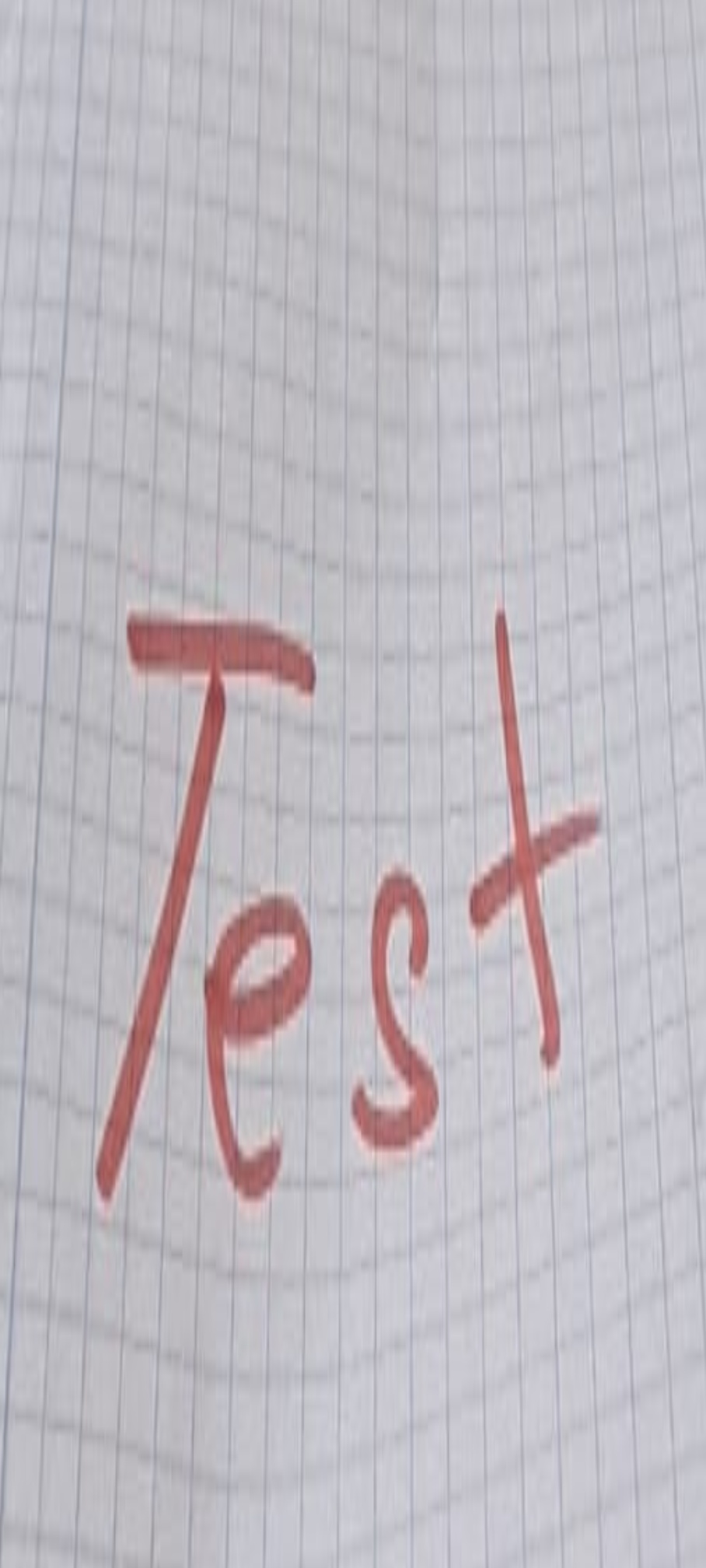

And here are my results for the golf image:

input

output

As you can see, I don't get a nice bird's-eye view.

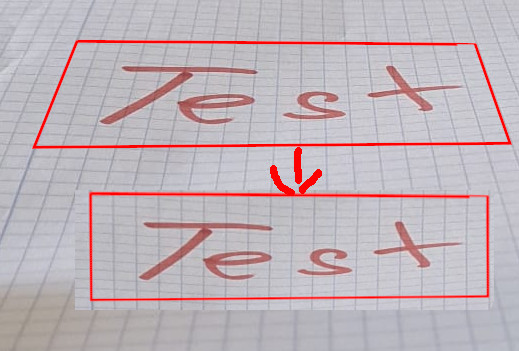

This is the result I get with the text on paper, using the same script with different coordinates and dimensions:

Input

output

So what am I doing wrong in the golf example? Any help would be greatly appreciated.