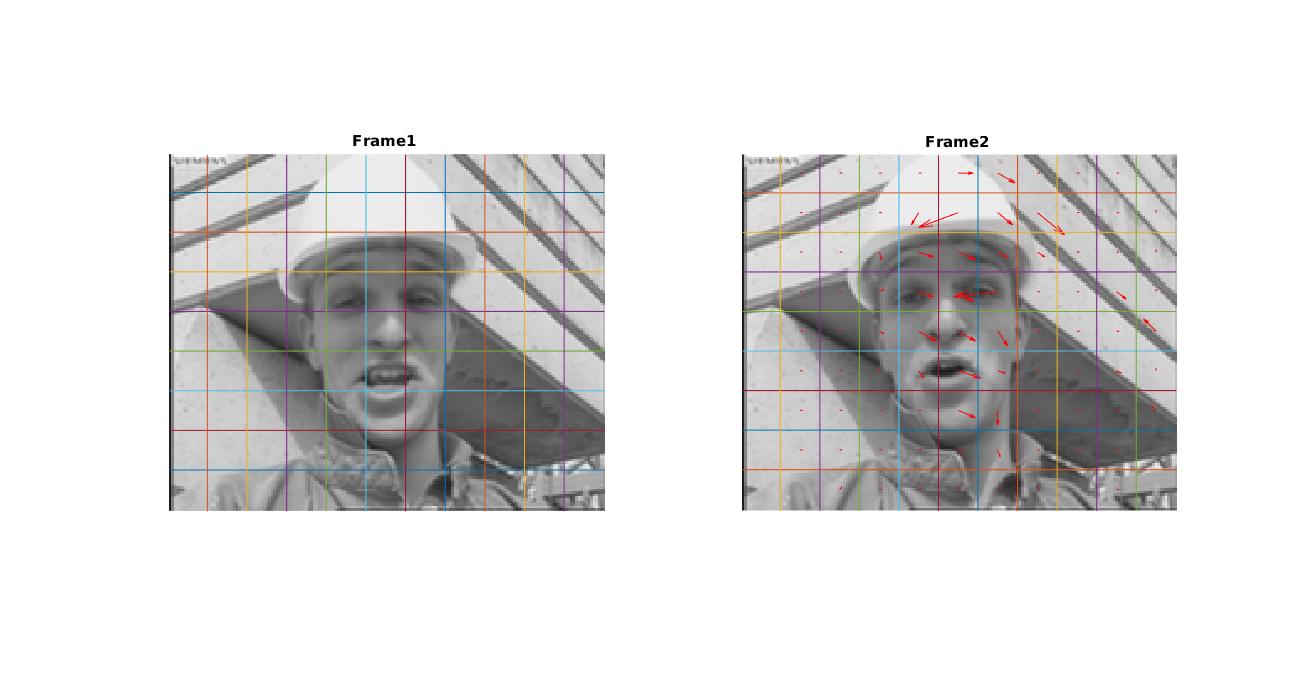

As I understand it, you want to mark the areas of a frame that contain motion.

You can use either a motion history approach or a contour-based approach for this.
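For comparison, the contour approach usually works like this: difference two grey frames, threshold the difference, then take the bounding box of each connected blob of changed pixels (with the OpenCV Java bindings this is typically `Core.absdiff`, `Imgproc.threshold`, `Imgproc.findContours` and `Imgproc.boundingRect`). The sketch below shows the same idea in plain Java so it runs without OpenCV. It is an illustration under stated assumptions, not OpenCV's contour algorithm: frames are assumed to be `int[][]` grey levels, a 4-connected flood fill stands in for `findContours`, and the class and method names are hypothetical.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;

class ContourMotionSketch {

    /** Axis-aligned box (hypothetical helper): top-left corner plus size in pixels. */
    static final class Box {
        final int x, y, width, height;

        Box(int x, int y, int width, int height) {
            this.x = x; this.y = y; this.width = width; this.height = height;
        }

        @Override
        public String toString() {
            return "Box(" + x + ", " + y + ", " + width + "x" + height + ")";
        }
    }

    /**
     * Marks pixels whose grey level changed by more than diffThreshold, groups them
     * into 4-connected blobs (flood fill standing in for findContours) and returns the
     * bounding box of every blob with at least minArea pixels.
     */
    static List<Box> motionBoxes(int[][] prev, int[][] curr, int diffThreshold, int minArea) {
        int h = curr.length, w = curr[0].length;
        boolean[][] moving = new boolean[h][w];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++)
                moving[y][x] = Math.abs(curr[y][x] - prev[y][x]) > diffThreshold;

        boolean[][] seen = new boolean[h][w];
        List<Box> boxes = new ArrayList<>();
        ArrayDeque<int[]> queue = new ArrayDeque<>();
        for (int y0 = 0; y0 < h; y0++) {
            for (int x0 = 0; x0 < w; x0++) {
                if (!moving[y0][x0] || seen[y0][x0]) continue;
                // Flood-fill one blob, tracking its extent and pixel count.
                int minX = x0, maxX = x0, minY = y0, maxY = y0, area = 0;
                seen[y0][x0] = true;
                queue.add(new int[] {x0, y0});
                while (!queue.isEmpty()) {
                    int[] p = queue.poll();
                    int px = p[0], py = p[1];
                    area++;
                    minX = Math.min(minX, px); maxX = Math.max(maxX, px);
                    minY = Math.min(minY, py); maxY = Math.max(maxY, py);
                    int[][] nbrs = {{px + 1, py}, {px - 1, py}, {px, py + 1}, {px, py - 1}};
                    for (int[] n : nbrs) {
                        int nx = n[0], ny = n[1];
                        if (nx >= 0 && nx < w && ny >= 0 && ny < h && moving[ny][nx] && !seen[ny][nx]) {
                            seen[ny][nx] = true;
                            queue.add(n);
                        }
                    }
                }
                // Reject tiny blobs (noise), like the size checks in the method below.
                if (area >= minArea) boxes.add(new Box(minX, minY, maxX - minX + 1, maxY - minY + 1));
            }
        }
        return boxes;
    }

    public static void main(String[] args) {
        int[][] prev = new int[6][8];                    // all-black 8x6 frame
        int[][] curr = new int[6][8];
        for (int y = 1; y <= 2; y++)
            for (int x = 2; x <= 4; x++) curr[y][x] = 200; // a 3x2 bright object appears
        curr[5][7] = 200;                                // a single noisy pixel
        System.out.println(motionBoxes(prev, curr, 30, 2)); // [Box(2, 1, 3x2)]
    }
}
```

The contour route only sees change between two frames; the motion history approach below also remembers *when* each pixel last moved, which is what lets it estimate a direction of motion per region.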

Here is a Java method that uses the motion history approach (adapted and optimized from OpenCV's `update_mhi` sample). Call it in a loop, passing each retrieved video frame's `Mat` as the `img` parameter.
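The first steps of `update_mhi` are: difference the current grey frame against an older one from a ring buffer (`absdiff`), threshold that into a 0/1 silhouette (`threshold(..., 1, THRESH_BINARY)`), fold the silhouette into the motion history image (`updateMotionHistory`), and scale the result to 0..255 for display (`mhi.convertTo(...)`). OpenCV documents the update rule as: a pixel that moved now gets the current timestamp, a pixel that did not move and whose stamp is older than `timestamp - duration` is reset to 0, and every other pixel keeps its value. The plain-Java sketch below reproduces those steps on arrays so it runs without OpenCV; the class and method names are hypothetical, and the rounding/clamping in `toGrey` is an approximation of OpenCV's saturating conversion to 8 bits.

```java
import java.util.Arrays;

class MotionHistorySketch {

    /** Like absdiff + threshold(..., 1, THRESH_BINARY): 1 where the grey level changed by more than diffThreshold. */
    static int[][] silhouette(int[][] older, int[][] current, int diffThreshold) {
        int h = current.length, w = current[0].length;
        int[][] silh = new int[h][w];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++)
                silh[y][x] = Math.abs(current[y][x] - older[y][x]) > diffThreshold ? 1 : 0;
        return silh;
    }

    /** The documented updateMotionHistory rule, applied to mhi in place. */
    static void updateMotionHistory(int[][] silh, float[][] mhi, double timestamp, double duration) {
        for (int y = 0; y < mhi.length; y++) {
            for (int x = 0; x < mhi[y].length; x++) {
                if (silh[y][x] != 0) {
                    mhi[y][x] = (float) timestamp;   // moving now: stamp with current time
                } else if (mhi[y][x] < timestamp - duration) {
                    mhi[y][x] = 0f;                   // last motion too old: forget it
                }                                     // otherwise keep the older stamp
            }
        }
    }

    /** Same affine map as mhi.convertTo(mask, CV_8U, 255/D, (D - t) * 255/D), rounded and clamped to 0..255. */
    static int toGrey(float mhiValue, double timestamp, double duration) {
        double v = mhiValue * 255.0 / duration + (duration - timestamp) * 255.0 / duration;
        return (int) Math.max(0, Math.min(255, Math.round(v)));
    }

    public static void main(String[] args) {
        double duration = 1.5;                // seconds of history kept (example value)
        float[][] mhi = new float[1][3];      // one row of three pixels, no motion yet

        int[][] silh = silhouette(new int[][] {{0, 0, 0}}, new int[][] {{200, 10, 0}}, 30);
        updateMotionHistory(silh, mhi, 1.0, duration);                    // pixel 0 moves at t = 1.0
        updateMotionHistory(new int[][] {{0, 1, 0}}, mhi, 2.0, duration); // pixel 1 moves at t = 2.0

        System.out.println(Arrays.toString(mhi[0]));                      // [1.0, 2.0, 0.0]
        for (float v : mhi[0]) System.out.print(toGrey(v, 2.0, duration) + " "); // 85 255 0
        System.out.println();
    }
}
```

The newest motion maps to 255 and fades linearly to 0 over `duration` seconds, which is why the blue channel of `dst` shows recent movement brightest.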

```java
// Assumes the OpenCV 2.4.x Java bindings, where the motion-template functions
// (updateMotionHistory, calcMotionGradient, segmentMotion, calcGlobalOrientation)
// are in org.opencv.video.Video.
import static org.opencv.core.Core.*;
import static org.opencv.imgproc.Imgproc.*;
import static org.opencv.video.Video.*;

import java.util.ArrayList;
import java.util.List;

import org.opencv.core.*;

// Fields...
// Allocate mhi as Mat.zeros(size, CvType.CV_32FC1) before the first call;
// orient, mask and segmask can start as new Mat().
// buf (ring buffer of Constants.fps grey frames), last, size, startTime,
// videoSignalOkay, videoWidth, videoHeight, magnitude and isAnyTrack are
// fields declared elsewhere in the class.
Mat motion, mhi, orient, mask, segmask;

private void update_mhi(Mat img, Mat dst, int diff_threshold) {
    if (videoSignalOkay) {
        double timestamp = (System.nanoTime() - startTime) / 1e9; // seconds
        int idx1 = last, idx2;
        Mat silh;
        double angle, count;

        if (buf[last] == null) {
            buf[last] = new Mat();
        }
        cvtColor(img, buf[last], COLOR_BGR2GRAY);

        idx2 = (last + 1) % Constants.fps; // index of (last - (N-1))th frame
        last = idx2;

        // Until the ring buffer has filled, compare against a black frame
        // (absdiff needs two inputs of the same size and type).
        if (buf[idx2] == null || buf[idx2].empty()) {
            buf[idx2] = Mat.zeros(buf[idx1].size(), buf[idx1].type());
        }
        silh = buf[idx2];

        absdiff(buf[idx1], buf[idx2], silh);                     // frame difference
        threshold(silh, silh, diff_threshold, 1, THRESH_BINARY); // 0/1 silhouette
        updateMotionHistory(silh, mhi, timestamp, Constants.mhiDuration);

        // Scale the MHI to 0..255 so the most recent motion is brightest.
        mhi.convertTo(mask, mask.type(), 255.0 / Constants.mhiDuration,
                (Constants.mhiDuration - timestamp) * 255.0 / Constants.mhiDuration);

        dst.setTo(new Scalar(0));
        List<Mat> list = new ArrayList<Mat>(3);
        list.add(mask);
        list.add(Mat.zeros(mask.size(), mask.type()));
        list.add(Mat.zeros(mask.size(), mask.type()));
        merge(list, dst);

        calcMotionGradient(mhi, mask, orient, Constants.maxTimeDelta, Constants.minTimeDelta, 3);

        MatOfRect roi = new MatOfRect();
        segmentMotion(mhi, segmask, roi, timestamp, Constants.maxTimeDelta);

        Rect[] rois = roi.toArray();
        int total = rois.length;
        Rect comp_rect;
        Scalar color;

        for (int i = -1; i < total; i++) {
            if (i < 0) { // the whole frame
                comp_rect = new Rect(0, 0, videoWidth, videoHeight);
                color = new Scalar(255, 255, 255);
                magnitude = 100;
            } else { // one motion component
                comp_rect = rois[i];
                if (comp_rect.width >= videoWidth / 2 || comp_rect.height >= videoHeight / 2 ||
                        comp_rect.width < Constants.recfactorx || comp_rect.height < Constants.recfactory ||
                        comp_rect.width + comp_rect.height < (Constants.recfactorx * Constants.recfactory)) // reject very small things
                    continue;
                color = new Scalar(0, 0, 255);
                magnitude = 30;
            }

            Mat silhROI = silh.submat(comp_rect);
            Mat mhiROI = mhi.submat(comp_rect);
            Mat orientROI = orient.submat(comp_rect);
            Mat maskROI = mask.submat(comp_rect);

            angle = calcGlobalOrientation(orientROI, maskROI, mhiROI, timestamp, Constants.mhiDuration);
            angle = 360.0 - angle; // adjust for images with a top-left origin
            count = Core.norm(silhROI, NORM_L1); // number of moving (value 1) pixels in the region

            silhROI.release();
            mhiROI.release();
            orientROI.release();
            maskROI.release();

            // Skip regions with too few moving pixels, and full-width or full-height rectangles.
            if (count < comp_rect.height * comp_rect.width * Constants.pixelFactor ||
                    comp_rect.width == videoWidth || comp_rect.height == videoHeight) {
                continue;
            } else {
                isAnyTrack = true;
            }

            Point center = new Point((comp_rect.x + comp_rect.width / 2), (comp_rect.y + comp_rect.height / 2));

            // Optional optimizer (disabled): compare with the previously tracked regions
            // to suppress false detections in empty areas...
            /*if (isAnyTrack) {
                TrackingPojo trackPojo = new TrackingPojo(comp_rect, center, null);
                if (notNeededTracking(trackPojo)) {
                    isAnyTrack = false;
                    continue;
                } else {
                    trackList.add(trackPojo);
                    // Show the warning icon...
                    ControlManager.getTheInstance().toggleAlert(true);
                }
            }*/

            circle(img, center, (int) Math.round(magnitude * 1.2), color, 3, LINE_AA, 0);

            Point thePoint = new Point(
                    Math.round(center.x - magnitude * Math.sin(angle * Math.PI / 180)),
                    Math.round(center.y + magnitude * Math.cos(angle * Math.PI / 180)));
            Point thePoint2 = new Point(thePoint.x, thePoint.y - (comp_rect.height / 2 + 10));
            Point thePoint3 = new Point(thePoint2.x, thePoint2.y - 15);

            Core.putText(img, "(" + center.x + ", " + center.y + ")", thePoint, 16, 0.50, new Scalar(255, 0, 0 ...
```

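One step in the loop is easy to misread. Because `threshold(silh, silh, diff_threshold, 1, THRESH_BINARY)` leaves `silh` holding only 0 and 1, `Core.norm(silhROI, NORM_L1)` is simply the number of moving pixels inside the candidate rectangle $R$ (`comp_rect`, width $w_R$, height $h_R$), so the `count < ... * Constants.pixelFactor` test is a threshold on the fraction of the rectangle that moved:

$$
\text{count} = \lVert \text{silh}_R \rVert_1 = \sum_{(x,y)\in R} \lvert \text{silh}(x,y) \rvert = \#\{(x,y)\in R : \text{silh}(x,y) = 1\},
\qquad
R \text{ passes} \iff \frac{\text{count}}{w_R\,h_R} \ge \text{pixelFactor}.
$$

For example, with a hypothetical `pixelFactor` of 0.1, a 40×30 region needs at least 120 moving pixels. The same `if` also rejects any rectangle as wide or as tall as the frame, so as written the `i = -1` whole-frame pass (white circle, `magnitude = 100`) is always skipped before anything is drawn.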