Rotate Landmarks

Hello. I have various files with 2D coordinates describing people's faces. In OpenCV, I'm using the circle function to draw each of these coordinates on a black background (Mat::zeros), as in the image below.

In the program, the points are stored in a 2 x 70 matrix, where 70 is the number of landmarks used. The first row stores the X coordinates and the second row stores the Y coordinates.

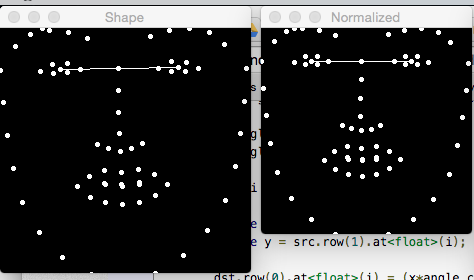

I have the centroid of each eye and, as can be seen in the image, a line linking these centroids. My objective is to bring the angle of this line to 0 (in the example image it is about 1.9 degrees) and to rotate the rest of the shape by the same rotation applied to the line, i.e. in this case, rotate the whole shape by -1.9 degrees, I guess.
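For reference, the angle of the eye line can be computed from the two centroids with `atan2`. This is only a minimal sketch in plain C++; `Point2` and `eyeLineAngleDegrees` are hypothetical names chosen for illustration, not OpenCV types or functions.

```cpp
#include <cmath>

// Hypothetical minimal point type (stand-in for whatever point type is used).
struct Point2 { double x, y; };

// Angle, in degrees, of the line from the left-eye centroid to the
// right-eye centroid, measured from the positive X axis.
double eyeLineAngleDegrees(Point2 leftEye, Point2 rightEye) {
    const double kPi = std::acos(-1.0);
    const double dx = rightEye.x - leftEye.x;
    const double dy = rightEye.y - leftEye.y;
    return std::atan2(dy, dx) * 180.0 / kPi;
}
```

The result is 0 when both centroids have the same Y, which is the target state after the rotation.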

I was using warpAffine to do the rotation, but it applies the operation to the image (a Mat object), not to the coordinates, and when I tried to use it on my Mat with the coordinates (the 2x70 matrix) it didn't work. My question is: is there any way to perform the rotation (and then scaling and translation) on the points, not on the image?

I've not seen a function that rotates a point, but you could easily write it yourself. cv::gemm does matrix multiplication, so you'd have to create a homogeneous vector (x, y, 1) for each point and multiply your affine matrix (e.g. the one from getRotationMatrix2D) by it.
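To illustrate the homogeneous-vector idea, here is a minimal sketch in plain C++ without OpenCV types. `Point2`, `Affine2x3`, `rotationAbout` and `applyAffine` are hypothetical names. The matrix layout follows the formula documented for getRotationMatrix2D; it is an assumption that this matches the convention you need. OpenCV's cv::transform, given a vector of 2D points and a 2x3 matrix, should perform the same per-point multiplication.

```cpp
#include <array>
#include <cmath>
#include <vector>

struct Point2 { double x, y; };

// 2x3 affine matrix stored row by row.
using Affine2x3 = std::array<std::array<double, 3>, 2>;

// Rotation (plus uniform scale) about `center`, using the layout documented
// for getRotationMatrix2D:
//   [  a  b  (1 - a) * cx - b * cy ]
//   [ -b  a  b * cx + (1 - a) * cy ]
// with a = scale * cos(angle) and b = scale * sin(angle).
Affine2x3 rotationAbout(Point2 center, double angleDeg, double scale) {
    const double kPi = std::acos(-1.0);
    const double a = scale * std::cos(angleDeg * kPi / 180.0);
    const double b = scale * std::sin(angleDeg * kPi / 180.0);
    Affine2x3 m = {{
        {{ a, b, (1.0 - a) * center.x - b * center.y }},
        {{ -b, a, b * center.x + (1.0 - a) * center.y }}
    }};
    return m;
}

// Multiplies the matrix by (x, y, 1) for every point.
std::vector<Point2> applyAffine(const Affine2x3& m,
                                const std::vector<Point2>& pts) {
    std::vector<Point2> out;
    out.reserve(pts.size());
    for (const Point2& p : pts) {
        out.push_back({ m[0][0] * p.x + m[0][1] * p.y + m[0][2],
                        m[1][0] * p.x + m[1][1] * p.y + m[1][2] });
    }
    return out;
}
```

With this layout, passing the measured eye-line angle (computed as atan2(dy, dx) in image coordinates) as `angleDeg` levels the line, so the sign to use depends on the matrix convention rather than being always negative.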

Hi @FooBar, doing the multiplication directly was the first thing I thought of. Actually, I already confirmed (calculating manually) that it solves the problem if I simply multiply the matrix [cos angle, -sin angle; sin angle, cos angle] (where ";" separates rows) by my 2x70 matrix. Could you provide a code example for that? I haven't had much luck implementing it so far.
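A minimal sketch of that direct multiplication on the 2 x N layout (one row of X values, one row of Y values), in plain C++. `Landmarks` and `rotateLandmarks` are hypothetical names, and rotating about a chosen center (for example the midpoint between the eyes) is an assumption rather than something stated above. Note that a rotation matrix has the form [cos θ, -sin θ; sin θ, cos θ], with a minus sign on one off-diagonal entry.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// The two rows of the 2 x N landmark matrix.
struct Landmarks {
    std::vector<double> xs;  // first row: X coordinates
    std::vector<double> ys;  // second row: Y coordinates
};

// Applies [cos t, -sin t; sin t, cos t] to every column, about (cx, cy):
// shift the center to the origin, rotate, then shift back.
Landmarks rotateLandmarks(const Landmarks& in, double angleRad,
                          double cx, double cy) {
    assert(in.xs.size() == in.ys.size());
    const double c = std::cos(angleRad);
    const double s = std::sin(angleRad);
    Landmarks out;
    out.xs.resize(in.xs.size());
    out.ys.resize(in.ys.size());
    for (std::size_t i = 0; i < in.xs.size(); ++i) {
        const double dx = in.xs[i] - cx;
        const double dy = in.ys[i] - cy;
        out.xs[i] = c * dx - s * dy + cx;
        out.ys[i] = s * dx + c * dy + cy;
    }
    return out;
}
```

With this matrix form, passing `-std::atan2(dy, dx)` as `angleRad`, where (dx, dy) is the vector between the eye centroids, makes the eye line horizontal.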