Cascade Classifier HAAR / LBP Advice

Hi,

I am using OpenCV and Python to train Haar and LBP classifiers to detect white blood cells in video frames. Since the problem is essentially 2D, it should be easier than developing other object classifiers, and there is great consistency between video frames.

So far I have been using this tutorial: http://coding-robin.de/2013/07/22/tra...

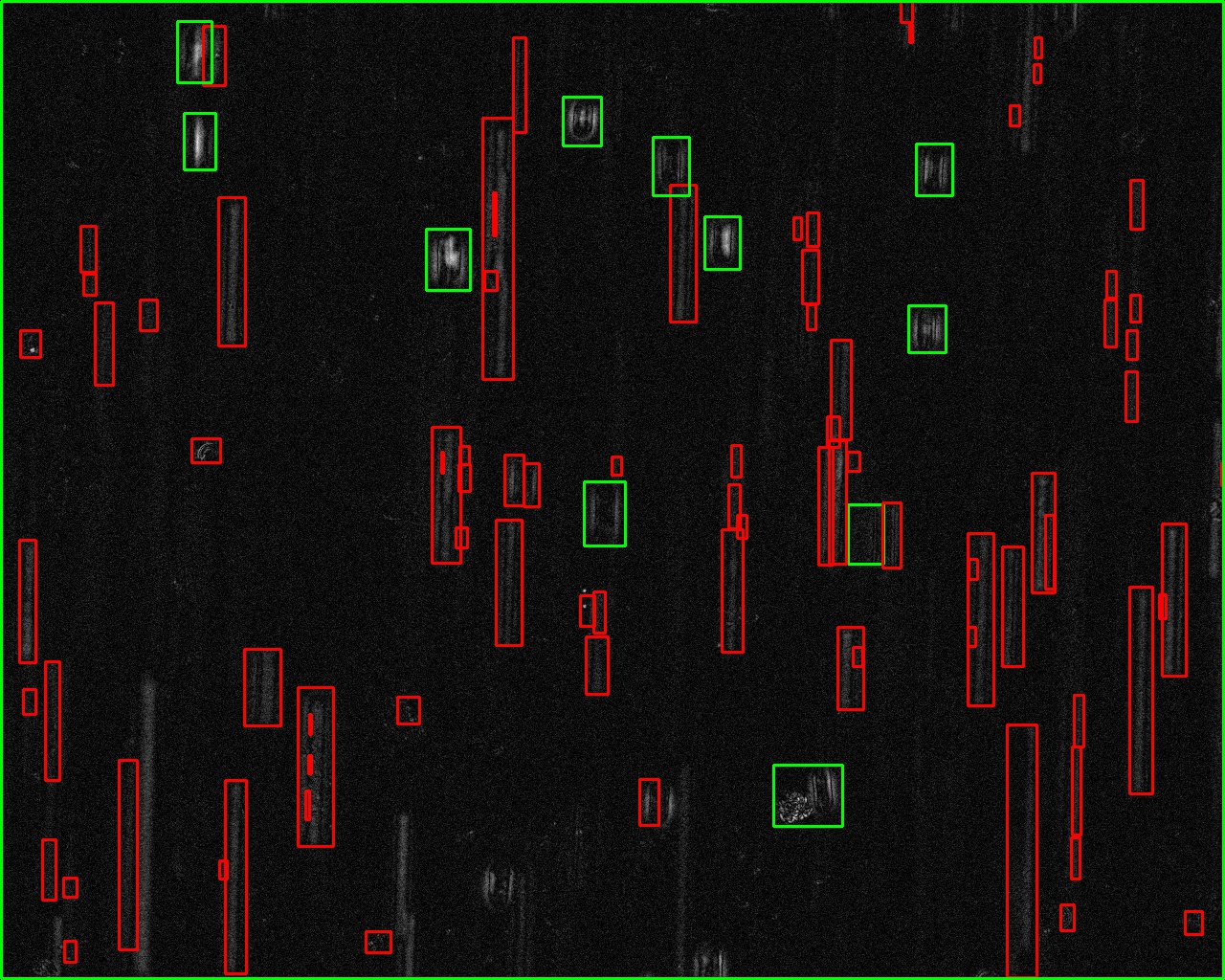

This is an example frame from the video, where I am trying to detect the smaller bright objects:

Positive images: number = 60, file type = JPG, width = 50, height = 80

Negative images: number = 600, file type = JPG, width = 50, height = 80

N.B. The negative images were extracted as random boxes from all frames in the video; I then simply deleted any that I considered to contain a cell, i.e. a positive image.

Having set up the images for the problem, I run the training following the instructions on Coding Robin:

find ./positive_images -iname "*.jpg" > positives.txt

find ./negative_images -iname "*.jpg" > negatives.txt

perl bin/createsamples.pl positives.txt negatives.txt samples 1500 "opencv_createsamples -bgcolor 0 -bgthresh 0 -maxxangle 0.1 -maxyangle 0.1 -maxzangle 0.1 -maxidev 40 -w 50 -h 80"

find ./samples -name '*.vec' > samples.txt

./mergevec samples.txt samples.vec

opencv_traincascade -data classifier -vec samples.vec -bg negatives.txt \
  -numStages 20 -minHitRate 0.999 -maxFalseAlarmRate 0.5 -numPos 60 \
  -numNeg 600 -w 50 -h 80 -mode ALL -precalcValBufSize 16384 \
  -precalcIdxBufSize 16384

This throws an error:

Train dataset for temp stage can not be filled. Branch training terminated.

However, if I change the parameters (minHitRate and maxFalseAlarmRate), the file 'cascade.xml' is generated, with both Haar and LBP features.
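For context on that error: in traincascade each stage must keep at least minHitRate of the positives and let through at most maxFalseAlarmRate of the negatives, so the targets compound over numStages. The sketch below is only that arithmetic for the values in the command above. The estimate of how many background windows have to be scanned per stage is an assumption (it supposes every earlier stage passes exactly maxFalseAlarmRate of the windows), not something traincascade reports.

```python
# Values taken from the opencv_traincascade command above.
num_stages = 20
min_hit_rate = 0.999
max_false_alarm_rate = 0.5
num_neg = 600

# Per-stage targets multiply across the cascade.
overall_hit_rate = min_hit_rate ** num_stages
overall_false_alarm = max_false_alarm_rate ** num_stages


def windows_needed(stage):
    """Rough count of background windows to scan at a 0-based stage.

    Assumption: each earlier stage rejects exactly (1 - max_false_alarm_rate)
    of the background windows it sees.
    """
    return num_neg / (max_false_alarm_rate ** stage)


print("overall hit rate target: %.4f" % overall_hit_rate)
print("overall false alarm target: %.2e" % overall_false_alarm)
for stage in (0, 5, 10, 19):
    print("stage %2d: about %d background windows" % (stage, windows_needed(stage)))
```

If the background images cannot supply that many windows that still fool the earlier stages, a later stage has nothing left to train on, which would be consistent with the message above. Fewer stages or a larger, more varied negative set are the usual things to try.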

To test the classifier on my image I have a Python script:

import cv2

imagePath = "./examples/150224_Luc_1_MMImages_1_0001.png"
cascPath = "../classifier/cascade.xml"

# Load the trained cascade and the test frame
leukocyteCascade = cv2.CascadeClassifier(cascPath)
image = cv2.imread(imagePath)
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

# Run the detector; sizes are (width, height) in pixels
leukocytes = leukocyteCascade.detectMultiScale(
    gray,
    scaleFactor=1.2,
    minNeighbors=5,
    minSize=(30, 70),
    maxSize=(60, 90),
    flags=cv2.cv.CV_HAAR_SCALE_IMAGE  # OpenCV 2.4 name; cv2.CASCADE_SCALE_IMAGE in OpenCV 3+
)

print("Found {0} leukocytes!".format(len(leukocytes)))

# Draw a rectangle around the leukocytes
for (x, y, w, h) in leukocytes:
    cv2.rectangle(image, (x, y), (x+w, y+h), (0, 255, 0), 2)

cv2.imwrite('output_frame.png', image)

This is not finding the objects I want. When I run it with different parameters it sometimes finds 67 objects and other times 0, but never the ones that I am trying to detect. Can anyone help me adjust the code to find the objects correctly? Many thanks.
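One thing that may be worth checking is which window sizes the detector actually tests with these settings. The sketch below is a simplified model of a scale sweep, not OpenCV's implementation: it assumes the search starts at the training size (50 x 80, from -w 50 -h 80), multiplies by scaleFactor at each step, skips windows below minSize, and stops at the first window above maxSize. The function name is hypothetical, and the real detector also stops when the window outgrows the image, which is not modelled here.

```python
def evaluated_window_sizes(train_size, scale_factor, min_size, max_size):
    """List the (width, height) windows a simple scale sweep would test.

    Assumed rule: start at train_size, grow by scale_factor per step,
    skip windows smaller than min_size, stop once max_size is exceeded.
    """
    if scale_factor <= 1.0:
        raise ValueError("scale_factor must be greater than 1")
    sizes = []
    factor = 1.0
    while True:
        w = int(round(train_size[0] * factor))
        h = int(round(train_size[1] * factor))
        if w > max_size[0] or h > max_size[1]:
            break
        if w >= min_size[0] and h >= min_size[1]:
            sizes.append((w, h))
        factor *= scale_factor
    return sizes


# Settings from the script above: only the training size itself fits.
print(evaluated_window_sizes((50, 80), 1.2, (30, 70), (60, 90)))

# A finer step (hypothetical value) gives a few more sizes to try.
print(evaluated_window_sizes((50, 80), 1.05, (30, 70), (60, 90)))
```

Under these assumptions the second step with scaleFactor=1.2 is already 60 x 96, which is taller than maxSize, so only the 50 x 80 window is evaluated. That is fine if every cell is close to that size in the frame, but it leaves no tolerance otherwise.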

I would suggest that you start by removing the noise in the image in which you run the detection; then try another approach, such as threshold (binary image) -> find contours -> fit ellipse -> filter out long ellipses (ellipses with high elongation). Combining a cascade classifier with another classifier to eliminate the false positives seems too complex for your case (IMHO).

@thdrksdfthmn you suggested the best and easiest solution (IMHO).

Thanks, the thresholding approach was the first method I tried, but the results were not accurate enough.

I am trying an experimental approach. What is the target accuracy?

The target accuracy is 90 to 95%, but the more accurate the better, obviously. Actually I have had good results with a classifier, but I am now trying to better optimise the training. I have reduced the size of the positive and negative images from 100 x 100 to 50 x 80, and now I cannot get as good a result with Haar features.
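One way to put a number on that target is to hand-label the cells in a few frames and compare them with the detections. The sketch below assumes boxes in the (x, y, w, h) form returned by detectMultiScale, counts a detection as correct when it overlaps an unmatched labelled box with an intersection-over-union of at least 0.5, and uses simple greedy matching. The function names, the 0.5 limit and the example boxes are hypothetical, not measurements from the video.

```python
def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    ax, ay, aw, ah = a
    bx, by, bw, bh = b
    iw = min(ax + aw, bx + bw) - max(ax, bx)
    ih = min(ay + ah, by + bh) - max(ay, by)
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    return inter / float(aw * ah + bw * bh - inter)


def precision_recall(detections, truths, min_iou=0.5):
    """Greedily match detections to labelled boxes; return (precision, recall)."""
    unmatched = list(truths)
    true_positives = 0
    for det in detections:
        if not unmatched:
            break
        best = max(unmatched, key=lambda t: iou(det, t))
        if iou(det, best) >= min_iou:
            unmatched.remove(best)  # each labelled box can be matched once
            true_positives += 1
    precision = true_positives / float(len(detections)) if detections else 0.0
    recall = true_positives / float(len(truths)) if truths else 0.0
    return precision, recall


# Made-up boxes: two labelled cells, three detections (one false positive).
truths = [(10, 10, 50, 80), (200, 100, 50, 80)]
detections = [(12, 14, 50, 80), (400, 300, 50, 80), (205, 98, 50, 80)]
precision, recall = precision_recall(detections, truths)
print("precision=%.2f recall=%.2f" % (precision, recall))
```

Running the same measurement on both the thresholding output and the cascade output would make the two approaches directly comparable.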

What was the accuracy with the thresholding approach? And what is it now? Have you asked for ideas on the first approach? Maybe you can improve it very easily.

@WillyWonka1964 I would like to share my approach as draft code, if you permit.

Yes, please; any help is appreciated.