Different results of gpu::HOGDescriptor and cv::HOGDescriptor

Hi,

We are evaluating the performance improvement of the GPU implementation of HOGDescriptor over the CPU implementation. During the tests, we compared the descriptors computed by the GPU and the CPU implementations and saw that they differ. Why are the descriptors different? Is this a bug?

The following code shows our test application. We use the default people detector with default parameters on a frame from the OpenCV example video. We use OpenCV 2.4.10 with CUDA 6.5. The descriptor was computed at Rect(130, 80, 64, 128).

    #include <iostream>
    #include <opencv2/opencv.hpp>
    #include <opencv2/gpu/gpu.hpp>

    using namespace std;
    using namespace cv;

    int main()
    {
        // load the image, crop the 64x128 window, and upload it to the GPU
        Mat img(imread("frame_7.png", CV_LOAD_IMAGE_GRAYSCALE), Rect(130, 80, 64, 128));
        gpu::GpuMat gpu_img;
        gpu_img.upload(img);

        // initialize the detector and both HOGDescriptor objects with identical parameters
        vector<float> detector = HOGDescriptor::getDefaultPeopleDetector();
        gpu::HOGDescriptor gpu_hog(Size(64, 128), Size(16, 16), Size(8, 8), Size(8, 8), 9);
        HOGDescriptor cpu_hog(Size(64, 128), Size(16, 16), Size(8, 8), Size(8, 8), 9);
        gpu_hog.setSVMDetector(detector);
        cpu_hog.setSVMDetector(detector);

        // get descriptor vector -- cpu
        vector<float> cpu_descriptor, gpu_descriptor_vec;
        cpu_hog.compute(img, cpu_descriptor, Size(8, 8), Size(0, 0));

        // get descriptor vector -- gpu (download to host memory, then copy into a vector)
        gpu::GpuMat gpu_descriptor_temp;
        gpu_hog.getDescriptors(gpu_img, Size(8, 8), gpu_descriptor_temp);
        Mat gpu_descriptor(gpu_descriptor_temp);
        gpu_descriptor.copyTo(gpu_descriptor_vec);

        // compare cpu_descriptor <--> gpu_descriptor; stop at the shorter vector
        for (size_t i = 0; i < cpu_descriptor.size() && i < gpu_descriptor_vec.size(); i++) {
            cout << i << " : " << cpu_descriptor[i] << " <--> " << gpu_descriptor_vec[i] << endl;
        }

        return 0;
    }

Here is the test frame.

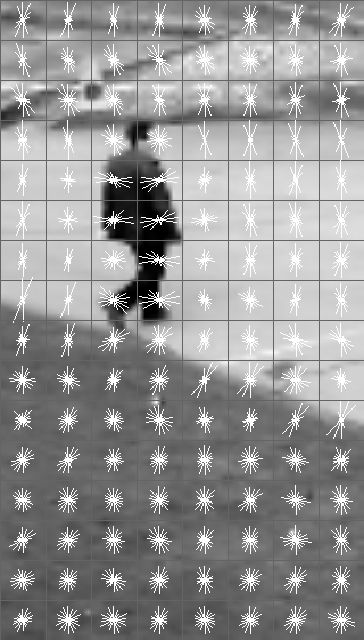

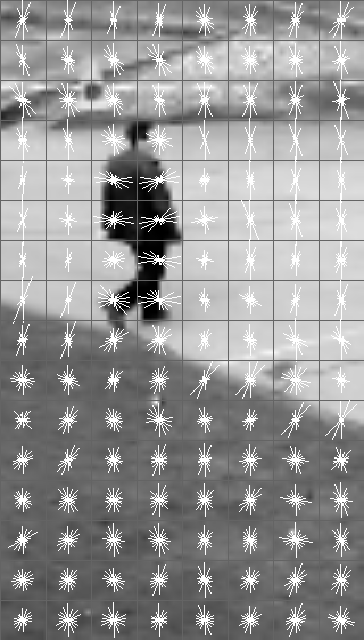

If we visualize the descriptors with the method from here, they look very similar, but block (0,0) shows differences in magnitude and block (3,5) shows different orientations.

CPU HOGDescriptor visualization

GPU HOGDescriptor visualization
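To inspect individual blocks such as (0,0) or (3,5) numerically rather than visually, it helps to know where a block starts inside the flat descriptor vector. The sketch below computes that offset for the parameters used in the question (64x128 window, 16x16 blocks, 8x8 stride and cells, 9 bins). The `HogLayout` helper is hypothetical, and the column-by-column block order (x outer, y inner) is an assumption that should be checked against the visualization code actually used.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper describing the HOG window geometry from the question.
struct HogLayout {
    int win_w, win_h, block, stride, cell, nbins;

    int blocksX() const { return (win_w - block) / stride + 1; }
    int blocksY() const { return (win_h - block) / stride + 1; }

    // cells per block times orientation bins
    int valuesPerBlock() const { return (block / cell) * (block / cell) * nbins; }

    std::size_t descriptorLength() const {
        return static_cast<std::size_t>(blocksX()) * blocksY() * valuesPerBlock();
    }

    // ASSUMPTION: blocks are stored column by column (x outer, y inner).
    std::size_t blockOffset(int bx, int by) const {
        assert(bx >= 0 && bx < blocksX() && by >= 0 && by < blocksY());
        return (static_cast<std::size_t>(bx) * blocksY() + by) * valuesPerBlock();
    }
};
```

With `HogLayout layout = {64, 128, 16, 8, 8, 9};`, the values of one block are the `layout.valuesPerBlock()` consecutive entries starting at `layout.blockOffset(bx, by)`, which can then be printed side by side for the CPU and GPU vectors.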

Could anyone give me some hints why the descriptors are different?

Best regards,

Siegfried

I don't have much to add, but here's an image of the difference. The errors look relatively minor. I wonder if it's simply floating-point error. That might explain why the errors in angle appear smaller than the errors in magnitude.
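To put a number on "relatively minor", one option is to compute the largest element-wise difference and the Euclidean norm of the difference between the two descriptor vectors. The following is a minimal sketch in plain C++; `DescriptorDiff` and `compareDescriptors` are hypothetical names, not part of OpenCV.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical summary of how far two descriptor vectors are apart.
struct DescriptorDiff {
    float max_abs;       // largest |a[i] - b[i]|
    std::size_t argmax;  // index where the largest difference occurs
    float l2;            // Euclidean norm of (a - b)
};

DescriptorDiff compareDescriptors(const std::vector<float>& a,
                                  const std::vector<float>& b)
{
    assert(a.size() == b.size());  // both descriptors must have the same length
    DescriptorDiff d = {0.0f, 0, 0.0f};
    double sum_sq = 0.0;           // accumulate in double to limit rounding
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float diff = std::fabs(a[i] - b[i]);
        if (diff > d.max_abs) {
            d.max_abs = diff;
            d.argmax = i;
        }
        sum_sq += static_cast<double>(diff) * diff;
    }
    d.l2 = static_cast<float>(std::sqrt(sum_sq));
    return d;
}
```

Calling `compareDescriptors(cpu_descriptor, gpu_descriptor_vec)` on the vectors from the question would show whether the largest deviation is near single-precision rounding level or clearly larger, which helps separate rounding noise from a real algorithmic difference.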

You are right, the differences are minor. In the OpenCV HOG example it's possible to switch between the CPU and the GPU implementation, and both use the same model (the default people detector). The detection results look quite similar, so there cannot be any big differences, but I am wondering what causes the "small" difference in the descriptors. You are probably right that it's a floating-point issue (for example, 16-bit float vs. 32-bit float).
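Even when both sides use 32-bit floats, the order in which values are added can change the result, because floating-point addition is not associative. The sketch below sums the same four values once left to right and once as a pairwise (tree) reduction. Whether gpu::HOGDescriptor actually accumulates in a different order than the CPU code is an assumption here; the example only illustrates the effect, and both function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Left-to-right accumulation, as a simple sequential loop would do.
float sumSequential(const std::vector<float>& v)
{
    float acc = 0.0f;
    for (std::size_t i = 0; i < v.size(); ++i) {
        acc += v[i];
    }
    return acc;
}

// Pairwise (tree) reduction over [begin, end): sums each half, then combines.
float sumPairwise(const std::vector<float>& v, std::size_t begin, std::size_t end)
{
    assert(begin <= end && end <= v.size());
    if (end - begin == 0) return 0.0f;
    if (end - begin == 1) return v[begin];
    const std::size_t mid = begin + (end - begin) / 2;
    return sumPairwise(v, begin, mid) + sumPairwise(v, mid, end);
}
```

For the input `{1e8f, 1.0f, -1e8f, 1.0f}` and strict IEEE 754 single-precision arithmetic, the sequential sum gives 1 while the pairwise sum gives 0, because each `1.0f` is lost when it is added to a value of magnitude 1e8. Differences of this kind are tiny relative to the operands, which would be consistent with the small deviations seen in the descriptors.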