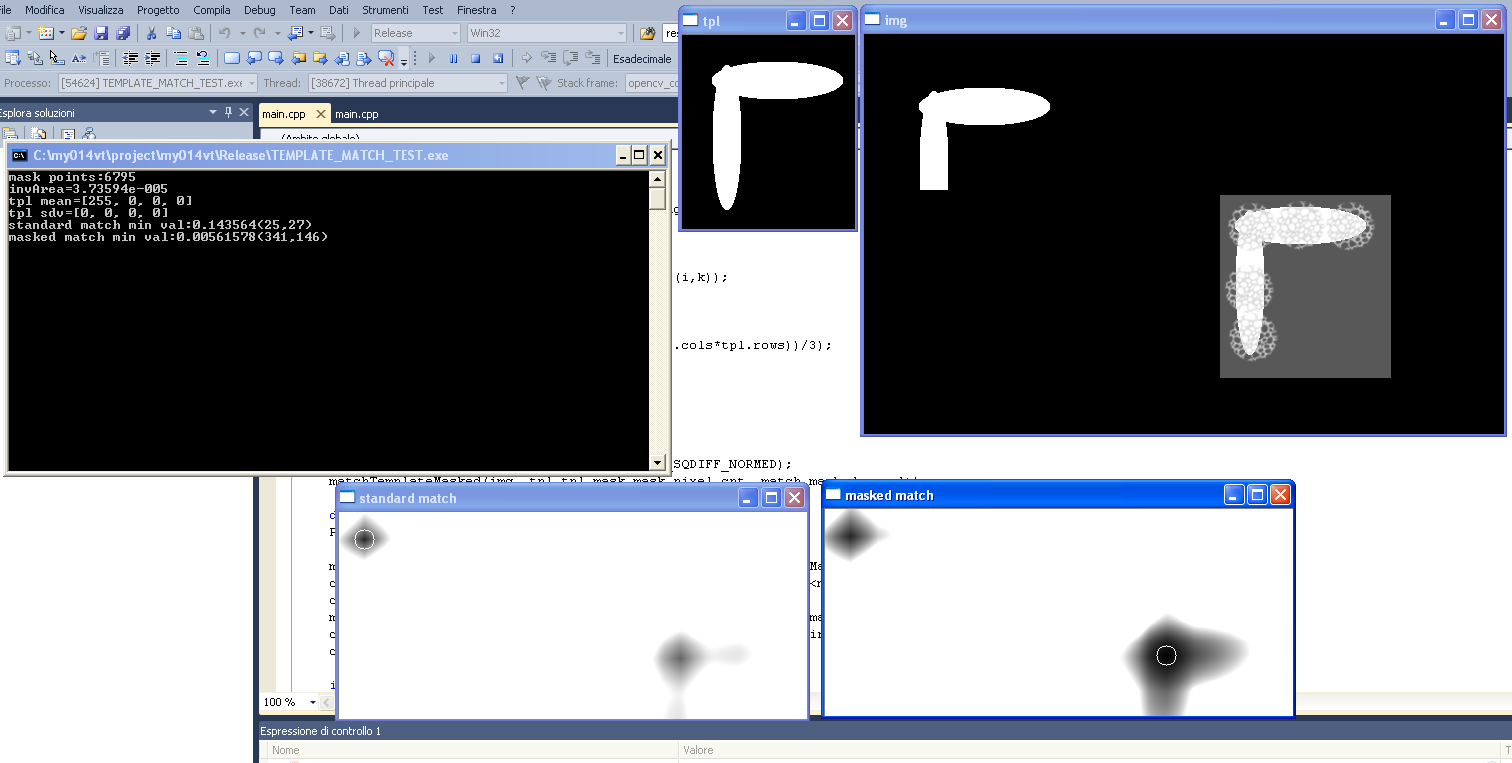

If you're using simple cross-correlation, you could use a mask to set those excluded areas to 0, which prevents them from contributing to the cross-correlation sum. This tactic won't work with the normalized methods, nor with the correlation-coefficient methods. The normalized methods divide by norms computed over the entire window, and the coefficient methods subtract the mean of all template pixels, so the zeroed pixels still skew the result.
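A small pure-Python sketch of the point above, scoring a single template position. `ccorr_at` mirrors the plain cross-correlation sum (what OpenCV calls TM_CCORR) and `ccoeff_at` the mean-subtracted coefficient version (TM_CCOEFF). The function names and toy values are hypothetical stand-ins, not OpenCV code. Two image windows that differ only under the masked template pixel get identical cross-correlation scores but different coefficient scores.

```python
# Hypothetical pure-Python helpers that score one template position.

def apply_mask(template, mask):
    """Zero every template pixel whose mask entry is 0 (the excluded area)."""
    return [[t if m else 0 for t, m in zip(t_row, m_row)]
            for t_row, m_row in zip(template, mask)]


def ccorr_at(image, template, top, left):
    """Plain cross-correlation: sum of template * image over the window."""
    total = 0.0
    for r, row in enumerate(template):
        for c, t in enumerate(row):
            total += t * image[top + r][left + c]
    return total


def ccoeff_at(image, template, top, left):
    """Correlation coefficient: subtract each side's mean over the whole
    window first, so zeroed template pixels still pull on the result."""
    h, w = len(template), len(template[0])
    window = [[image[top + r][left + c] for c in range(w)] for r in range(h)]
    t_mean = sum(map(sum, template)) / (h * w)
    i_mean = sum(map(sum, window)) / (h * w)
    total = 0.0
    for r in range(h):
        for c in range(w):
            total += (template[r][c] - t_mean) * (window[r][c] - i_mean)
    return total


if __name__ == "__main__":
    masked = apply_mask([[5, 5], [5, 9]], [[1, 1], [1, 0]])  # [[5, 5], [5, 0]]
    # Two windows that differ only under the masked pixel.
    a = [[5, 5], [5, 1]]
    b = [[5, 5], [5, 100]]
    print(ccorr_at(a, masked, 0, 0), ccorr_at(b, masked, 0, 0))    # 75.0 75.0
    print(ccoeff_at(a, masked, 0, 0), ccoeff_at(b, masked, 0, 0))  # 15.0 -356.25
```

The coefficient score changes because each zeroed template pixel becomes `-t_mean` after mean subtraction, so the image pixel beneath it is weighted instead of ignored.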

I'm working on a warpPerspective -> matchTemplate setup, and was about ready to rewrite matchTemplate so it could handle the quadrilaterals I was getting from warpPerspective (inscribed in rectangles padded with 0s). Then I realized I could instead pass the inverse transform matrix to warpPerspective and warp the larger image rather than the template. That way the template stays a full rectangle, and the zero padding ends up around the border of the larger image instead. (I'm more interested in the center of the image, so I can assume the match will be somewhere in its interior.)
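A minimal pure-Python sketch of the inverse-warp idea, assuming `H` is the transform that would otherwise be applied to the template. `warp_perspective` mimics OpenCV's default convention (the matrix maps source to destination, and each destination pixel is sampled through its inverse) using nearest-neighbor sampling. `best_sqdiff_match` stands in for matchTemplate with TM_SQDIFF. All helper names and the toy one-pixel-shift `H` are hypothetical. In OpenCV itself the same step would be roughly `cv2.warpPerspective(image, np.linalg.inv(H), dsize)` (or passing `H` with the `cv2.WARP_INVERSE_MAP` flag), followed by `cv2.matchTemplate` with the unwarped template.

```python
# Hypothetical pure-Python stand-ins for warpPerspective and matchTemplate.

def invert_3x3(m):
    """Invert a 3x3 matrix via its adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if det == 0:
        raise ValueError("transform is singular")
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[v / det for v in row] for row in adj]


def warp_perspective(src, M, out_w, out_h):
    """Nearest-neighbor warp where M maps src -> dst: each dst pixel is
    sampled at M^-1 (x, y); anything falling outside src stays 0."""
    Mi = invert_3x3(M)
    src_h, src_w = len(src), len(src[0])
    dst = [[0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            X = Mi[0][0] * x + Mi[0][1] * y + Mi[0][2]
            Y = Mi[1][0] * x + Mi[1][1] * y + Mi[1][2]
            W = Mi[2][0] * x + Mi[2][1] * y + Mi[2][2]
            if W == 0:
                continue
            sx, sy = round(X / W), round(Y / W)
            if 0 <= sx < src_w and 0 <= sy < src_h:
                dst[y][x] = src[sy][sx]
    return dst


def best_sqdiff_match(image, template):
    """Exhaustive sum-of-squared-differences search; returns (top, left)."""
    th, tw = len(template), len(template[0])
    best = None
    for top in range(len(image) - th + 1):
        for left in range(len(image[0]) - tw + 1):
            score = sum((image[top + r][left + c] - template[r][c]) ** 2
                        for r in range(th) for c in range(tw))
            if best is None or score < best[0]:
                best = (score, top, left)
    return best[1], best[2]


if __name__ == "__main__":
    # Toy setup: `scene` is in the template's frame, and the camera image
    # is that scene warped by H (a one-pixel shift to the right here).
    scene = [[4 * r + c + 1 for c in range(4)] for r in range(4)]
    template = [row[1:3] for row in scene[1:3]]        # [[6, 7], [10, 11]]
    H = [[1, 0, 1], [0, 1, 0], [0, 0, 1]]
    image = warp_perspective(scene, H, 4, 4)

    # Warping the template with H pads it with zeros...
    print(warp_perspective(template, H, 2, 2))         # [[0, 6], [0, 10]]
    # ...whereas warping the image with H^-1 leaves the template intact
    # and pushes the zero padding to the image border.
    rectified = warp_perspective(image, invert_3x3(H), 4, 4)
    print(best_sqdiff_match(rectified, template))      # (1, 1)
```

Only the last column of `rectified` picks up zeros, so the search is unaffected as long as the true match lies in the interior of the image.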