depth from disparity

Hello everyone,

I have computed a disparity map using OpenCV in Python, but my goal is to get the real depth from this disparity map.

Steps completed:

1. Take 40 calibration pictures with 2 side-by-side cameras

2. Calibrate each camera individually using the cv2.calibrateCamera() function

3. Stereo calibration with the cv2.stereoCalibrate() function

4. Compute the rectification rotation matrices with the cv2.stereoRectify() function

5. Undistort the images from both cameras with the cv2.initUndistortRectifyMap() function

6. Use the Stereo SGBM algorithm to create a disparity map

7. Adjust the Stereo SGBM parameters to get the best possible result for the disparity map

8. Use the cv2.reprojectImageTo3D() function to get real 3D coordinates (depth map)

All my results up to step 7 seem to be correct (see images below).

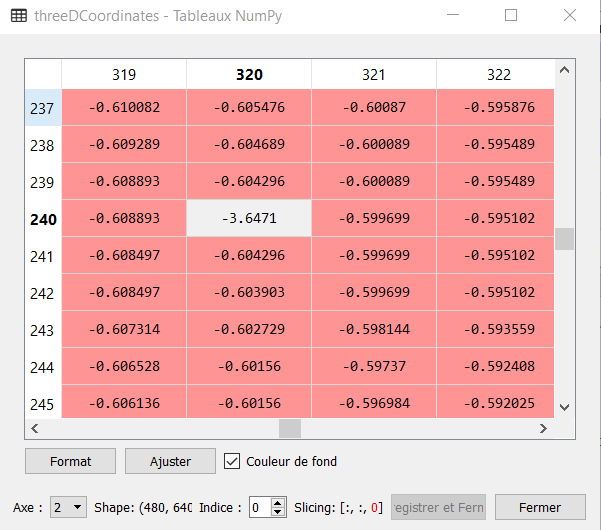

However, the result given by cv2.reprojectImageTo3D() is totally incorrect, and I cannot work out where the problem is. The calibration was done with units in [cm], so for an object placed at 50 cm the result should be near 50, but the function returns -0.6. Any idea what the problem could be?

My results are the following, for a bottle placed 50 cm from the 2 cameras:

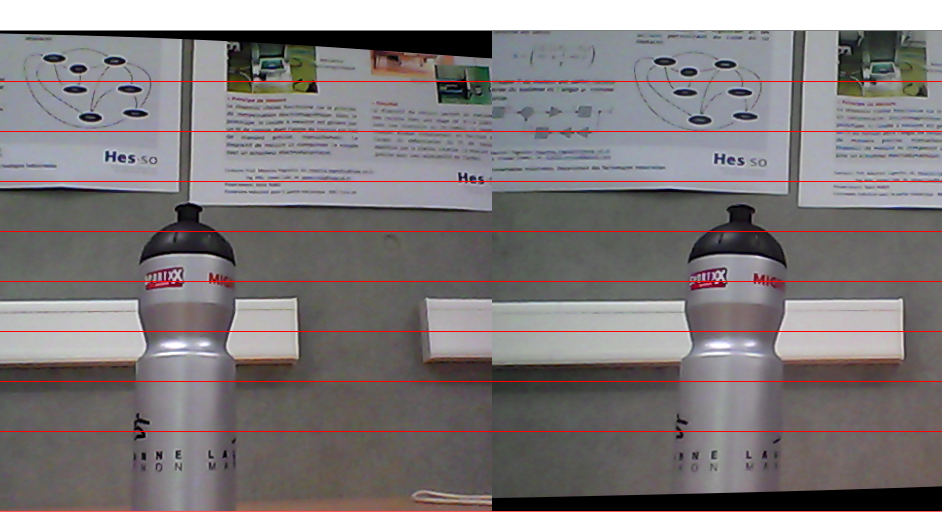

Images after undistortion:

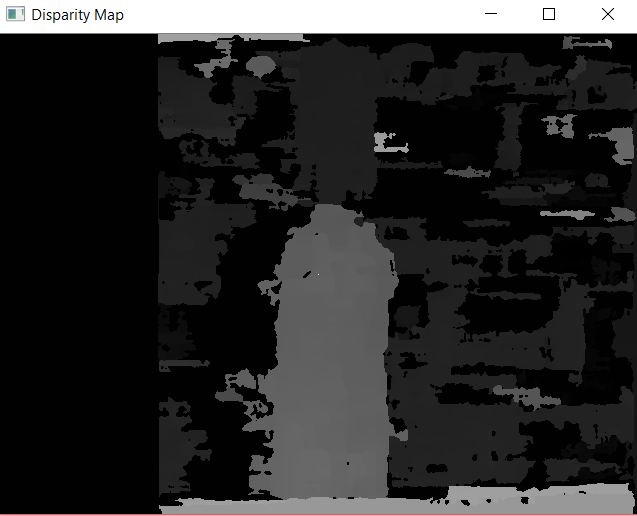

Disparity map:

Depth map (-3.64 corresponds to a white pixel that I added manually to the disparity map for visualization purposes, as you can see in the image above. Every pixel near that value should be around 50, but the function gives -0.6):

Any help would be very welcome!

Can you try calculating the depth value manually once? If not, please post the focal length from the camera matrix, the baseline of your stereo camera setup, the image size, and the actual disparity value at that pixel.
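For reference, the manual check suggested above can be sketched with the usual rectified-stereo relation Z = f * B / d. The numbers below are hypothetical and not taken from this thread: a focal length of 700 px, a baseline of 6 cm, and a disparity of 84 px. The function name `depth_from_disparity` is also just an illustrative choice.

```python
def depth_from_disparity(focal_px, baseline, disparity_px):
    """Depth for a rectified stereo pair: Z = f * B / d.

    focal_px     -- focal length in pixels (from the camera matrix)
    baseline     -- distance between the cameras, in the calibration unit
    disparity_px -- disparity at the pixel, in pixels (true value, not scaled)
    The result is in the same unit as the baseline.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive to give a finite depth")
    return focal_px * baseline / disparity_px


# Hypothetical values, chosen only to illustrate the formula
focal_px = 700.0      # pixels
baseline_cm = 6.0     # centimetres
disparity_px = 84.0   # pixels

depth_cm = depth_from_disparity(focal_px, baseline_cm, disparity_px)
print(depth_cm)
```

If the value computed this way is plausible while the function output is not, the calibration is probably fine and the issue lies in how the disparity is passed on.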

Yes! I tried that after posting this, and I am actually able to calculate a pretty good depth by doing the matrix multiplication Q * [x, y, d, 1]^T and then dividing all results by W, as suggested here

So what is wrong with the cv2.reprojectImageTo3D() function?

Thank you.

UP! Does no one know the answer to my question? Why does the reprojectImageTo3D() function not return correct {x, y, z} coordinates?
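A minimal sketch of that manual computation, in plain Python. It assumes Q has the layout documented for cv2.stereoRectify() on a horizontal stereo pair; the helper names `make_q` and `reproject_pixel` and all numeric values are hypothetical, not the poster's actual calibration.

```python
def make_q(f, cx, cy, tx, cx_right=None):
    """Build a Q matrix in the layout assumed here for a horizontal stereo pair.

    f        -- focal length in pixels
    cx, cy   -- principal point of the left camera
    tx       -- x component of the translation between cameras
                (typically negative, in the calibration unit)
    cx_right -- principal point x of the right camera (defaults to cx)
    """
    if cx_right is None:
        cx_right = cx
    return [
        [1.0, 0.0, 0.0, -cx],
        [0.0, 1.0, 0.0, -cy],
        [0.0, 0.0, 0.0, f],
        [0.0, 0.0, -1.0 / tx, (cx - cx_right) / tx],
    ]


def reproject_pixel(Q, x, y, d):
    """Compute Q * [x, y, d, 1]^T and divide X, Y, Z by W."""
    v = [x, y, d, 1.0]
    X, Y, Z, W = (sum(q * c for q, c in zip(row, v)) for row in Q)
    if W == 0:
        raise ValueError("W is zero: the point is at infinity")
    return X / W, Y / W, Z / W


# Hypothetical calibration: f = 700 px, principal point (320, 240), Tx = -6 cm
Q = make_q(700.0, 320.0, 240.0, -6.0)
X, Y, Z = reproject_pixel(Q, 334.0, 240.0, 84.0)
print(X, Y, Z)
```

Here `d` must be the true disparity in pixels. The sign of Z depends on the sign of Tx in Q, so a negative depth can also point to a sign or ordering issue in the calibration.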

The units returned by reprojectImageTo3D() are the units used in the calibration process. You can find a full example in C++ here

Yes, I know that, and that is exactly where the problem is. If I do the matrix calculation by hand (with the actual values of the Q matrix and the value from the disparity map), I get good results, but the reprojectImageTo3D() function does not.
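One thing that may be worth checking, although nothing in this thread confirms it is the cause here: StereoSGBM's compute() returns disparities as 16-bit fixed-point values scaled by 16, and reprojectImageTo3D() works on true disparities, so the raw map usually has to be converted to float and divided by 16 first. The sketch below uses hypothetical numbers and a hypothetical helper name (`depth_from_raw_sgbm`) to show how much the scale factor alone changes the depth. It does not by itself reproduce the -0.6 reported above.

```python
DISP_SCALE = 16  # fixed-point scale of StereoSGBM.compute() output (4 fractional bits)


def depth_from_raw_sgbm(raw_disparity, focal_px, baseline, scale=DISP_SCALE):
    """Convert a raw fixed-point disparity to pixels, then apply Z = f * B / d."""
    disparity_px = raw_disparity / scale
    if disparity_px <= 0:
        raise ValueError("disparity must be positive to give a finite depth")
    return focal_px * baseline / disparity_px


# Hypothetical raw value: a true disparity of 84 px is stored as 84 * 16 = 1344
raw = 1344

# Correct: undo the fixed-point scaling first
depth_scaled = depth_from_raw_sgbm(raw, 700.0, 6.0)

# Incorrect: treat the raw value as if it were already in pixels
depth_unscaled = depth_from_raw_sgbm(raw, 700.0, 6.0, scale=1)

print(depth_scaled, depth_unscaled)
```

With NumPy, the equivalent conversion on a whole map would be something like `disparity.astype(np.float32) / 16.0` before calling reprojectImageTo3D(). Any normalization applied to the disparity map for display should also be kept out of the array passed to the function.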