Is it possible to calculate a point's 3D X and Y coordinates by inverting the camera matrix?

I have read camera calibration explained.

I was able to do it with and, just to test it, I used this, and everything is working perfectly.

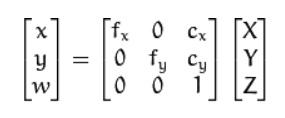

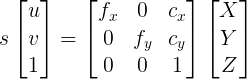

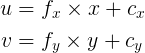

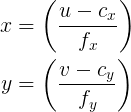

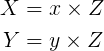

I am just curious: given the equation below, and assuming I know the Z of a 3D point and its x, y pixel coordinates in the image, would I be able to recover the X and Y coordinates of the 3D point?

In other words, if I multiply both sides of the equation by the inverse of the camera matrix, and assuming I know Z = w for the 3D point, can I then get the X and Y of the point in 3D space?
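Written out, the idea would look roughly like this. It is only a sketch under assumptions: I am taking the equation below to be the usual pinhole model, with the point expressed in the camera frame (rotation equal to the identity, translation zero), no skew and no lens distortion. The symbols $K$, $f_x$, $f_y$, $c_x$, $c_y$ are the standard intrinsics notation and are not taken from the image itself.

$$
w \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}
= K \begin{bmatrix} X \\ Y \\ Z \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
$$

The third row gives $w = Z$, so multiplying both sides by $K^{-1}$ would give

$$
\begin{bmatrix} X \\ Y \\ Z \end{bmatrix}
= Z \, K^{-1} \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}
\quad\Longrightarrow\quad
X = \frac{(x - c_x)\, Z}{f_x},
\qquad
Y = \frac{(y - c_y)\, Z}{f_y}
$$

If Z is instead a world coordinate and the camera has a non-trivial rotation and translation, then $w$ is no longer equal to $Z$ and the extrinsics would have to be undone as well.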

![image description](https://docs.opencv.org/2.4/_images/m...)
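To make the question concrete, here is a minimal Python sketch of what I mean by inverting the camera matrix with a known Z. It assumes a pinhole camera with no skew and no distortion, and that Z is the depth in the camera frame (so w = Z). The function names `backproject` and `project` and all the numbers are hypothetical, chosen only for illustration; for an upper-triangular camera matrix, multiplying by its inverse reduces to the two divisions shown.

```python
def backproject(u, v, Z, fx, fy, cx, cy):
    """Recover camera-frame (X, Y) from pixel (u, v) and a known depth Z.

    Assumes a pinhole camera with no skew and no lens distortion, and
    that Z is measured in the camera frame (identity rotation, zero
    translation), so the homogeneous scale w equals Z.
    """
    # K^-1 * [u, v, 1]^T gives the normalized ray (x_n, y_n, 1).
    x_n = (u - cx) / fx
    y_n = (v - cy) / fy
    # Scaling that ray by the known depth lands on the 3D point.
    return x_n * Z, y_n * Z


def project(X, Y, Z, fx, fy, cx, cy):
    """Forward pinhole projection, used here only as a round-trip check."""
    return fx * X / Z + cx, fy * Y / Z + cy


# Hypothetical intrinsics, pixel, and depth (illustration only).
fx, fy, cx, cy = 800.0, 800.0, 320.0, 240.0
X, Y = backproject(400.0, 300.0, 2.0, fx, fy, cx, cy)
print(X, Y)

# Projecting the recovered point should give back the original pixel.
print(project(X, Y, 2.0, fx, fy, cx, cy))
```

If the pixel coordinates come from a distorted image, they would presumably need to be undistorted first, since this sketch ignores the distortion coefficients entirely.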

Any comments are much appreciated.