Calculate robot coordinates from measured chessbaord corners ( hand-eye calibration)

Hello guys,

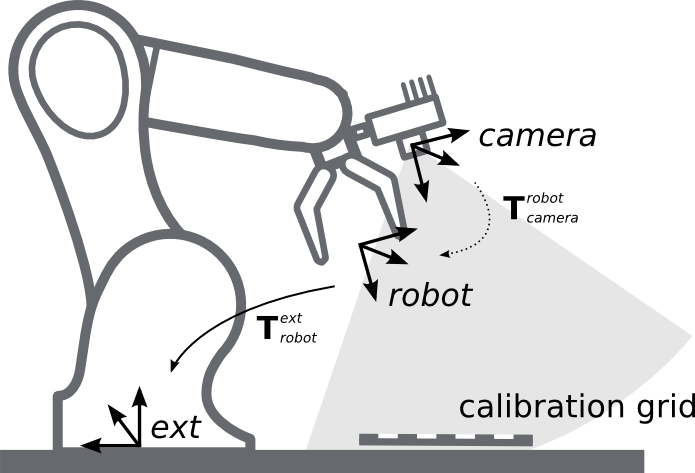

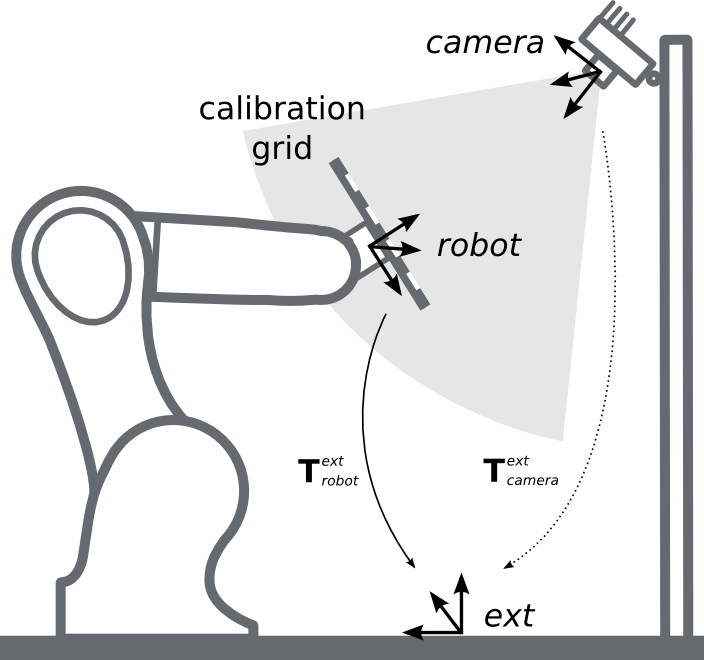

for my project I am trying to calibrate my depth camera with my robot-arm (hand-eye calibration). Therefore, I measured 8 corners of the cheassboard and got 8 pixel vectors and their corresponding robot 3D coordinates (using the gripper to point exactly at the corner). Then, I tried to calculate the transformation matrix. But the result is way off. Where's the mistake? Thank you very much!

Edit: Added the camera matrix

img_pts = np.array(((237., 325.),

(242., 245.),

(248., 164.),

(318., 330.),

(322., 250.),

(328., 170.),

(398., 336.),

(403., 255.)), dtype=np.float32)

objectPoints = np.array(((-437., -491., -259.),

(-402., -457., -259.),

(-360., -421., -259.),

(-393., -534., -259.),

(-362., -504., -259.),

(-332., -476., -259.),

(-298., -511., -259.),

(-334., -546., -259.)), dtype=np.float32)

camera_matrix = np.array(((542.5860286838929, 0., 310.4749867256299),

(0., 544.2396851407029, 246.7577402755397),

(0., 0., 1.)), dtype=np.float32)

distortion = np.array([0.03056762860315778,

-0.1824835906443329,

-0.0007936359893356936,

-0.001735795279032343,

0])

ret, rvec, tvec = cv2.solvePnP(objectPoints, img_pts, camera_matrix, distortion)

rmtx, _ = cv2.Rodrigues(rvec)

T_gripper_cam = np.array([[rmtx[0][0], rmtx[0][1], rmtx[0][2], tvec[0]],

[rmtx[1][0], rmtx[1][1], rmtx[1][2], tvec[1]],

[rmtx[2][0], rmtx[2][1], rmtx[2][2], tvec[2]],

[0.0, 0.0, 0.0, 1.0]])

T_cam_gripper = np.linalg.inv(T_gripper_cam)

print(T_cam_gripper)

p = np.array((237., 325., 0.), dtype=np.float32)

p_new = np.array([p[0], p[1], p[2], 1])

objectPoint = np.dot(p_new, T_gripper_cam)[:3]

print(objectPoint)

# should be (-437., -491., -259.)

# --> actual = [ 33.57 -395.62 -64.46]

------------------------------------- Edit ---------------------------------------------------------- I think I might be on to something. According to this: Stackoverflow

I have to calculate the scaling factor. So i tried to do this in python:

uv = np.array(([237.], [325.], [1.]), dtype=np.float32)

rotInv = np.linalg.inv(rmtx)

camMatInv = np.linalg.inv(camera_matrix)

leftSideMat = np.dot(rotInv, np.dot(camMatInv, uv))

rightSideMat = np.dot(rotInv, tvec)

s = (295 + leftSideMat[2:] / rightSideMat[2:])

temp = (s * np.dot(camMatInv, uv))

tempSub = np.array(temp - tvec)

print(np.dot(rotInv, tempSub))

# should be (-437., -491., -259.)

# --> actual = [ 437.2 -501.3 -266.2]

Looks like I am pretty close. But its still way off in the Y direction. Is this the correct approach? Thank you!

might not change much (and we cannot reproduce w/o your intrinsics Mat) but imho, it shouild be:

(homogeneous 2d coords, see formulas here)

Thank you first of all! I think that's what i did in:

p should just be a pixel vector but i added the zero to multiply it with my transformation matrix.