This forum is disabled, please visit https://forum.opencv.org

| 1 | initial version |

You should add a figure of your setup to better let other people understand what you want to do. If I have understood correctly, you are doing the following:

img_pts)objectPoints)solvePnPThis looks not correct for hand-eye calibration:

solvePnP should be used to compute the transformation between the camera frame and the object frameobjectPoints are expressed in some reference frameimg_pts, the camera has movedThis topic is well covered in the literature:

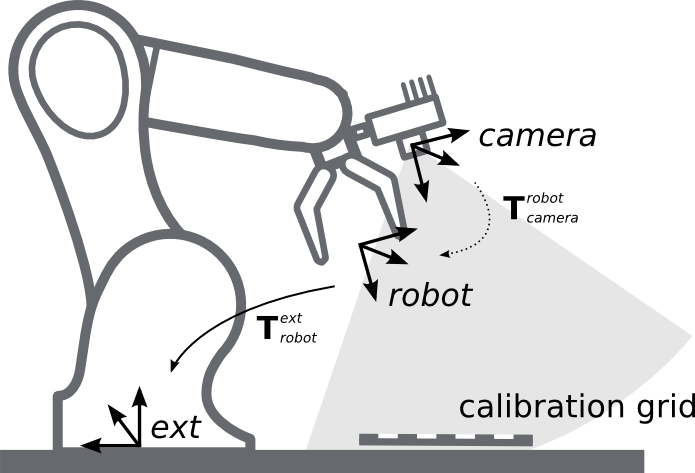

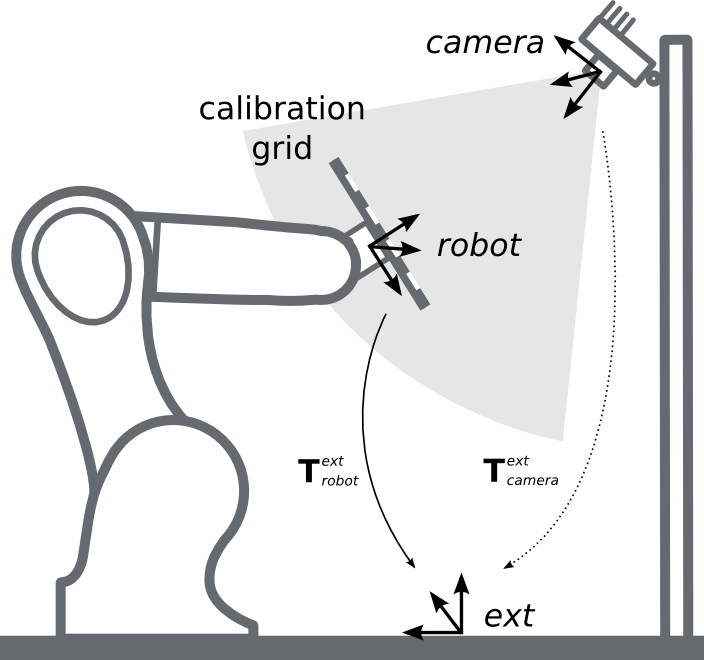

Normally the setup should be:

T_robot_camera

T_ext_camera

In the first case, the calibration procedure is:

findChessboardCorners() + solvePnP())Then, you can estimate the transformation between the end-effector frame and the camera frame using a standard hand-eye calibration method (e.g. Tsai method).

You should be able to find some code that implements the Tsai method or some similar methods, for instance maybe this one: ROS + CamOdoCal Hand Eye Calibration.

My advice is to use ViSP to do the Tsai calibration since I have already used this library: