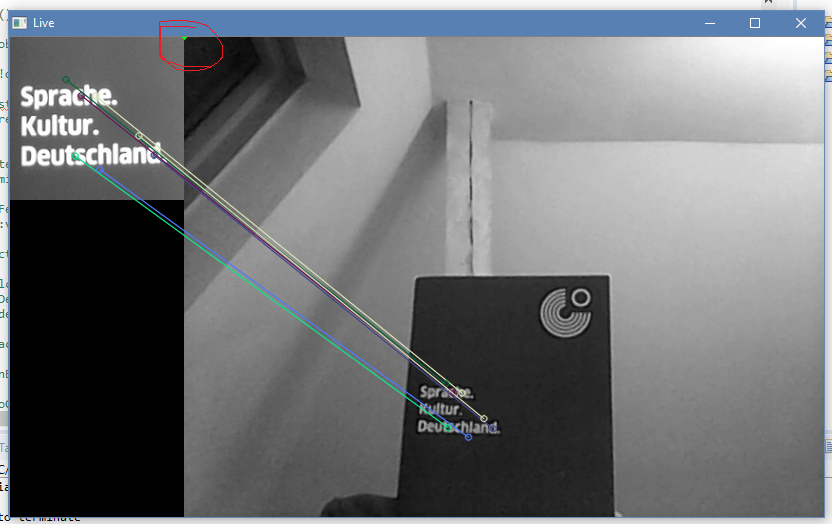

bounding box around the detected Object in real time video

link:[followed the same code] (https://docs.opencv.org/2.4/doc/tutor...)

During real time video, the bounding box is becoming a point at the joining of the montage as marked in red shape, even though the matches are good.

" even though the matches are good." -- however, the homography is bad, as you can see.

it only found 6 matching points, that's probably not enough (not enough texture in your object image ?)

also: be careful there, H might be empty(), if it could nout build a homography at all, you have to check, before proceeding !

btw, it does not detect "objects" at all, only keypoints

H is not empty. With more texture, obviously the number of key points increased, now the

good_matches.size() is varying between 40-60 with a few outliers. But still there is no bouding box around the keypoints of the object in real time. It is still at the corner as depicted in the above figure and sometimes with weird polygon shapes out of the bounds of keypoints. what could be the possible reason? Is there any minimum limit to number of matching points?all i'm saying is, that H can be empty, and your program will crash then, so check.

also, the boundingbox is not around the keypoints (it does not know about any kp), it's only the original rect, projected by H

"minimum limit " -- something like 5, iirc. below, it won't find any H

the quality of your matches unfortunaly does not say anything about how good/bad H will be

thank you :) I will check.

@berak link: same link as above does this code detects objects in real-time video? or In real-time, are we supposed to make a tracker object to successfully detect and track the object?

"does this code detects objects" -- NO. NEVER.

it detects keypoints, that's all it ever does. don't be mislead.

IF you're lucky to have enough good matches (you arent, above), you may try to find the homography from the matches, and try to project the initial boundingbox with that, to find a new position of "some part of the scene". but that's not "object detection" at all. it does not know about any "objects", only "there's 1 small part of the original scene", and "another image of the whole scene" (which might be scaled, rotated)

you have to strike out the word "object" anywhere in this context, it's only misleading.