Stereosystem triangulate points

Hi guys,

currently I am working on a program that takes 3d coordinates from non rectified stereo images with triangulate. First I give you a short insight into my program.

I tried it on this way (OpenCV, C++):

1.

Calibrate the cameras with several chessboard images from various positions. I create the 3D points of my chessboard in its own coordinate system as follow

vector<Point3f> obj;

for (int j = 0; j<sChess.board_n; j++)

{

obj.push_back(Point3f(0.02377*(j % sChess.board_w), 0.02377*(j / sChess.board_w), 0));

}

2.

To get the camera intrinsic I use stereoCalibrate and the extrinsic I calculate with solvePnP. So i get the rotation and translation vector of each camera.

double rms3 = stereoCalibrate(xObjektPoints, xImageCam1, xImageCam2,

intrinsic, distCoeffs[0],

intrinsic2, distCoeffs[1],

Size(3000, 4000), R, T, E, F,

TermCriteria(CV_TERMCRIT_ITER + CV_TERMCRIT_EPS, 100, 1e-5),

CV_CALIB_USE_INTRINSIC_GUESS + CV_CALIB_ZERO_TANGENT_DIST

);

for (int i=0; i<xObjektPoints.size(); i++)

{

solvePnP(xObjektPoints[i], xImageCam1[i], intrinsic, distCoeffs[0], rVec1, tVec1);

solvePnP(xObjektPoints[i], xImageCam2[i], intrinsic2, distCoeffs[1], rVec2, tVec2);

}

3.

Next I create two Projection Matrices. I convert the rotation vector with Rodrigues to a rotation matrix and chain the rotation matrix with the translation vector to a 3x4 matrix. Last but not least I multiply both 3x4 matrices with the respective camera matrix (intrinsic).

Rodrigues(rVec1, mRot1); // mRot1 is 3x3

Rodrigues(rVec2, mRot2); // mRot2 is 3x3

Mat rt_a, rt_b;

hconcat(mRot1, tVec1, rt_a);

hconcat(mRot2, tVec2, rt_b);

Mat mProjection1, mProjection2;

mProjection1 = intrinsic * rt_a;

mProjection2 = intrinsic2* rt_b;

4.

As last step I triangulate the images points

double ImagePoints1[3] = { points1[i].x, points1[i].y, 1 };

double ImagePoints2[3] = { points2[i].x, points2[i].y, 1 };

Mat mPoint1 = Mat(3, 1, CV_64FC1, ImagePoints1);

Mat mPoint2 = Mat(3, 1, CV_64FC1, ImagePoints2);

Mat test = triangulate_Linear_LS(mP1, mP2, mPoint1, mPoint2);

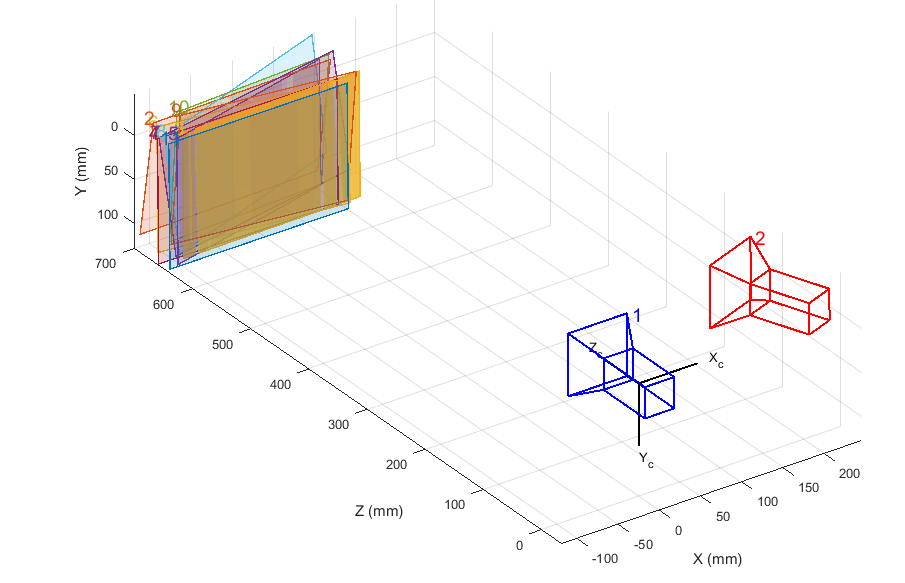

Thats the Stereo-System, Image is from Matlab

Now my question:

To test the program I use the first chessboard images from the stereosystem, because I know all 3D coordinates (object points from 1), find the corners and triangulate them.

My results look good because the calculated 3D points are the same as the object points from 1

The Z-Value is near to 0, I tkink the chessboard is probably the origin. But I want that the z value reflects the distance from the stereo camera system to object for example the first corner top left X: 0 ; Y: 0 ; Z: 64.

Do you know what I mean?

I hope someone can help me....thanks.