@diegomez_86, as I commented, you were not applying any pre- or post-processing, which would make your life easier. For example, take the following code:

    #include <cstdlib>
    #include <iostream>
    #include <opencv2/opencv.hpp>

    using namespace std;
    using namespace cv;

    int main()
    {
        // load image
        Mat src = imread("d.jpg");

        // check that it is loaded correctly
        if (src.empty())
        {
            cerr << "Problem loading image!!!" << endl;
            return EXIT_FAILURE;
        }
        imshow("src", src);

        // transform to grayscale
        Mat gray;
        cvtColor(src, gray, COLOR_BGR2GRAY);
        // imshow("gray", gray);

        // get the binary version (fixed threshold of 50; Otsu is left disabled)
        Mat bin;
        threshold(gray, bin, 50, 255, THRESH_BINARY /* | THRESH_OTSU */);
        imshow("bin", bin);

        // invert the binary image, then thicken the white regions with a 3x3 dilation
        Mat kernel = Mat::ones(3, 3, CV_8UC1);
        dilate(~bin, bin, kernel);
        imshow("dilate", bin);

        waitKey();
        return 0;
    }
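To see what the threshold, inversion and dilation steps in the code above do at the pixel level, here is a minimal sketch that does not use OpenCV. The `Image` alias and the helpers `thresholdBinary`, `invert` and `dilate3x3` are hypothetical names made up for this illustration. They only mimic the behaviour of `threshold` with `THRESH_BINARY`, `~` and `dilate` with a 3x3 kernel of ones on a small grid, and border handling is simplified by skipping neighbours that fall outside the image.

```cpp
#include <algorithm>
#include <vector>

// An 8-bit grayscale image stored as rows of pixel values (0..255).
// Hypothetical type, used only for this illustration.
using Image = std::vector<std::vector<unsigned char>>;

// Binary threshold: pixels strictly greater than `thresh` become `maxval`,
// all other pixels become 0.
Image thresholdBinary(const Image& src, unsigned char thresh, unsigned char maxval)
{
    Image dst = src;
    for (auto& row : dst)
        for (auto& p : row)
            p = (p > thresh) ? maxval : static_cast<unsigned char>(0);
    return dst;
}

// Bitwise inversion of an 8-bit image: 0 becomes 255 and 255 becomes 0.
Image invert(const Image& src)
{
    Image dst = src;
    for (auto& row : dst)
        for (auto& p : row)
            p = static_cast<unsigned char>(255 - p);
    return dst;
}

// 3x3 dilation: every output pixel is the maximum of its 3x3 neighbourhood.
// Neighbours outside the image are skipped (a simplification).
Image dilate3x3(const Image& src)
{
    const int rows = static_cast<int>(src.size());
    const int cols = rows > 0 ? static_cast<int>(src[0].size()) : 0;
    Image dst = src;
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            unsigned char best = 0;
            for (int dr = -1; dr <= 1; ++dr) {
                for (int dc = -1; dc <= 1; ++dc) {
                    const int rr = r + dr;
                    const int cc = c + dc;
                    if (rr < 0 || rr >= rows || cc < 0 || cc >= cols)
                        continue;
                    best = std::max(best, src[rr][cc]);
                }
            }
            dst[r][c] = best;
        }
    }
    return dst;
}
```

Calling `dilate3x3(invert(thresholdBinary(gray, 50, 255)))` on a bright grid with a single dark pixel turns that pixel into a white 3x3 square on a black background, which is why thin dark features come out as thicker white ones.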

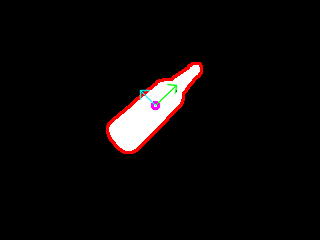

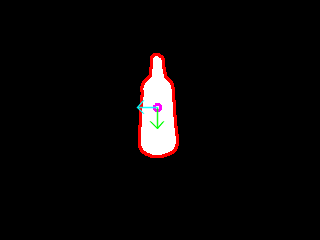

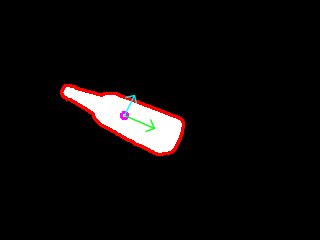

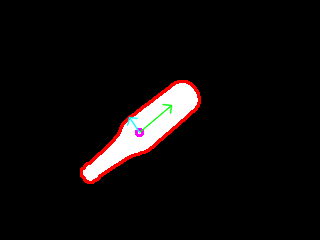

I managed to get the following results:

which would make easy to apply the code from the example that you are suggesting and you can also find it here with some more goodies. Which comes up with the following result:

I guess it would also help to get better lines from the probabilistic Hough transform (`HoughLinesP`), although I did not try that. Also, I do not know the parameters of your environment (e.g. illumination, shadows, camera angle and perspective) or your setup, so I cannot say how robust this solution would be under changing conditions. I think that is something you will have to find out for yourself.