| 1 | initial version |

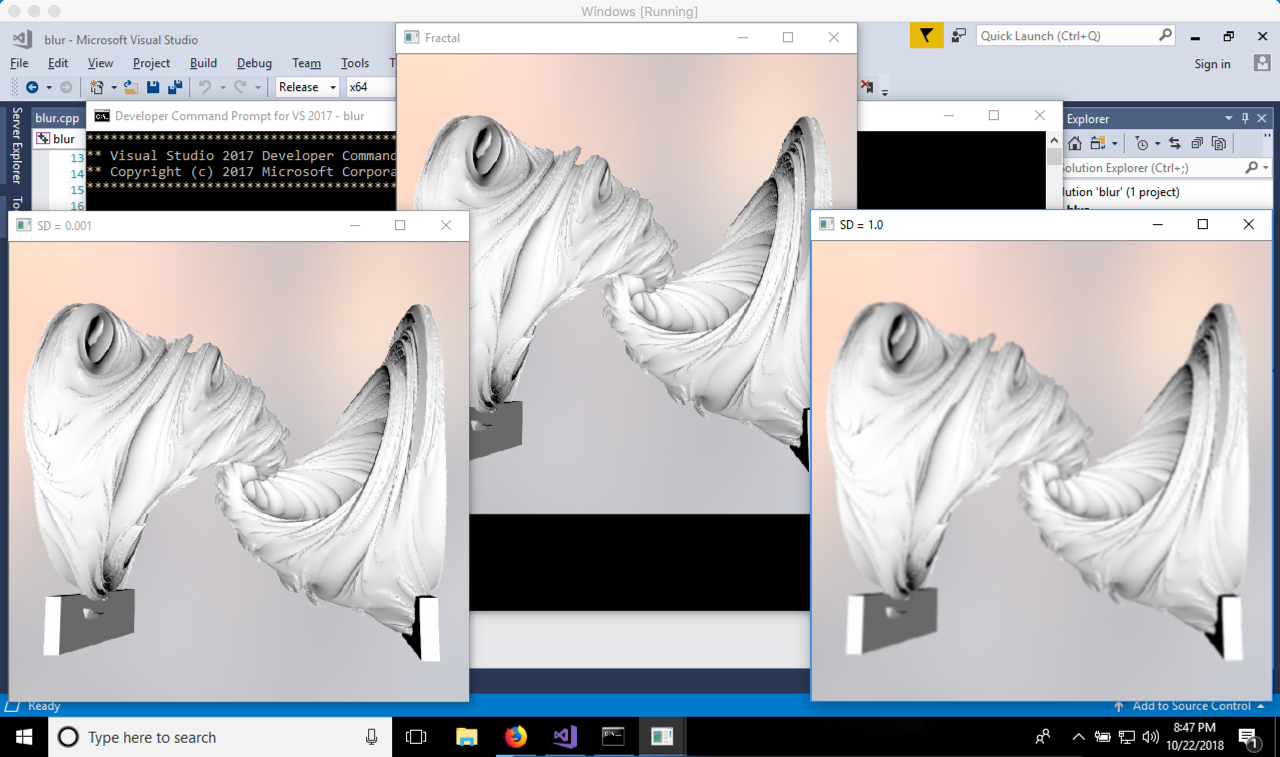

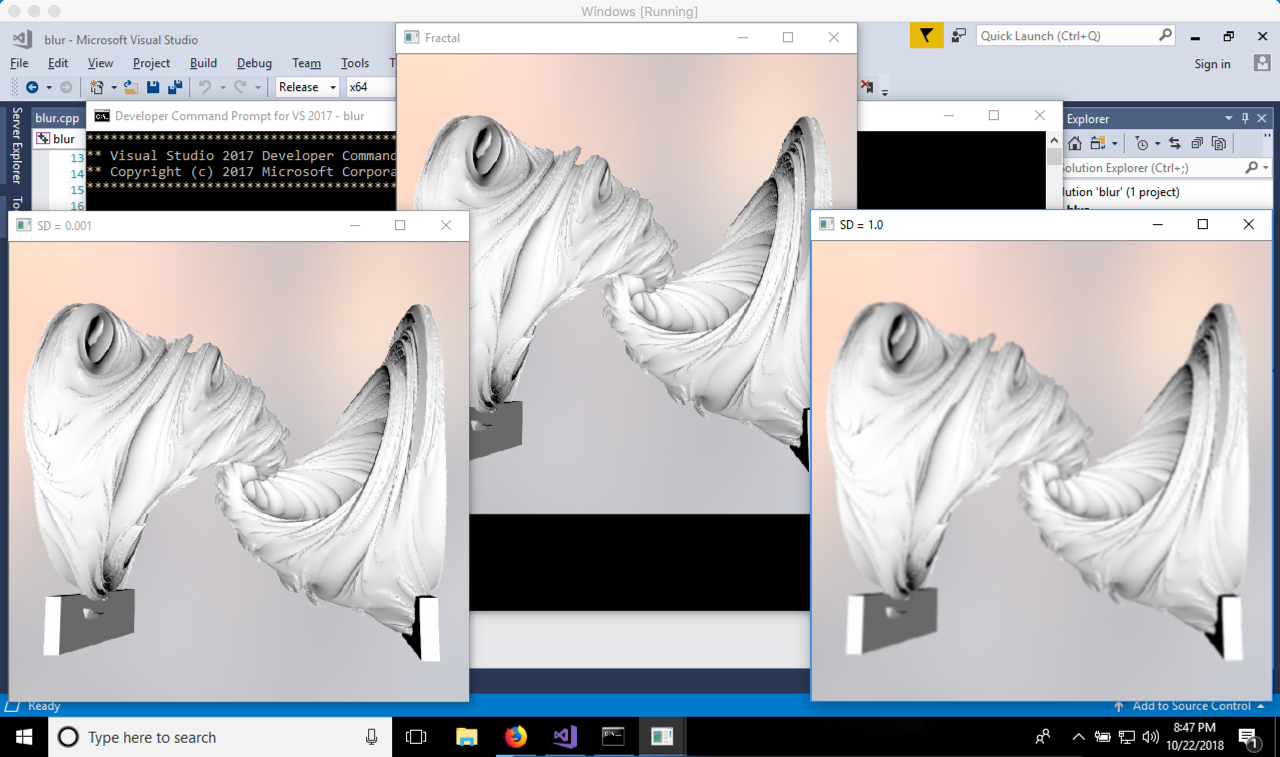

The following code, in C++, shows the difference between a standard deviation of 1.0 and 0.001.

GaussianBlur(image, blurred_2, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_1, Size(5, 5), 1.0, 1.0);

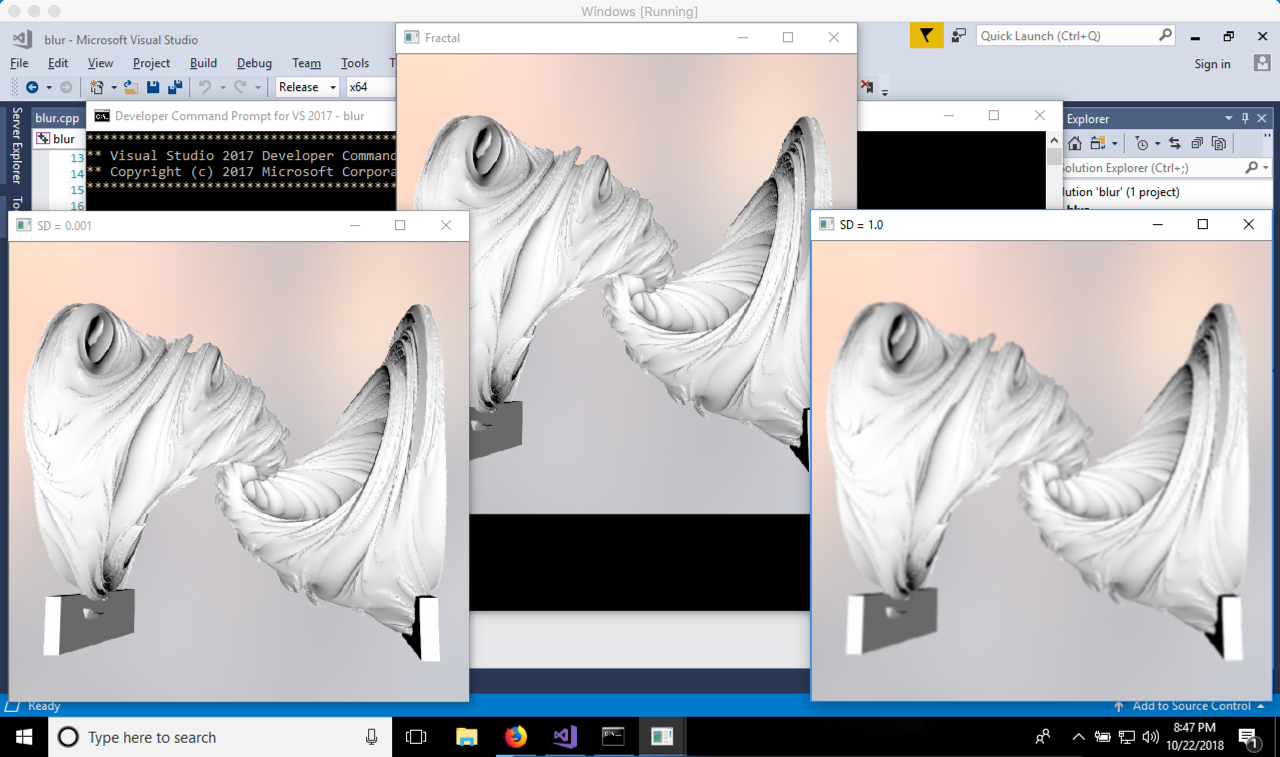

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable.

| 2 | No.2 Revision |

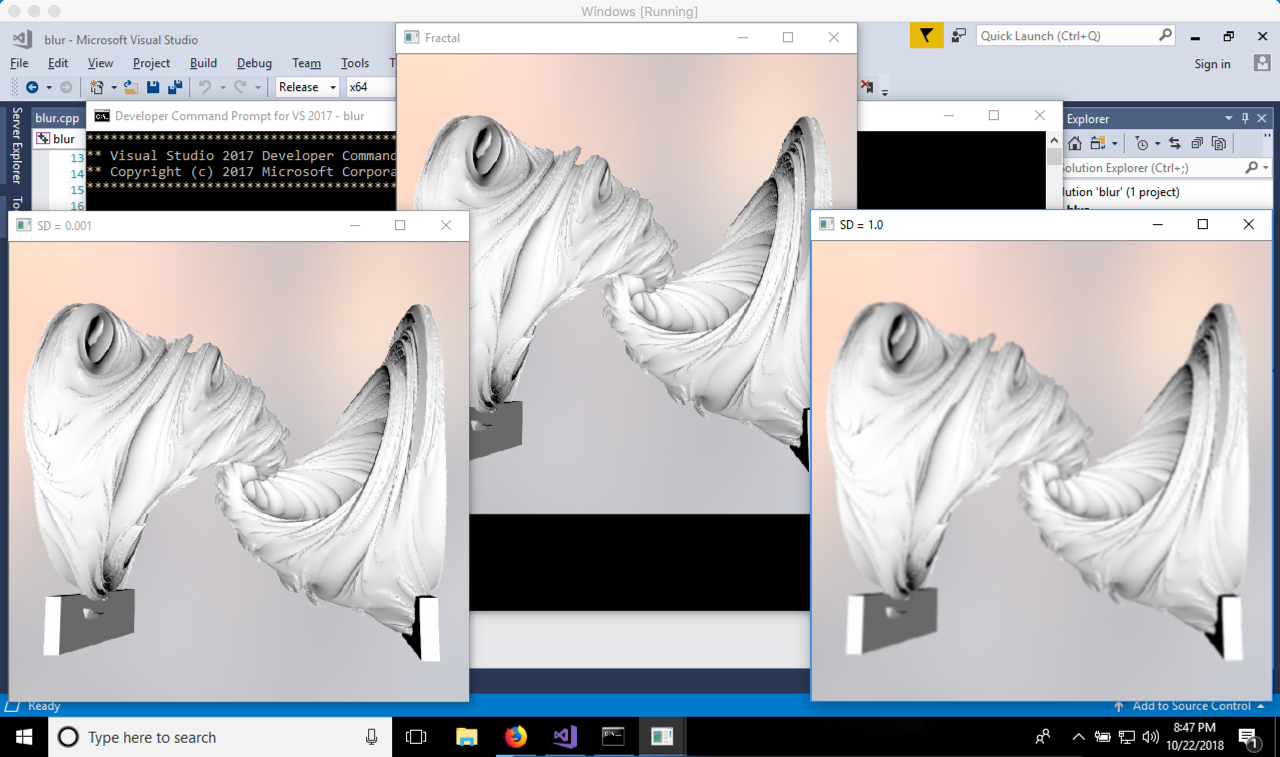

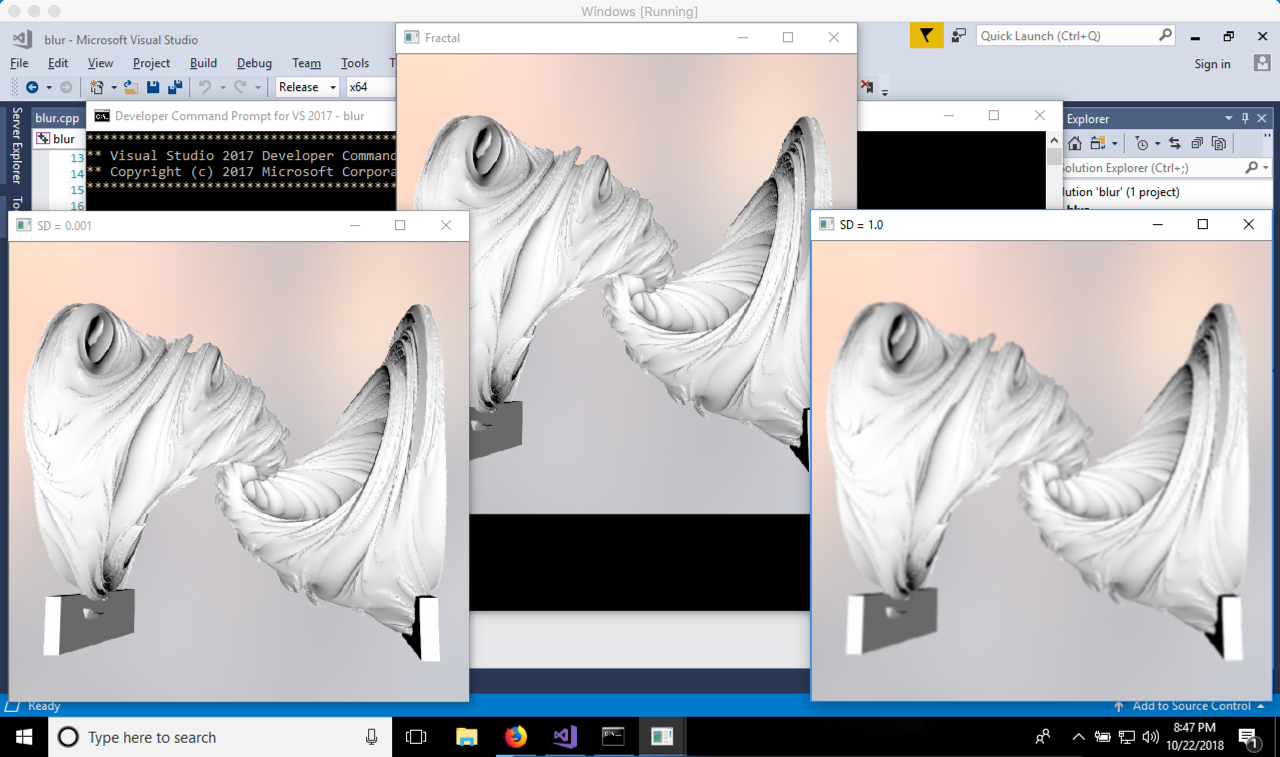

The following code, in C++, shows the difference between a standard deviation of 1.0 and 0.001.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable.

| 3 | No.3 Revision |

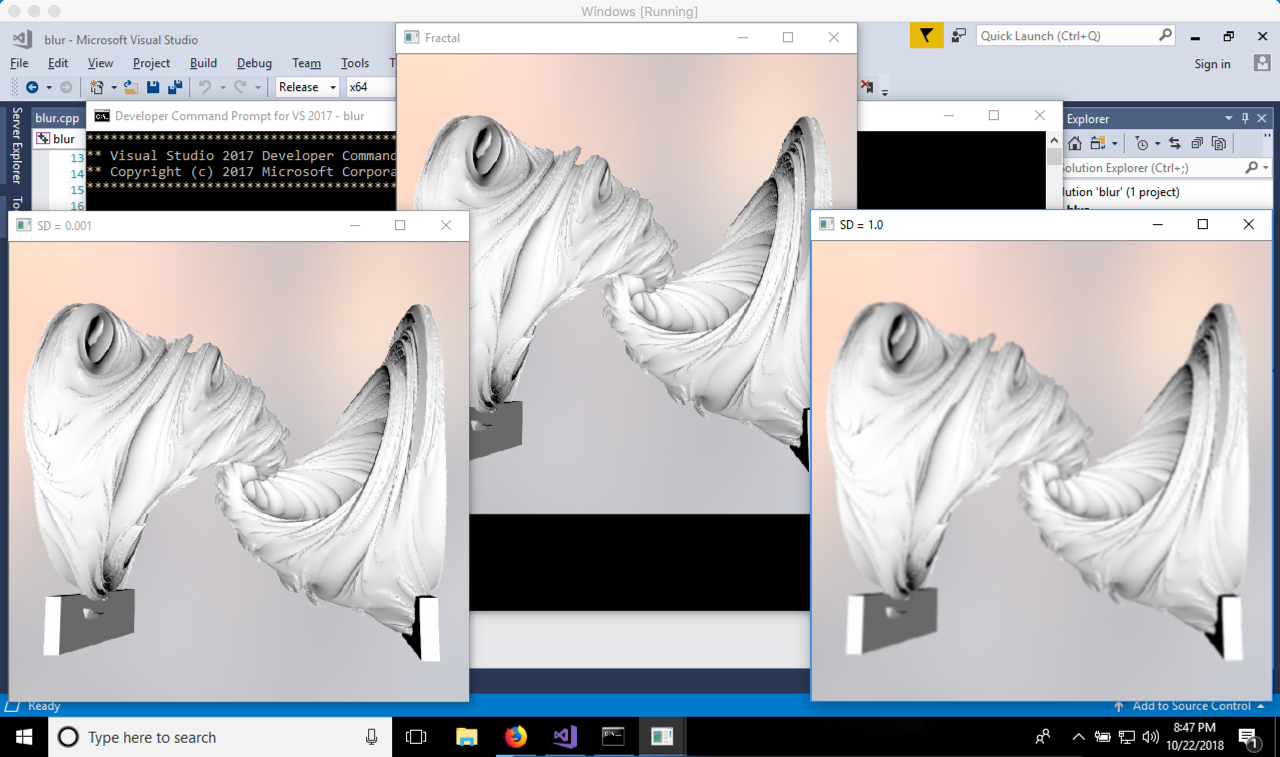

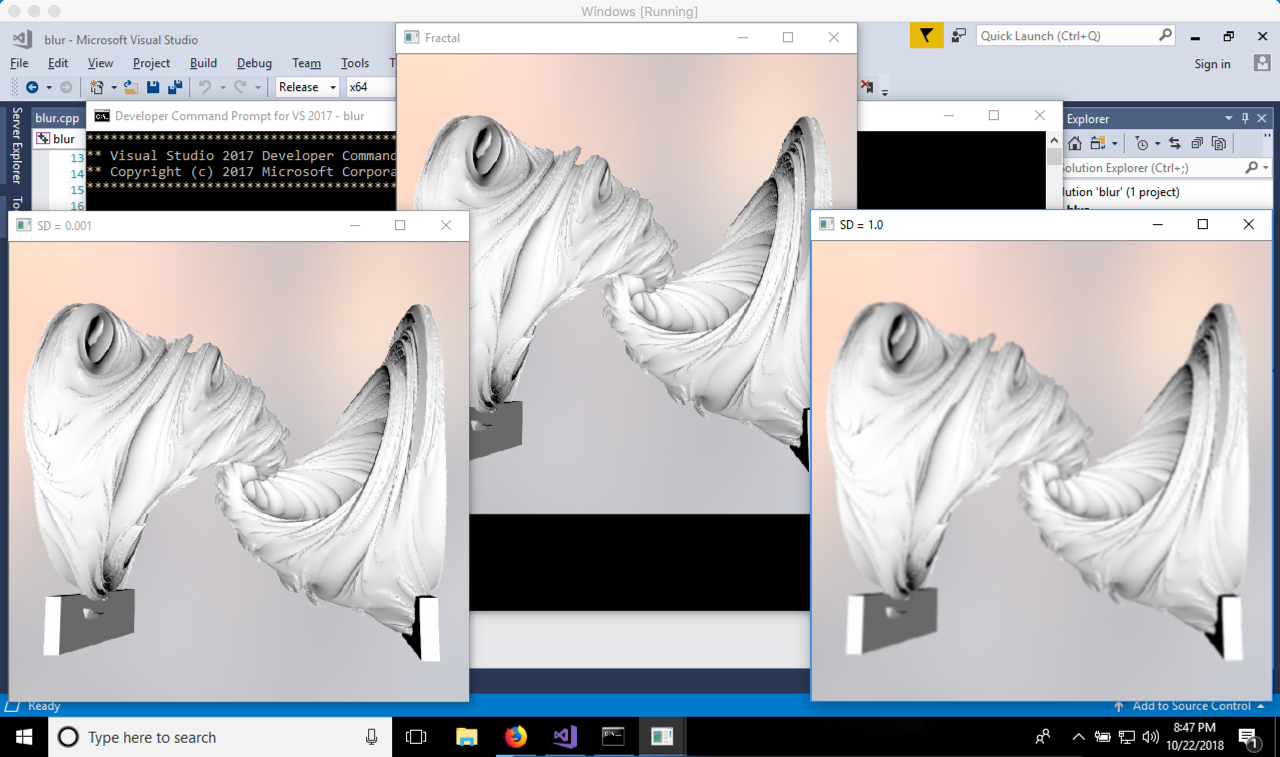

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable.

| 4 | No.4 Revision |

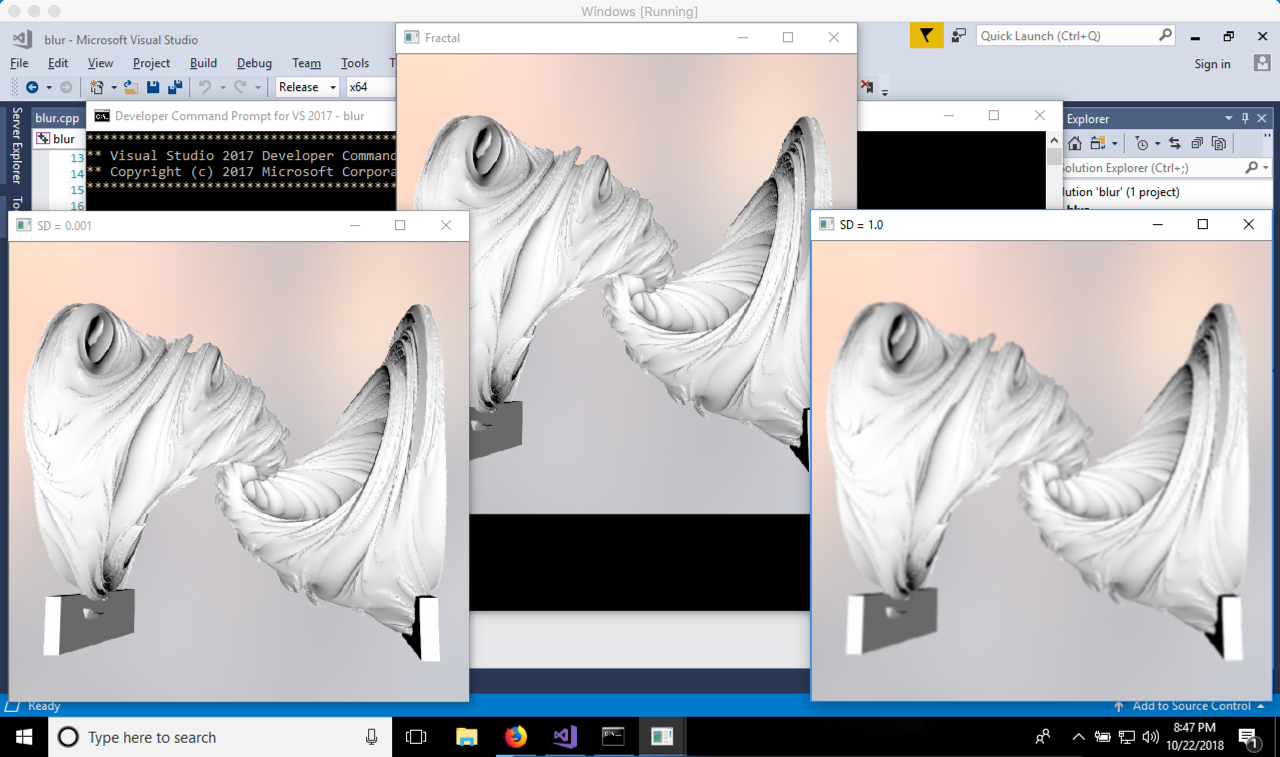

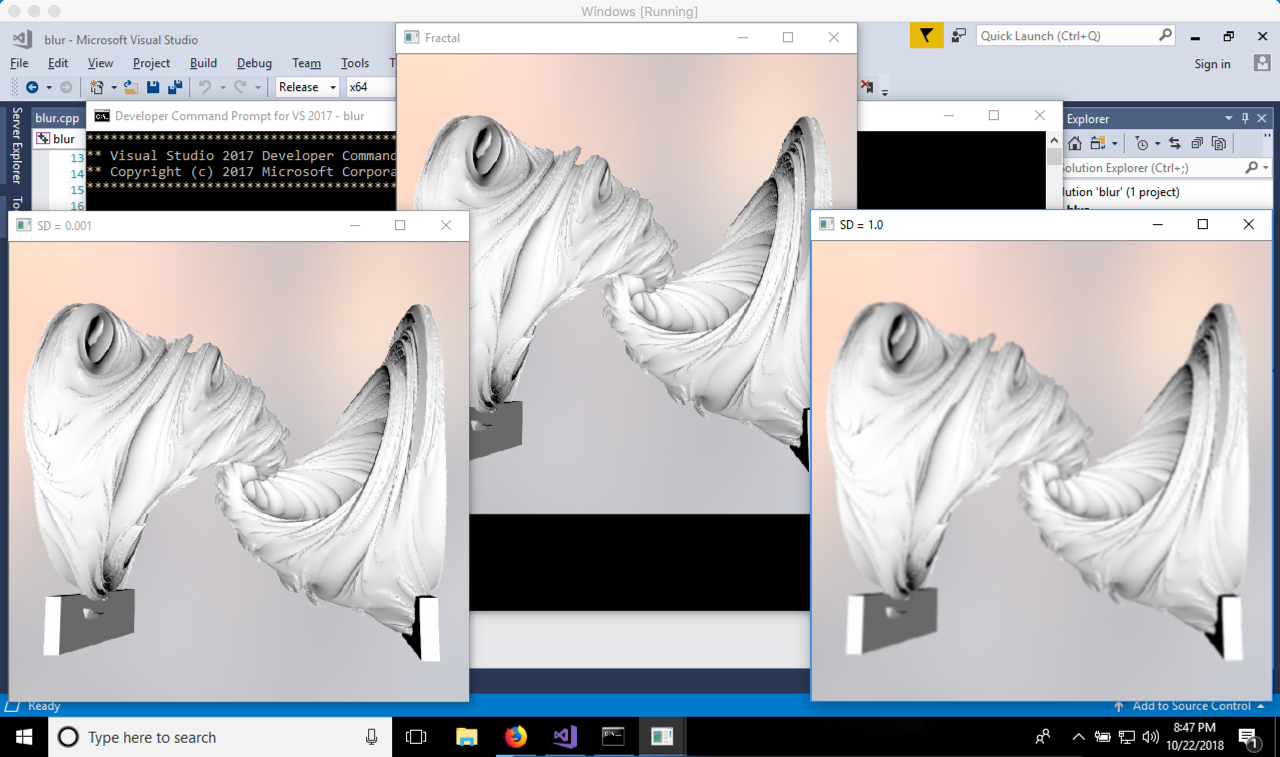

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so tall and narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable, because the hill is so short and wide.

| 5 | No.5 Revision |

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so tall and narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable, because the hill is so much shorter and wider.

| 6 | No.6 Revision |

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so tall and narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable, because the hill is so much shorter and wider.

For a very large standard deviation, the hill is practically flat, which makes for a blur based on the plain average of the pixel and its surrounding neighbours. So there's your limiting case.

| 7 | No.7 Revision |

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so tall and narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable, because the hill is so much shorter and wider. For a very large standard deviation, the hill is practically flat, which makes for a blur based on the plain average of the pixel and its surrounding neighbours. So there's your limiting case, which is reached as the standard deviation goes to infinity.

| 8 | No.8 Revision |

The following code, in C++, shows the difference between a standard deviation of 0.001 and 1.0.

GaussianBlur(image, blurred_1, Size(5, 5), 0.001, 0.001);

GaussianBlur(image, blurred_2, Size(5, 5), 1.0, 1.0);

Here is an image of the output. Notice that with a standard deviation of 0.001 there is practically no blur. This is because the hill is so tall and narrow that the only sample that counts is the one in the centre of the kernel, which leaves that pixel practically untouched. With a standard deviation of 1.0, the blur is noticeable, because the hill is so much shorter and wider. For a very large standard deviation, the hill is practically flat, which makes for a blur based on the plain average of the pixel and its surrounding neighbours (that is, the neighbours defined by the kernel size). So there's your limiting case, which is reached as the standard deviation goes to infinity.
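
To make the "hill" concrete, here is a rough sketch in plain C++ (no OpenCV needed) that samples a Gaussian at the five positions of a 5-wide kernel and normalises the weights so they sum to 1. The helper name `gaussianKernel1D` is made up for this illustration, and the sketch assumes the weights are simply exp(-x^2 / (2 * sigma^2)) divided by their sum; it is not meant to reproduce what `GaussianBlur` does internally.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical helper for illustration only (not an OpenCV function):
// builds a normalised 1D Gaussian kernel of odd length ksize for a
// given standard deviation sigma.
std::vector<double> gaussianKernel1D(int ksize, double sigma)
{
    // Assumption: the kernel length is odd and sigma is strictly positive.
    assert(ksize > 0 && ksize % 2 == 1 && sigma > 0.0);

    std::vector<double> kernel(ksize);
    const int centre = ksize / 2;
    double sum = 0.0;

    // Sample the unnormalised Gaussian at integer offsets from the centre.
    for (int i = 0; i < ksize; ++i) {
        const double x = static_cast<double>(i - centre);
        kernel[i] = std::exp(-(x * x) / (2.0 * sigma * sigma));
        sum += kernel[i];
    }

    // Normalise so the weights sum to 1 and overall brightness is preserved.
    for (double& w : kernel) {
        w /= sum;
    }
    return kernel;
}
```

Under those assumptions, `gaussianKernel1D(5, 0.001)` puts essentially all of the weight on the centre sample (no visible blur), `gaussianKernel1D(5, 1.0)` gives the centre a weight of roughly 0.40 with the rest spread over the neighbours, and `gaussianKernel1D(5, 1e6)` gives each of the five samples a weight of roughly 0.2, which is the plain-average limiting case.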