|

2019-11-13 06:23:07 -0600

| received badge | ● Famous Question


|

|

2017-12-11 05:39:36 -0600

| received badge | ● Notable Question


|

|

2017-04-17 19:31:54 -0600

| received badge | ● Popular Question


|

|

2016-09-30 07:09:05 -0600

| commented question | Running Clandmark webcam example I have tried a lot, and I'm stuck getting it to run. After compiling, this is the usage command: `/clandmark/examples/examples$ ./video_input ./ cam 0 output_file.avi`

It prints `cannot open file cam` and I keep getting errors. After all, not everyone is an expert like you.

Thanks |

|

2016-01-07 08:39:03 -0600

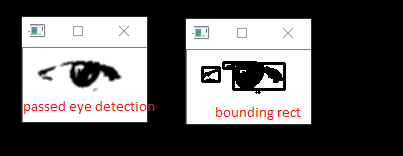

| commented answer | bounding rect around detected eye region How can the width and height of `_boundingRect` be changed?

I tried changing the values of `Rect eyeRect( 5, 5, mSource_Bgr.cols - 10, mSource_Bgr.rows -10 );`

but the result was not very different. |

|

2016-01-07 06:01:36 -0600

| commented answer | bounding rect around detected eye region @sturkmen thanks very much for the great answer. I will try it and update you. Thanks again |

|

2016-01-06 03:50:02 -0600

| asked a question | bounding rect around detected eye region Hi, I'm trying to do the following:

1. Detect the face and then the eyes.
2. Threshold the detected eye region and apply `morphologyEx` (open) to it.
3. Pass the result of the opening to a function that bounds the eye region with a rectangle, like in this image.

Using the function below, I'm not getting this result. How can this be done? Thanks for the help.

```cpp
#include <opencv2/opencv.hpp>
#include <vector>

using namespace cv;

void find_contour(Mat image)
{
    Mat gray_mat, canny_mat;
    Mat contour_mat = image.clone();
    Mat bounding_mat = image.clone();

    // Canny expects a single-channel 8-bit image: convert only if the input is colour
    if (image.channels() == 3)
        cvtColor(image, gray_mat, COLOR_BGR2GRAY);
    else
        gray_mat = image;

    // 1. apply Canny edge detection
    Canny(gray_mat, canny_mat, 30, 128, 3, false);

    // 2. find the outer contours on the edge image
    std::vector<std::vector<Point>> contours;
    findContours(canny_mat, contours, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);

    // 3. draw the contours on a copy of the source image
    drawContours(contour_mat, contours, -1, Scalar(0), 2);

    // 4. compute the bounding rectangle of each contour and draw it
    for (size_t i = 0; i < contours.size(); ++i)
    {
        Rect rect = boundingRect(contours[i]);
        rectangle(bounding_mat, rect, Scalar(255, 0, 0), 2);
    }

    imshow("Bounding ", bounding_mat);
}
```

|

|

2016-01-05 09:39:23 -0600

| commented answer | find contours in an image Thanks for the answer. I made some changes to the code in the tutorial (code), and the output was like this image. How can I remove the pink border around the image and the black pixels from the original image, so that only the borders are shown? |

|

2016-01-05 08:57:14 -0600

| asked a question | find contours in an image Hi, I want to detect the contours in the image below so that the output looks like the second image. Should I use Canny edge detection? How can I get this output? Thanks

|

|

2015-12-30 12:43:45 -0600

| commented answer | unhandled memory exception problem @sturkmen, thanks very much for your efforts, it works now. I have two questions:

1. What do the following values mean?

```cpp
const float EYE_SX = 0.10f;
const float EYE_SY = 0.19f;
const float EYE_SW = 0.40f;
const float EYE_SH = 0.36f;
```

2. How can I change the width of `searchedRightEye`, as in this image? It sometimes includes hair (in videos of women) or background when the head moves. |

|

2015-12-28 09:35:26 -0600

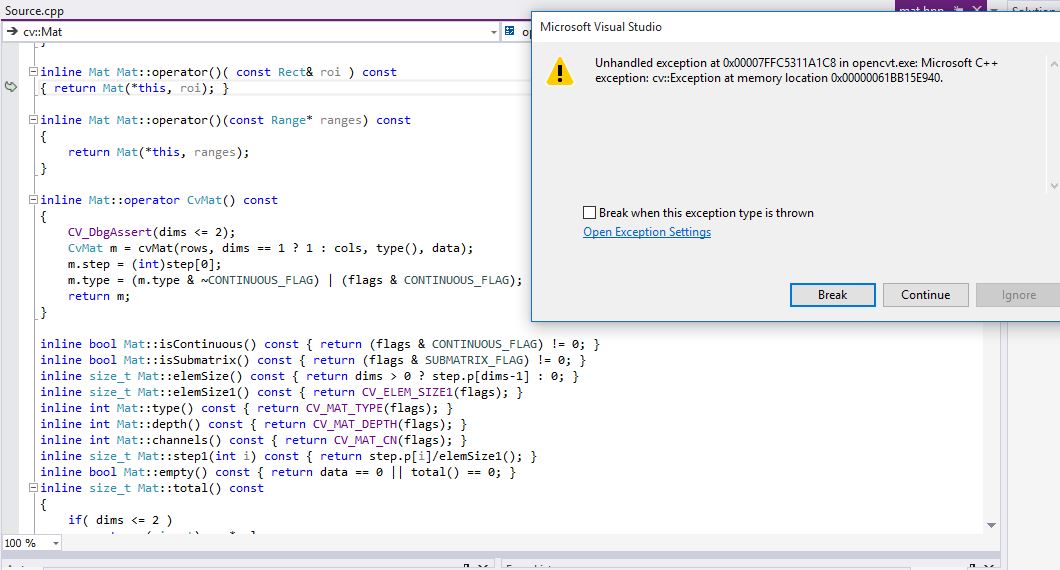

| commented question | (cv::Exception at memory location ) problem |

|

2015-12-28 08:14:42 -0600

| asked a question | (cv::Exception at memory location ) problem Hi, when I try to run face and eye detection on a video file, an unhandled exception occurs. I don't know why this is happening. Can anyone help me solve this? Thanks

|

|

2015-11-20 17:20:23 -0600

| asked a question | detecting the exact eye location I used a Haar cascade to detect the face, and then I extracted the eye region with an ROI, without using an eye cascade. The output is this image:

```cpp
for (;;)
{
    capture >> frame;
    if (frame.empty())   // end of the video or camera failure
        break;

    std::vector<Rect> faces;
    std::vector<Rect> eyes;
    Mat frame_gray;
    cvtColor(frame, frame_gray, COLOR_BGR2GRAY);
    equalizeHist(frame_gray, frame_gray);
    face_cascade.detectMultiScale(frame_gray, faces, 1.1, 4, CASCADE_SCALE_IMAGE, Size(20, 20));

    for (size_t i = 0; i < faces.size(); i++)   // iterate over all detected faces
    {
        // draw each detected face on the live stream window
        Point pt1(faces[i].x, faces[i].y);
        Point pt2(faces[i].x + faces[i].width, faces[i].y + faces[i].height);
        rectangle(frame, pt1, pt2, Scalar(0, 255, 0), 2, 8, 0);

        // ROI for the eyes: the second quarter of the face height
        Rect Roi = faces[i];
        Roi.height = Roi.height / 4;
        Roi.y = Roi.y + Roi.height;
        Mat eye_region = frame(Roi).clone();
        Mat eye_gray = frame_gray(Roi).clone();   // grayscale copy of the same band
        // ... (rest of the loop not shown)
    }
}
```

I want to detect only the eye location, like in this image or this image. How can I do that? |

|

2015-11-19 03:17:10 -0600

| received badge | ● Enthusiast

|

|

2015-11-18 17:02:34 -0600

| commented answer | frame difference correct method Sorry about that, I wanted to show you the code.

How can I make both the current frame and the previous frame grayscale and then calculate the difference? |

|

2015-11-18 12:22:00 -0600

| answered a question | frame difference correct method Using your code, I used cvtColor to convert the video frames to grayscale and calculate the frame difference. When I run it, it throws a cv::Exception at a memory location. What is the problem?

```cpp
#include <opencv2/opencv.hpp>
#include <iostream>

using namespace cv;

int main(void)
{
    Mat frameCurrent, frame_gray, framePrevGray, frameAbsDiff;
    VideoCapture cap("1.mp4");
    if (!cap.isOpened())
    {
        std::cout << "Cannot open 1.mp4" << std::endl;
        return 1;
    }

    cap >> frameCurrent;
    while (true)
    {
        if (frameCurrent.empty())
        {
            std::cout << "Frame1Message->End of sequence" << std::endl;
            break;
        }
        cvtColor(frameCurrent, frame_gray, COLOR_BGR2GRAY);
        // absdiff needs both inputs to have the same size and channel count,
        // so the previous frame is kept in grayscale too
        if (framePrevGray.empty())
            frame_gray.copyTo(framePrevGray);
        absdiff(frame_gray, framePrevGray, frameAbsDiff);

        imshow("frameCurrent", frameCurrent);
        imshow("frameAbsDiff", frameAbsDiff);
        if (waitKey(90) == 27)
            break;

        frame_gray.copyTo(framePrevGray);
        cap >> frameCurrent;
    }
    return 0;
}
```

|

|

2015-11-18 07:54:35 -0600

| commented answer | frame difference correct method @pklab, if I convert the video frames to grayscale and

want to calculate the difference as

current_frame - previous_frame, which one should I use, absdiff or subtract? I know the difference between them, but which one should be used here? In `framePrev = cv::Mat::zeros(frameCurrent.size(), frameCurrent.type());`

you are creating a Mat filled with zeros that has the same rows, columns and data type as the current frame, right? |

|

2015-11-18 07:54:15 -0600

| commented answer | frame difference correct method @pklab, if I convert the video frames to grayscale and

want to calculate the difference as:

current_frame - previous_frame

1. Which one should I use, absdiff or subtract? I know the difference between them, but which one should be used? 2. In `framePrev = cv::Mat::zeros(frameCurrent.size(), frameCurrent.type());`

you are creating a Mat filled with zeros that has the same rows, columns and data type as the current frame, right? |

|

2015-11-15 15:45:27 -0600

| received badge | ● Student


|

|

2015-11-15 13:52:40 -0600

| commented answer | frame difference correct method Thanks for the answer. Is the way I'm getting the current frame and the previous frame correct? How can I get the current frame and the previous frame? |

|

2015-11-15 13:15:30 -0600

| edited question | frame difference correct method I want to do basic frame differencing like this: diff = current frame - previous frame. Is this the correct way to do it? I'm not sure how to get the current and next frames.

```cpp
#include <opencv2/opencv.hpp>
#include <iostream>

using namespace cv;

int main()
{
    VideoCapture cap("Camouflage/b%05d.bmp");   // numbered image sequence (printf-style pattern)
    if (!cap.isOpened())
    {
        std::cout << "failed to open image sequence";
        return 1;
    }

    char c;
    Mat frame1, frame2, frame3;
    namedWindow("Original Frames", 1);
    namedWindow("Frame Difference", 1);

    while (1)
    {
        cap >> frame1;
        if (frame1.empty())
        {
            std::cout << "Frame1Message->End of sequence" << std::endl;
            break;
        }
        cap >> frame2;
        if (frame2.empty())
        {
            std::cout << "Frame2Message->End of sequence" << std::endl;
            break;
        }

        // saturated subtraction: pixels that got brighter in frame2 become 0
        frame3 = frame1 - frame2;

        imshow("Frame Difference", frame3);
        c = (char)waitKey(90);
        if (c == 27)
            break;

        imshow("Original Frames", frame1);
        c = (char)waitKey(90);
        if (c == 27)
            break;
    }
    return 0;
}
```

|