Hi guys!

From my understanding, keypoint matching with FLANN or brute force is largely invariant to transformations, so it should easily match a pattern that has been slightly moved, scaled, or rotated. I tested both the FLANN and the brute-force matcher in combination with SURF or FREAK, using various values for the Hessian threshold and for the maximum descriptor distance that decides which matches count as "good". However, when matching a QR code in photos where only its location, distance, and rotation changed slightly, the results have been quite inconsistent.
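A common version of the "minimum distance" filter keeps only the matches whose descriptor distance is within a fixed multiple (for example three times) of the smallest distance found for that image pair. Here is an OpenCV-free sketch of that heuristic, to make the logic explicit; `Match` is a hypothetical stand-in for `cv::DMatch` and `filterByMinDistance` is an illustrative name, not an OpenCV function.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical stand-in for cv::DMatch with only the fields this filter uses.
struct Match {
    int queryIdx;    // index of the keypoint in the object image
    int trainIdx;    // index of the keypoint in the scene image
    float distance;  // descriptor distance of this match
};

// Keep matches whose distance is at most `factor` times the smallest distance.
std::vector<Match> filterByMinDistance(const std::vector<Match>& matches,
                                       float factor = 3.0f) {
    assert(factor >= 1.0f);
    std::vector<Match> good;
    if (matches.empty()) return good;

    // Find the best (smallest) distance in this image pair.
    float minDist = matches[0].distance;
    for (const Match& m : matches) minDist = std::min(minDist, m.distance);

    // The threshold is relative to that best match, not absolute.
    for (const Match& m : matches)
        if (m.distance <= factor * minDist) good.push_back(m);
    return good;
}
```

Because the threshold depends on the single best match, it shifts from one image pair to the next: if one match happens to be nearly perfect (distance close to 0), almost every other match is discarded. That is one plausible source of inconsistent results between photos.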

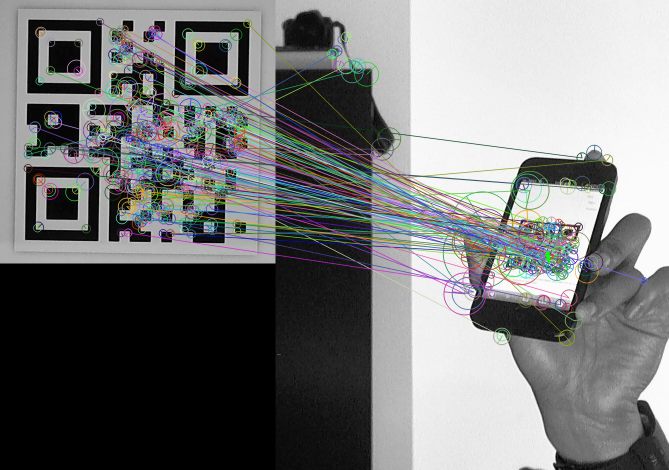

In rare cases it finds a match like this one (woohoo!):

Simply placing the pattern somewhere else and rotating it slightly already caused problems:

So right now I'm looking for some guidance on how to improve the detection of patterns like this one: should I keep trying different feature detectors and extractors, or should I start looking into training on sample images?

I'd appreciate any help!

Here's my code:

```cpp
#include <cstdio>
#include <iostream>
#include <limits>
#include <vector>

#include <opencv2/core/core.hpp>
#include <opencv2/features2d/features2d.hpp>  // FREAK, BFMatcher, drawMatches
#include <opencv2/nonfree/features2d.hpp>     // SurfFeatureDetector (nonfree module, OpenCV 2.4)
#include <opencv2/calib3d/calib3d.hpp>        // findHomography
#include <opencv2/imgproc/imgproc.hpp>        // line, resize
#include <opencv2/highgui/highgui.hpp>        // imread, imshow, waitKey

using namespace cv;

void readme();

/** @function main */
int main( int argc, char** argv )
{
    if( argc != 3 )
    { readme(); return -1; }

    Mat img_object = imread( argv[1], CV_LOAD_IMAGE_GRAYSCALE );
    Mat img_scene  = imread( argv[2], CV_LOAD_IMAGE_GRAYSCALE );

    if( img_object.empty() || img_scene.empty() )
    { std::cout << " --(!) Error reading images " << std::endl; return -1; }

    //-- Step 1: Detect the keypoints using the SURF detector
    int minHessian = 2000;
    SurfFeatureDetector detector( minHessian );

    std::vector<KeyPoint> keypoints_object, keypoints_scene;
    detector.detect( img_object, keypoints_object );
    detector.detect( img_scene, keypoints_scene );

    //-- Step 2: Calculate binary FREAK descriptors (may drop keypoints near the border)
    FREAK extractor;
    Mat descriptors_object, descriptors_scene;
    extractor.compute( img_object, keypoints_object, descriptors_object );
    extractor.compute( img_scene, keypoints_scene, descriptors_scene );

    if( descriptors_object.empty() || descriptors_scene.empty() )
    { std::cout << " --(!) No descriptors computed " << std::endl; return -1; }

    //-- Step 3: Match descriptor vectors with Hamming distance (FREAK is binary).
    //   knnMatch with k = 2 returns the two nearest neighbours the ratio test needs;
    //   crossCheck must be false when k > 1.
    BFMatcher matcher( NORM_HAMMING, false );
    std::vector< std::vector<DMatch> > knn_matches;
    matcher.knnMatch( descriptors_object, descriptors_scene, knn_matches, 2 );

    //-- Quick calculation of max and min distances of the best matches
    double max_dist = 0;
    double min_dist = std::numeric_limits<double>::max();
    for( size_t i = 0; i < knn_matches.size(); i++ )
    {
        if( knn_matches[i].empty() ) continue;
        double dist = knn_matches[i][0].distance;
        if( dist < min_dist ) min_dist = dist;
        if( dist > max_dist ) max_dist = dist;
    }
    printf( "-- Max dist : %f \n", max_dist );
    printf( "-- Min dist : %f \n", min_dist );

    //-- Keep only "good" matches (nearest-neighbour distance ratio test).
    //   A ratio of 1 would accept every match, so use a value below 1.
    std::vector< DMatch > good_matches;
    const float nndrRatio = 0.8f;
    for( size_t i = 0; i < knn_matches.size(); ++i )
    {
        if( knn_matches[i].size() < 2 )
            continue;
        const DMatch &m1 = knn_matches[i][0];  // best match
        const DMatch &m2 = knn_matches[i][1];  // second-best match
        if( m1.distance <= nndrRatio * m2.distance )
            good_matches.push_back( m1 );
    }
    printf( "-- Good matches : %d \n", (int)good_matches.size() );

    Mat img_matches;
    drawMatches( img_object, keypoints_object, img_scene, keypoints_scene,
                 good_matches, img_matches, Scalar::all(-1), Scalar::all(-1),
                 std::vector<char>(), DrawMatchesFlags::DRAW_RICH_KEYPOINTS );

    //-- Localize the object (findHomography needs at least 4 correspondences)
    if( good_matches.size() < 4 )
        std::cout << " --(!) Not enough good matches to localize the object " << std::endl;
    else
    {
        std::vector<Point2f> obj, scene;
        for( size_t i = 0; i < good_matches.size(); i++ )
        {
            //-- Get the keypoints from the good matches
            obj.push_back( keypoints_object[ good_matches[i].queryIdx ].pt );
            scene.push_back( keypoints_scene[ good_matches[i].trainIdx ].pt );
        }

        Mat H = findHomography( obj, scene, CV_RANSAC );
        if( !H.empty() )
        {
            //-- Get the corners from image_1 (the object to be "detected")
            std::vector<Point2f> obj_corners(4);
            obj_corners[0] = Point2f( 0, 0 );
            obj_corners[1] = Point2f( (float)img_object.cols, 0 );
            obj_corners[2] = Point2f( (float)img_object.cols, (float)img_object.rows );
            obj_corners[3] = Point2f( 0, (float)img_object.rows );

            std::vector<Point2f> scene_corners(4);
            perspectiveTransform( obj_corners, scene_corners, H );

            //-- Draw lines between the corners (the mapped object in the scene - image_2).
            //   drawMatches puts the scene to the right of the object, hence the x offset.
            const Point2f offset( (float)img_object.cols, 0 );
            for( int i = 0; i < 4; i++ )
                line( img_matches, scene_corners[i] + offset,
                      scene_corners[(i + 1) % 4] + offset, Scalar( 0, 255, 0 ), 4 );
        }
    }

    //-- Show detected matches at half size
    Mat img_out;
    resize( img_matches, img_out, Size( img_matches.cols / 2, img_matches.rows / 2 ) );
    imshow( "detection", img_out );
    waitKey(0);

    return 0;
}

/** @function readme */
void readme()
{ std::cout << " Usage: ./SURF_descriptor <img1> <img2>" << std::endl; }
```
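FREAK produces binary descriptors (a fixed-length string of bits per keypoint), which is why the matcher in the code uses `NORM_HAMMING` rather than an L2 norm. The sketch below shows, without OpenCV, what that distance is: the number of differing bits, i.e. the popcount of the XOR of the two descriptors. Storing a descriptor as a byte vector and the `hammingDistance` name are assumptions for illustration only.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hamming distance between two binary descriptors stored as bytes:
// XOR each byte pair and count the set bits of the result.
int hammingDistance(const std::vector<std::uint8_t>& a,
                    const std::vector<std::uint8_t>& b) {
    assert(a.size() == b.size());  // descriptors must have the same length
    int dist = 0;
    for (std::size_t i = 0; i < a.size(); ++i)
        dist += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    return dist;
}
```

The result is an integer between 0 and the descriptor length in bits, so a distance threshold tuned for a float descriptor such as SURF does not carry over to FREAK. The same applies to FLANN: its default KD-tree index expects float descriptors, so binary descriptors like FREAK need an LSH index instead.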