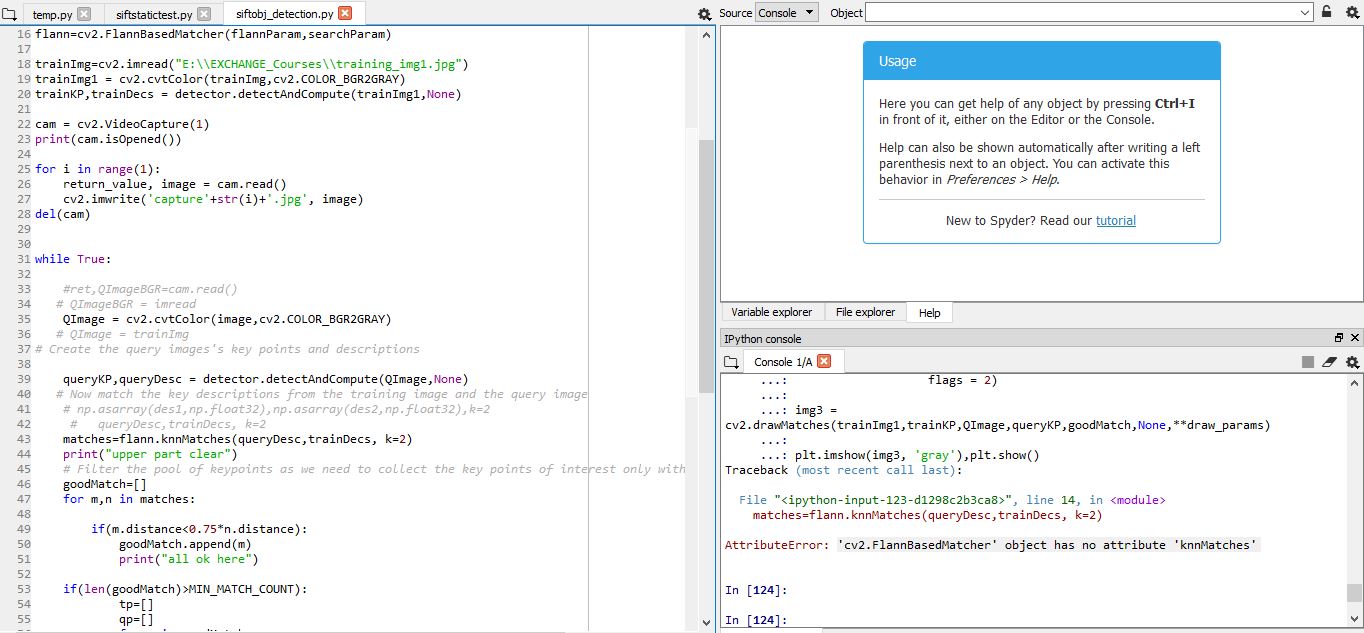

The code is for implementation of a SIFT-based algorithm with FLANN matcher on a captured image from webcam. The error for some reason is in the knnMatch where we deal with the captured image. The attached image link shows the error causing line. It would be great if someone could provide some solution to this issue, please comment below for specific details.

import cv2

import numpy as np

MIN_MATCH_COUNT = 30

detector = cv2.xfeatures2d.SIFT_create()

FLANN_INDEX_KDITREE = 0

flannParam = dict(algorithm=FLANN_INDEX_KDITREE,tree=5)

searchParam = dict(check = 50)

flann=cv2.FlannBasedMatcher(flannParam,searchParam)

trainImg=cv2.imread("E:\\EXCHANGE_Courses\\training_img1.jpg")

trainImg1 = cv2.cvtColor(trainImg,cv2.COLOR_BGR2GRAY)

trainKP,trainDecs = detector.detectAndCompute(trainImg1,None)

cam = cv2.VideoCapture(1)

print(cam.isOpened())

for i in range(1):

return_value, image = cam.read()

cv2.imwrite('capture'+str(i)+'.jpg', image)

del(cam)

while True:

QImage = cv2.cvtColor(image,cv2.COLOR_BGR2GRAY)

queryKP,queryDesc = detector.detectAndCompute(QImage,None)

# Now match the key descriptions from the training image and the query image

# np.asarray(des1,np.float32),np.asarray(des2,np.float32),k=2

# queryDesc,trainDecs, k=2

matches=flann.knnMatches(queryDesc,trainDecs, k=2)

print("upper part clear")

# Filter the pool of keypoints as we need to collect the key points of interest only with the object in mind

goodMatch=[]

for m,n in matches:

if(m.distance<0.75*n.distance):

goodMatch.append(m)

print("all ok here")

if(len(goodMatch)>MIN_MATCH_COUNT):

tp=[]

qp=[]

for m in goodMatch:

tp.append(trainKP[m.trainIdx].pt)

qp.append(queryKP[m.queryIdx].pt)

tp,qp = np.float32((tp,qp))

H,status = cv2.findHomography(tp,qp,cv2.RANSAC,3.0)

h,w=trainImg.shape

trainBorder = np.float32([[[0,0],[0,h-1],[w-1,h-1],[0,w-1]]])

queryBorder = cv2.perspectiveTransform(trainBorder,H)

# changed QImageBGR to image

cv2.polylines(QImage,[np.uint8(queryBorder)],True,(0,255,0),3)

else:

print("Not enough matches - %d/%d" %len(goodMatch),MIN_MATCH_COUNT)

cv2.imshow('results',QImage)

#print ("Not enough matches are found - %d/%d" % (len(goodMatch),MIN_MATCH_COUNT))

#matchesMask = None

#draw_params = dict(matchColor = (0,255,0), # draw matches in green color

# singlePointColor = None,

# matchesMask = matchesMask, # draw only inliers

# flags = 2)

#img3 = cv2.drawMatches(trainImg1,trainKP,QImage,queryKP,goodMatch,None,**draw_params)

#plt.imshow(img3, 'gray'),plt.show()

if cv2.waitKey(10)==ord('q'):

break

#cam.release()

#cv2.destroyAllWindows()