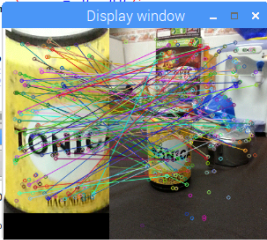

I have been trying both Surf and Brisk and none of them seem to recognize a single object in a photo.

I have tried with a lot of photos like these:

I also have tried with books and other sort of objects but with no result!

The code for my BRISK and SURF implementations is below:

BRISK

    const char * PimA = "image1.jpg"; // object
    const char * PimB = "image2.jpg"; // image (scene)

    cv::Mat GrayA = getMatByName(PimA);
    cv::Mat GrayB = getMatByName(PimB);

    std::vector<cv::KeyPoint> keypointsA, keypointsB;
    cv::Mat descriptorsA, descriptorsB;

    // set BRISK parameters
    int Threshl = 10;
    int Octaves = 4;
    float PatternScales = 1.0f;

    // declare a variable BRISKD of the type cv::BRISK
    cv::BRISK BRISKD(Threshl, Octaves, PatternScales); // initialize algorithm
    BRISKD.create("Feature2D.BRISK"); // no effect: create() is a static factory and the returned pointer is discarded

    BRISKD.detect(GrayA, keypointsA);
    BRISKD.compute(GrayA, keypointsA, descriptorsA);
    BRISKD.detect(GrayB, keypointsB);
    BRISKD.compute(GrayB, keypointsB, descriptorsB);

    cv::FlannBasedMatcher matcher(new cv::flann::LshIndexParams(20, 10, 2));
    std::vector<cv::DMatch> matches;
    matcher.match(descriptorsA, descriptorsB, matches);

    std::vector<cv::DMatch> goodMatches;

    // find the smallest and largest match distances
    int min = 1000, max = 0;
    for (size_t i = 0; i < matches.size(); i++) {
        if (matches[i].distance < min)
            min = matches[i].distance;
        if (matches[i].distance > max)
            max = matches[i].distance;
    }
    printf("min - max %d %d\n", min, max);

    // keep matches closer than 3*min (note: nothing passes when min == 0)
    for (size_t i = 0; i < matches.size(); i++)
        if (matches[i].distance < min*3)
            goodMatches.push_back(matches[i]);

    cv::Mat all_matches;
    cv::drawMatches( GrayA, keypointsA, GrayB, keypointsB,
                     goodMatches, all_matches, cv::Scalar::all(-1), cv::Scalar::all(-1),
                     vector<char>(), cv::DrawMatchesFlags::DEFAULT );
    showImage(all_matches, 1);

    std::vector<Point2f> obj;
    std::vector<Point2f> scene;
    for (size_t i = 0; i < goodMatches.size(); i++)
    {
        //-- Get the keypoints from the good matches
        obj.push_back( keypointsA[ goodMatches[i].queryIdx ].pt );
        scene.push_back( keypointsB[ goodMatches[i].trainIdx ].pt );
    }

    Mat H = findHomography(obj, scene, CV_RANSAC);

    std::vector<Point2f> obj_corners(4);
    obj_corners[0] = cvPoint(0,0);
    obj_corners[1] = cvPoint( GrayA.cols, 0 );
    obj_corners[2] = cvPoint( GrayA.cols, GrayA.rows );
    obj_corners[3] = cvPoint( 0, GrayA.rows );

    std::vector<Point2f> scene_corners(4);
    perspectiveTransform( obj_corners, scene_corners, H);

    //-- Draw lines between the corners (the mapped object in the scene - image_2 ).
    //-- The GrayA.cols offset is only valid on the side-by-side image produced by
    //-- drawMatches, so the lines go on all_matches rather than on GrayB.
    line( all_matches, scene_corners[0] + Point2f( GrayA.cols, 0), scene_corners[1] + Point2f( GrayA.cols, 0), Scalar( 0, 0, 255), 4 );
    line( all_matches, scene_corners[1] + Point2f( GrayA.cols, 0), scene_corners[2] + Point2f( GrayA.cols, 0), Scalar( 0, 0, 255), 4 );
    line( all_matches, scene_corners[2] + Point2f( GrayA.cols, 0), scene_corners[3] + Point2f( GrayA.cols, 0), Scalar( 0, 0, 255), 4 );
    line( all_matches, scene_corners[3] + Point2f( GrayA.cols, 0), scene_corners[0] + Point2f( GrayA.cols, 0), Scalar( 0, 0, 255), 4 );

    showImage(all_matches, 1);
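
A note on the distance filter used in the BRISK code: with binary descriptors the smallest Hamming distance can be 0, in which case `distance < min*3` rejects every match. Below is a minimal sketch of the same filter with a lower bound on the threshold. It does not use OpenCV: `Match` is a hypothetical stand-in for `cv::DMatch`, and `filterByMinDistance`, `ratio` and `floorDistance` are illustrative names that do not appear in my code.

```cpp
#include <algorithm>
#include <limits>
#include <vector>

// Hypothetical stand-in for cv::DMatch so the sketch compiles without OpenCV.
struct Match {
    int queryIdx;
    int trainIdx;
    float distance;
};

// Keep matches whose distance is at most max(ratio * minDistance, floorDistance).
// The floor keeps the threshold from collapsing to 0 when the best match is exact.
std::vector<Match> filterByMinDistance(const std::vector<Match>& matches,
                                       float ratio, float floorDistance) {
    std::vector<Match> good;
    if (matches.empty()) return good;

    // Smallest distance over all matches.
    float minDistance = std::numeric_limits<float>::max();
    for (const Match& m : matches)
        minDistance = std::min(minDistance, m.distance);

    const float threshold = std::max(ratio * minDistance, floorDistance);
    for (const Match& m : matches)
        if (m.distance <= threshold)
            good.push_back(m);
    return good;
}
```

For example, `filterByMinDistance(matches, 3.0f, 10.0f)` keeps matches with a distance of up to 10 when the best distance is 0. The floor value is a guess and would need tuning for each descriptor type.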

SURF

    Mat img_object = imread( "image1.jpg", CV_LOAD_IMAGE_GRAYSCALE );
    Mat img_scene = imread( "image2.jpg", CV_LOAD_IMAGE_GRAYSCALE );

    if( !img_object.data || !img_scene.data )
    { std::cout<< " --(!) Error reading images " << std::endl; return -1; }

    //-- Step 1: Detect the keypoints using SURF Detector
    int minHessian = 400;

    SurfFeatureDetector detector( minHessian );

    std::vector<KeyPoint> keypoints_object, keypoints_scene;

    detector.detect( img_object, keypoints_object );
    detector.detect( img_scene, keypoints_scene );

    //-- Step 2: Calculate descriptors (feature vectors)
    SurfDescriptorExtractor extractor;

    Mat descriptors_object, descriptors_scene;

    extractor.compute( img_object, keypoints_object, descriptors_object );
    extractor.compute( img_scene, keypoints_scene, descriptors_scene );

    //-- Step 3: Matching descriptor vectors using FLANN matcher
    FlannBasedMatcher matcher;
    std::vector< DMatch > matches;
    matcher.match( descriptors_object, descriptors_scene, matches );

    double max_dist = 0; double min_dist = 100;

    //-- Quick calculation of max and min distances between keypoints
    for( int i = 0; i < descriptors_object.rows; i++ )
    {
        double dist = matches[i].distance;
        if( dist < min_dist ) min_dist = dist;
        if( dist > max_dist ) max_dist = dist;
    }

    printf("-- Max dist : %f \n", max_dist );
    printf("-- Min dist : %f \n", min_dist );

    //-- Draw only "good" matches (i.e. whose distance is less than 3*min_dist )
    std::vector< DMatch > good_matches;

    for( int i = 0; i < descriptors_object.rows; i++ )
    {
        if( matches[i].distance < 3*min_dist )
        { good_matches.push_back( matches[i]); }
    }

    Mat img_matches;
    drawMatches( img_object, keypoints_object, img_scene, keypoints_scene,
                 good_matches, img_matches, Scalar::all(-1), Scalar::all(-1),
                 vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS );

    //-- Localize the object
    std::vector<Point2f> obj;
    std::vector<Point2f> scene;

    for( int i = 0; i < good_matches.size(); i++ )
    {
        //-- Get the keypoints from the good matches
        obj.push_back( keypoints_object[ good_matches[i].queryIdx ].pt );
        scene.push_back( keypoints_scene[ good_matches[i].trainIdx ].pt );
    }

    Mat H = findHomography( obj, scene, CV_RANSAC );

    //-- Get the corners from the image_1 ( the object to be "detected" )
    std::vector<Point2f> obj_corners(4);
    obj_corners[0] = cvPoint(0,0); obj_corners[1] = cvPoint( img_object.cols, 0 );
    obj_corners[2] = cvPoint( img_object.cols, img_object.rows ); obj_corners[3] = cvPoint( 0, img_object.rows );
    std::vector<Point2f> scene_corners(4);

    perspectiveTransform( obj_corners, scene_corners, H);

    //-- Draw lines between the corners (the mapped object in the scene - image_2 )
    line( img_matches, scene_corners[0] + Point2f( img_object.cols, 0), scene_corners[1] + Point2f( img_object.cols, 0), Scalar(0, 255, 0), 4 );
    line( img_matches, scene_corners[1] + Point2f( img_object.cols, 0), scene_corners[2] + Point2f( img_object.cols, 0), Scalar( 0, 255, 0), 4 );
    line( img_matches, scene_corners[2] + Point2f( img_object.cols, 0), scene_corners[3] + Point2f( img_object.cols, 0), Scalar( 0, 255, 0), 4 );
    line( img_matches, scene_corners[3] + Point2f( img_object.cols, 0), scene_corners[0] + Point2f( img_object.cols, 0), Scalar( 0, 255, 0), 4 );

    //-- Show detected matches
    showImage(img_matches, 1);

    waitKey(0);
    return 0;
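
Both versions pass the filtered points straight to `findHomography`. A homography has 8 degrees of freedom and each point pair contributes two equations, so at least 4 pairs are needed for an estimate. A small sketch of a guard that could run before that call is shown here; `Point2` is a hypothetical stand-in for `cv::Point2f`, and `canEstimateHomography` is an illustrative helper name, not an OpenCV function.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Point2f so the sketch compiles without OpenCV.
struct Point2 {
    float x;
    float y;
};

// True when the two point lists pair up one-to-one and contain
// at least the 4 correspondences a homography estimate requires.
bool canEstimateHomography(const std::vector<Point2>& obj,
                           const std::vector<Point2>& scene) {
    const std::size_t minPairs = 4;
    return obj.size() == scene.size() && obj.size() >= minPairs;
}
```

If this returns false for `obj` and `scene`, the match filter left too few points, and it would probably make more sense to report that than to draw a box.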

Thanks in advance!