Stereo calibration fails

I am trying to properly calibrate a multi-stereo system. For this, I need to first calibrate camera intrinsic parameters, then the stereo extrinsic parameters for each pair, then the extrinsics between each stereo pair.

The cameras that I have don't have very good lenses for this. These are Xiaomi Yi sport-action cameras, and each currently has a 155-degree lens (alternative lenses are ordered and on the way). More on that in the appendix.

I'm using the calibrateCamera function to calibrate the intrinsics. I use the rational model with 6 radial coefficients from the latest OpenCV build (see 'Detailed Description' here). Neither the tangential coefficients nor the thin-prism coefficients seem to improve the reprojection error significantly, so I don't use them. I calibrate each camera on around 200 images, and I get a reprojection error between 0.35 and 0.40 pixels. I don't yet know what gets rejected by RANSAC and what doesn't, but I verify each calibration on a separate set of images, which yields about the same reprojection error (with the intrinsics and distortion coefficients held fixed during testing).
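For reference, this is the radial part of the rational model as I understand it from the OpenCV documentation, with the tangential and thin-prism terms dropped as in my setup. Here (x', y') are normalized image coordinates before distortion and (x'', y'') are the distorted ones:

```latex
\begin{aligned}
r^2 &= x'^2 + y'^2 \\
x'' &= x' \,\frac{1 + k_1 r^2 + k_2 r^4 + k_3 r^6}{1 + k_4 r^2 + k_5 r^4 + k_6 r^6} \\
y'' &= y' \,\frac{1 + k_1 r^2 + k_2 r^4 + k_3 r^6}{1 + k_4 r^2 + k_5 r^4 + k_6 r^6}
\end{aligned}
```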

I start seeing a problem at the stereo stage. I lock the intrinsics (incl. distortion coefficients) during stereo calibration. I get reprojection errors in the range of 2.5-4.0 pixels. Again, I have no idea what's being rejected by RANSAC as of yet.
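To find out which views contribute most of the error, a per-view breakdown may help. The sketch below is plain Python and assumes I already have, for each view, the detected corners and the corners reprojected with the calibrated parameters (for example via cv2.projectPoints). The function names `per_view_rms` and `worst_views` are hypothetical helpers of mine, not OpenCV API.

```python
import math

def per_view_rms(observed, projected):
    """Return one RMS reprojection error (in pixels) per view.

    observed, projected: lists of views, each view a list of (x, y) points
    in the same order.
    """
    errors = []
    for obs, proj in zip(observed, projected):
        # Squared pixel distance between each detected and reprojected corner.
        sq = [(ox - px) ** 2 + (oy - py) ** 2
              for (ox, oy), (px, py) in zip(obs, proj)]
        errors.append(math.sqrt(sum(sq) / len(sq)))
    return errors

def worst_views(errors, k=3):
    """Indices of the k views with the largest RMS error, worst first."""
    return sorted(range(len(errors)), key=lambda i: errors[i], reverse=True)[:k]
```

Views that stand out here would be the first candidates to inspect or drop before re-running the stereo calibration.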

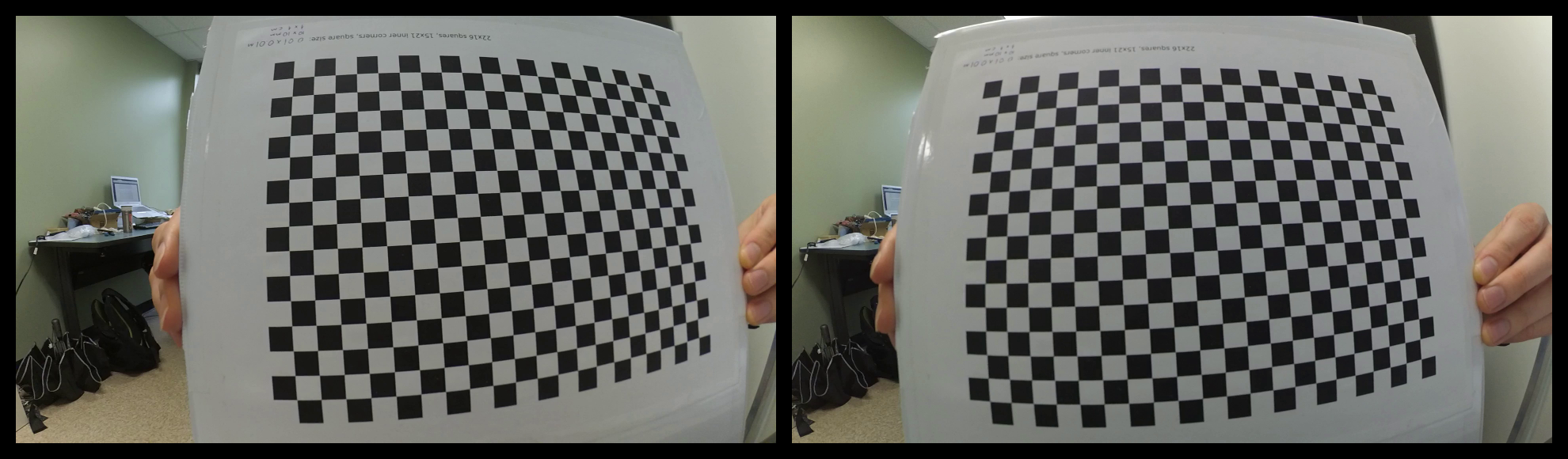

Here are sample left & right images (with distortion), scaled down:

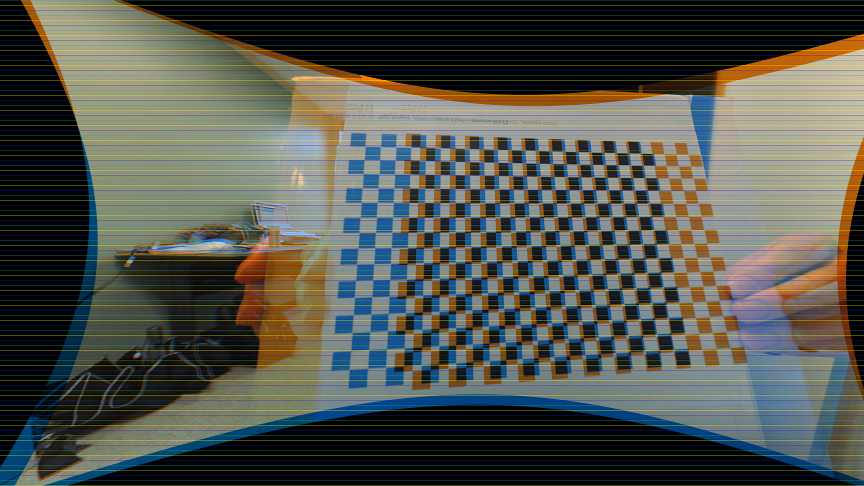

Here is an overlay of the same images after stereo-rectification (blue=left, orange=right), with horizontal lines depicting where epipolar lines are supposed to be:

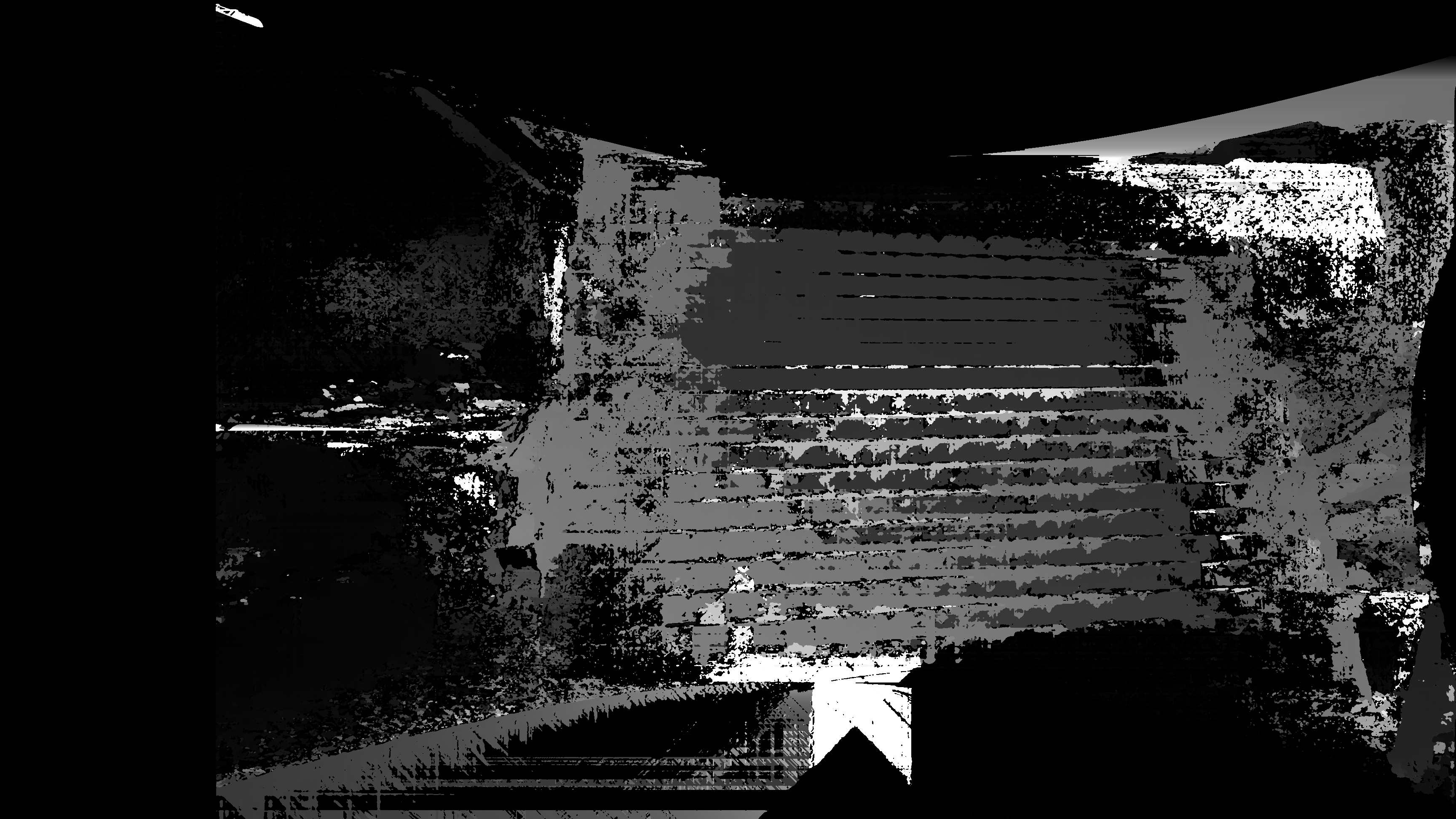
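One way to put a number on the overlay above is the vertical offset between matched points in the rectified pair: after a good rectification, corresponding points should share the same row. This is a minimal sketch that assumes the matches (for example checkerboard corners detected in both rectified images) are already available as (x, y) lists; `vertical_residuals` is a hypothetical helper name.

```python
def vertical_residuals(left_pts, right_pts):
    """Return (mean, max) absolute row difference in pixels between
    matched points of a rectified stereo pair."""
    # Only the y coordinates matter: x differences are the disparity.
    dys = [yl - yr for (_, yl), (_, yr) in zip(left_pts, right_pts)]
    mean_abs = sum(abs(d) for d in dys) / len(dys)
    max_abs = max(abs(d) for d in dys)
    return mean_abs, max_abs
```

A residual that grows toward the image corners would point at the distortion model, while a roughly constant one would point at the extrinsics.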

Here is the resulting disparity using the StereoSGBM algorithm (tuning its parameters in any way doesn't yield any dramatic improvements over this):

Conclusion: stereo calibration is not accurate enough to yield appropriate depth-from-stereo. What can I do to fix that?

[Edit] I have long suspected that the high reprojection error during stereo calibration results from the cameras not being perfectly synced. Currently, I can only synchronize them in post-processing, which means the sync accuracy is within 1/2 frame. The cameras run at 60 fps, so that is up to about 8.3 ms. I have yet to verify this by shooting a still scene, but it seems that the epipolar "lines" don't come out horizontal and straight for still objects either...
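To get a feel for how much a half-frame offset could matter, here is a small back-of-the-envelope sketch. The target speed of 300 px/s is a made-up illustration value, not a measurement, and both function names are hypothetical.

```python
def max_sync_offset_ms(fps, frame_fraction=0.5):
    """Worst-case time offset in milliseconds for a given sync accuracy,
    expressed as a fraction of one frame."""
    return 1000.0 * frame_fraction / fps

def apparent_shift_px(speed_px_per_s, fps, frame_fraction=0.5):
    """Image-space shift in pixels of a target moving at speed_px_per_s
    during the worst-case sync offset."""
    return speed_px_per_s * frame_fraction / fps

print(max_sync_offset_ms(60))        # worst-case offset at 60 fps
print(apparent_shift_px(300.0, 60))  # assumed 300 px/s checkerboard motion
```

If the calibration target moves at anything like that speed in the image, the resulting shift would be of the same order as the stereo reprojection error I see, which is why I want to rule it out with a still scene.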

Appendix (on lenses)

The cameras' manufacturer claims the lenses are 155-degree "fish-eye" lenses, where DFOV = 155 degrees and the focal length is 2.73 mm ±5%. The sensor's diagonal is 7.77 mm. If you plug the focal length and diagonal into the most basic formula for calculating DFOV, DFOV = 2*arctan(sensor_diagonal/(2f)), you get about 110 degrees. Hence I suspect the lens is a compound lens with some unusual configuration that does not adhere to this formula (most camera lenses are compound nowadays...). I manually measured the HFOV to be about 120 degrees, which does not work out math-wise with 155 degrees either. It may be that the camera is not using the entire width of the sensor in video mode, but all of this is guesswork.
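The arithmetic above can be reproduced as follows. The first function is the basic (rectilinear) formula from the paragraph. The second assumes an ideal equidistant fisheye projection (r = f * theta), which is purely my assumption for comparison and not something the manufacturer states.

```python
import math

def dfov_rectilinear_deg(diag_mm, f_mm):
    """Diagonal FOV for an ideal rectilinear lens: 2*atan(d / (2f))."""
    return math.degrees(2.0 * math.atan(diag_mm / (2.0 * f_mm)))

def dfov_equidistant_deg(diag_mm, f_mm):
    """Diagonal FOV for an ideal equidistant fisheye (assumed model):
    r = f * theta, so the full angle is d / f radians."""
    return math.degrees(diag_mm / f_mm)

DIAG_MM = 7.77   # sensor diagonal from the spec
F_MM = 2.73      # nominal focal length, +-5% per the spec

for f in (F_MM * 0.95, F_MM, F_MM * 1.05):
    print(f"f={f:.3f} mm  rectilinear={dfov_rectilinear_deg(DIAG_MM, f):.1f}  "
          f"equidistant={dfov_equidistant_deg(DIAG_MM, f):.1f}")
```

With the nominal focal length the rectilinear formula gives about 110 degrees and the equidistant assumption gives about 163 degrees, so the advertised 155 degrees may simply refer to a fisheye-style projection rather than to the basic formula.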