Coordinate axes with triangulatePoints

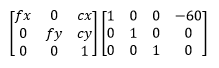

So, I have the projection matrix of the left camera:

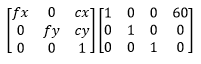

And the projection matrix of my right camera:

When I run `triangulatePoints` on the two vectors of corresponding points, I get a collection of points in 3D space.
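For reference, `triangulatePoints` returns a 4xN array of homogeneous coordinates rather than 3D points. Each column is only defined up to scale, and its fourth component can come out negative, so reading the first three rows without dividing by the fourth can flip the sign of Z. A minimal pure-Python sketch of the conversion follows; it assumes the output has already been copied into four lists (rows X, Y, Z, W), and the sample values are hypothetical. With the Python bindings, `cv2.convertPointsFromHomogeneous(points4d.T)` performs the same division.

```python
def from_homogeneous(points4d):
    """Convert triangulatePoints-style output (rows X, Y, Z, W) into (x, y, z) tuples.

    Each column is divided by its own W, so the result does not depend on
    the arbitrary scale (or sign) of the homogeneous vector.
    """
    xs, ys, zs, ws = points4d
    points = []
    for x, y, z, w in zip(xs, ys, zs, ws):
        if abs(w) < 1e-12:
            raise ValueError("point at infinity (W is zero)")
        points.append((x / w, y / w, z / w))
    return points


# Hypothetical output for two points: the second column came back with a negative scale.
points4d = [
    [0.5, -0.2],   # X
    [0.25, -0.1],  # Y
    [2.0, -2.0],   # Z (raw, before dividing)
    [1.0, -1.0],   # W
]
print(from_homogeneous(points4d))  # [(0.5, 0.25, 2.0), (0.2, 0.1, 2.0)]
```

Both columns describe points in front of the camera; only the raw Z of the second one is negative.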

All of the reconstructed points have a negative Z coordinate. I assume that each camera initially looks along the positive Z axis.
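The "camera looks along +Z" assumption can be checked numerically. With `P = K [R | t]`, a camera whose centre is `C` in world coordinates has `t = -R C`, and, for a `K` whose last row is `(0, 0, 1)` and a rotation matrix `R`, the third component of `P (X, Y, Z, 1)^T` is the point's depth along that camera's optical axis. The plain-Python sketch below assumes hypothetical intrinsics (f = 800 px, principal point (320, 240)), no rotation, and a right camera 0.1 m along +X; the helper names are illustrative.

```python
def projection_matrix(K, R, C):
    """Build the 3x4 matrix P = K [R | t] with t = -R C (C = camera centre in world coordinates)."""
    t = [-sum(R[i][j] * C[j] for j in range(3)) for i in range(3)]
    Rt = [R[i] + [t[i]] for i in range(3)]  # 3x4 [R | t]
    return [[sum(K[i][k] * Rt[k][j] for k in range(3)) for j in range(4)] for i in range(3)]


def project(P, X):
    """Return (u, v, w): pixel coordinates of world point X and its depth w."""
    Xh = list(X) + [1.0]
    u, v, w = (sum(P[i][j] * Xh[j] for j in range(4)) for i in range(3))
    return u / w, v / w, w


# Hypothetical intrinsics and a 0.1 m baseline; both cameras look down +Z (R = identity).
K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
P_left = projection_matrix(K, I3, [0.0, 0.0, 0.0])
P_right = projection_matrix(K, I3, [0.1, 0.0, 0.0])  # right camera centre at +X

point = [0.0, 0.0, 5.0]
u_l, _, w_l = project(P_left, point)
u_r, _, w_r = project(P_right, point)
print(P_right[0][3])     # -80.0: translation column is -f * baseline
print(w_l > 0, w_r > 0)  # True True: the point is in front of both cameras
print(u_l - u_r)         # 16.0: disparity f * baseline / Z
```

In this idealised rectified setup, writing the right camera's translation as `+0.1` instead of `-0.1` (that is, putting its centre on the wrong side) makes the same correspondences consistent only with the mirrored point `(-X, -Y, -Z)`, which would show up as a negative Z for every triangulated point.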

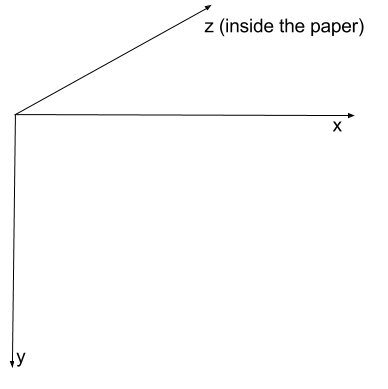

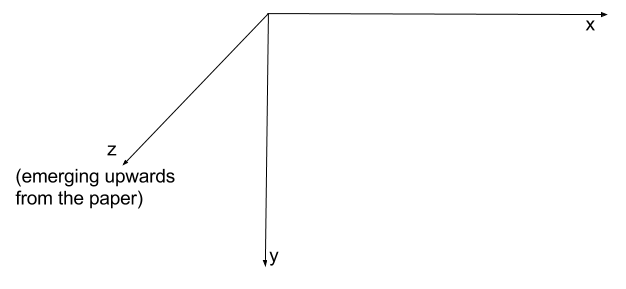

My assumption was that OpenCV uses a right-handed coordinate system, like this:

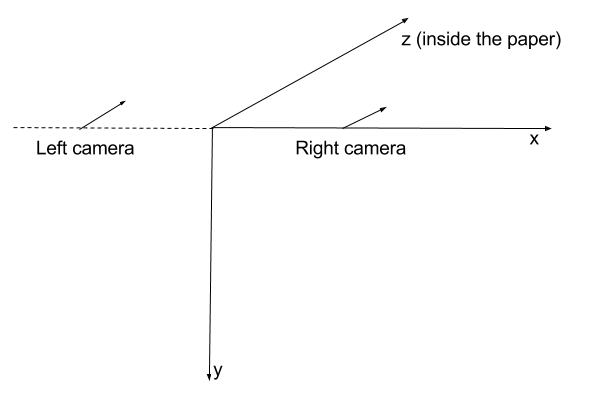

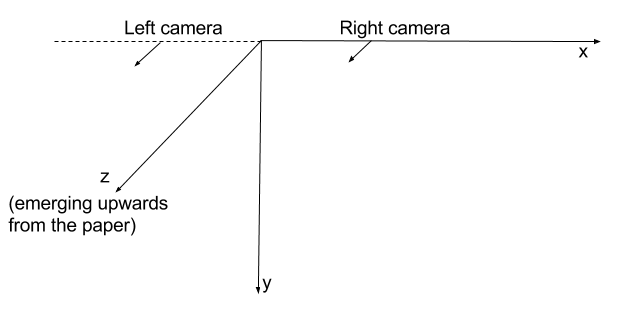
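Handedness can also be tested without pictures: a frame is right-handed when the cross product of its x and y axes points along its z axis. The sketch below assumes the camera-frame convention drawn in OpenCV's pinhole camera model documentation (x to the right in the image, y downwards, z forward along the optical axis), with the axes written as vectors in some common reference frame; the helper names are illustrative.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def is_right_handed(x_axis, y_axis, z_axis):
    """True if (x, y, z) form a right-handed frame: x cross y points along z."""
    c = cross(x_axis, y_axis)
    return sum(ci * zi for ci, zi in zip(c, z_axis)) > 0


# Camera axes written in the camera's own frame: x right, y down, z forward.
print(is_right_handed((1, 0, 0), (0, 1, 0), (0, 0, 1)))    # True
# The same axes drawn in a y-up world where the camera looks down -Z: still right-handed.
print(is_right_handed((1, 0, 0), (0, -1, 0), (0, 0, -1)))  # True
# Flipping only z gives a left-handed frame.
print(is_right_handed((1, 0, 0), (0, 1, 0), (0, 0, -1)))   # False
```

The second case shows why a "y down, z forward" camera can look mirrored next to a y-up visualisation while still being right-handed.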

So, when I positioned my cameras using these projection matrices, the complete picture would look like this:

But my experiment leads me to believe that OpenCV uses a left-handed coordinate system:

And that my projection matrices have effectively mixed up left and right:

Is everything I've said correct? Is the latter coordinate system really the one that is used by OpenCV?

If I assume that it is, everything seems to work fine. But when I want to visualize things using the viz module, its `WCoordinateSystem` widget is right-handed.

Is the question unclear in some way? I would consider this to be basic stuff, but I just cannot find a definitive answer.

Specifically, I use `triangulatePoints` to get 3D point coordinates, and immediately after that I pass these 3D point coordinates to `solvePnP`. I expect to get a camera pose close to the origin with zero rotation. Instead I get some wild values, indicating that my camera moved about 5 meters in some random direction.
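A round-trip check can separate a problem in the projection matrices from a problem in the `solvePnP` call. If the left matrix is `K [I | 0]` (an assumption; the actual values are not shown above), the triangulated points are expressed in the left camera's frame, so reprojecting them with the left matrix must give back the left image points, and `solvePnP` given those 3D points, the left image points and the same `K` should return `rvec` and `tvec` close to zero. The pure-Python sketch below swaps in a simple linear triangulation with W fixed to 1 (not OpenCV's SVD-based implementation) and uses hypothetical intrinsics and a hypothetical 0.1 m baseline; it only illustrates the check.

```python
def project(P, X):
    """Project world point X with a 3x4 matrix P; returns pixel (u, v)."""
    Xh = list(X) + [1.0]
    u, v, w = (sum(P[i][j] * Xh[j] for j in range(4)) for i in range(3))
    return u / w, v / w


def solve3(A, b):
    """Solve the 3x3 system A x = b by Cramer's rule."""
    def det(M):
        return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
                - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
                + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))
    d = det(A)
    x = []
    for col in range(3):
        M = [row[:] for row in A]
        for r in range(3):
            M[r][col] = b[r]
        x.append(det(M) / d)
    return x


def triangulate(P1, P2, pt1, pt2):
    """Linear triangulation of one correspondence, with the homogeneous W fixed to 1."""
    rows = []
    for P, (u, v) in ((P1, pt1), (P2, pt2)):
        # u * (row 3 of P) - (row 1 of P) annihilates the true homogeneous point; same for v.
        rows.append([u * P[2][j] - P[0][j] for j in range(4)])
        rows.append([v * P[2][j] - P[1][j] for j in range(4)])
    # Least squares on rows[:, :3] X = -rows[:, 3] via the normal equations.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    b = [-sum(r[i] * r[3] for r in rows) for i in range(3)]
    return solve3(A, b)


# Hypothetical rectified pair: f = 800 px, principal point (320, 240),
# left camera P = K [I | 0], right camera centre at +0.1 m on X (so t = -0.1).
P_left = [[800.0, 0.0, 320.0, 0.0], [0.0, 800.0, 240.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
P_right = [[800.0, 0.0, 320.0, -80.0], [0.0, 800.0, 240.0, 0.0], [0.0, 0.0, 1.0, 0.0]]

true_point = [0.3, -0.2, 4.0]
pt_left, pt_right = project(P_left, true_point), project(P_right, true_point)
X = triangulate(P_left, P_right, pt_left, pt_right)
print(X)                   # close to [0.3, -0.2, 4.0]
print(project(P_left, X))  # close to pt_left: the left camera's pose is the identity
```

If this round trip already fails with the real matrices and correspondences, the projection matrices are inconsistent with the image points; if it passes but `solvePnP` does not return a near-zero pose, the `solvePnP` inputs (Euclidean Nx3 points rather than the 4xN homogeneous array, the left camera's image points in the same order, and the same camera matrix and distortion assumptions) are the next things to check.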