SVM error when input data size is too large (calc_non_rbf_base)

Hello everyone,

I'm having trouble training an SVM, and I'm wondering if it has to do with the size of each input vector. As usual, I'm computing some descriptors to be passed to the classifier (vector<float> descriptors). Everything goes fine (the SVM is trained without problems) until the vector gets too big (around 220000 in length; this is just an approximation, I haven't found the exact amount yet), at which point an exception is raised (an error at some memory location).

So... is there any known maximum size for those input vectors? I haven't found anything in the docs, nor in the LibSVM docs (as the OpenCV implementation is based on it).

Just to clarify: 1. the exception does not point to running out of memory, there's plenty of memory available, and 2. I'm using OpenCV 2.4.10 on a 64-bit Windows 7 computer with 16GB RAM

UPDATE 1:

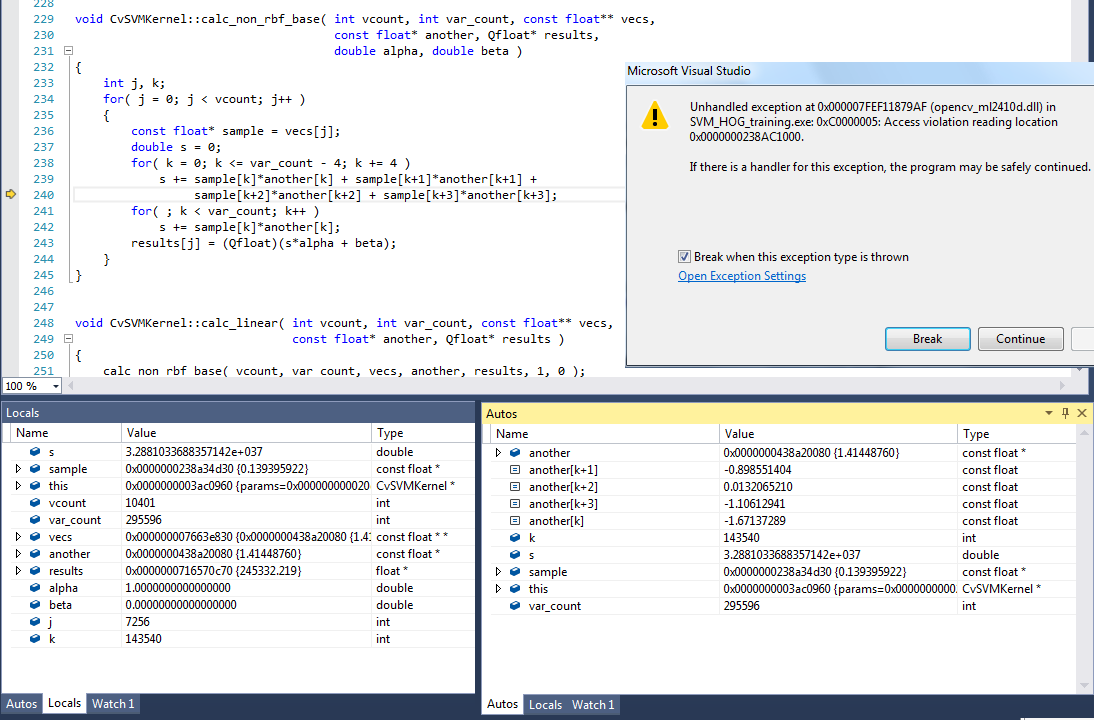

I've been debugging the program and the error arises in the calc_non_rbf_base function in svm.cpp. I still don't know the reason for the crash. It seems like something is going wrong at a low level, but my skills at that level are not enough to understand what's going on.

I'm posting a screen capture with the values of all variables at the exception point, and also the exception error, as I think it's the best way to show all relevant info. It seems something is happening while accessing the samples variable.

More details about my code:

- The capture was taken while using 10400 samples, each represented by a descriptor of size 295596. However, as you can see, the exception arises at sample number 7256 (j variable) and component number 143540 (k variable). That's quite interesting and unexpected, as I have successfully trained the SVM using 10400 samples x ~185000 descriptor size

- I've successfully trained an SVM with dummy data of size 50 samples x 1,000,000 descriptor size. So I guess it has to do not only with the descriptor size but with a combination of both sizes.

- Using OpenCV 2.4.10. I haven't tested the issue with 2.4.11 nor 3.0.0
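To make the "combination of both sizes" concrete, here is a small sketch that computes the total number of float elements for the configurations mentioned above and checks each against the signed 32-bit limit. The helper names (`totalElements`, `totalBytes`, `exceedsInt32`) are made up for illustration and are not OpenCV functions. This is only arithmetic on the reported sizes; whether a 32-bit index is actually involved inside svm.cpp is an assumption that nothing in this thread confirms.

```cpp
#include <cassert>
#include <cstdint>
#include <limits>

// Hypothetical helper: number of float elements in a row-major
// (samples x featureLength) matrix, computed in 64 bits so the
// product itself cannot overflow for sizes like the ones above.
std::int64_t totalElements(std::int64_t samples, std::int64_t featureLength) {
    assert(samples >= 0 && featureLength >= 0);
    return samples * featureLength;
}

// Hypothetical helper: size of that matrix in bytes, assuming 32-bit floats.
std::int64_t totalBytes(std::int64_t samples, std::int64_t featureLength) {
    return totalElements(samples, featureLength) *
           static_cast<std::int64_t>(sizeof(float));
}

// Hypothetical helper: true when the element count no longer fits
// in a signed 32-bit integer.
bool exceedsInt32(std::int64_t elements) {
    return elements > std::numeric_limits<std::int32_t>::max();
}
```

With these helpers, 10400 x 295596 gives 3,074,198,400 elements (about 12.3 GB of floats), which is above the 32-bit limit of 2,147,483,647, while the two configurations that trained fine, 10400 x 185000 (1,924,000,000) and 50 x 1,000,000 (50,000,000), both stay below it. That pattern is consistent with an overflow somewhere, but it does not prove one.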

I really hope some of the gurus of the forum can take a look and give some insights on the issue, because it could be a quite huge hiccup in my research. Of course, ask whatever extra info you want. I'll be incredibly thankful for all help

(EDIT: changed title of question to better address issue)

UPDATE 2:

I've been doing more tests and I've come across new findings. First of all, using the same code as in Update 1, I tested different lengths of the feature vector (all with 10401 samples), with these results (screenshots available if someone needs them):

- Features length 295596 -> crashes at j variable = 7256

- Features length 238140 -> crashes at j variable = 9008

- Features length 220000 -> crashes at ...
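As a quick sanity check on the crash positions listed above, this sketch computes the flat element index j * length + k that a row-major samples matrix would have at the point of the crash, and how far that index is from 2^31. The function names are hypothetical, and the row-major flat layout is itself an assumption: I have not verified how svm.cpp actually addresses the samples.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: flat index of component k of sample j in a
// row-major matrix with featureLength columns, computed in 64 bits.
std::int64_t flatIndex(std::int64_t j, std::int64_t featureLength, std::int64_t k) {
    assert(j >= 0 && featureLength > 0 && k >= 0 && k < featureLength);
    return j * featureLength + k;
}

// Hypothetical helper: how many elements remain between the given
// index and 2^31 (a negative result means the index is past 2^31).
std::int64_t headroomBelow2Pow31(std::int64_t index) {
    const std::int64_t limit = std::int64_t{1} << 31;
    return limit - index;
}
```

For the first case (length 295596, j = 7256, k = 143540 from Update 1), the flat index is 2,144,988,116, which is 2,495,532 elements short of 2^31. For the second case (length 238140, j = 9008; k is not given above, so k = 0 is used) the row starts at 2,145,165,120, which is 2,318,528 short of 2^31. Both crashes land within roughly 0.1% of 2^31, which is suggestive but not a diagnosis.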

Interesting question, will follow this ;) No idea however why it goes wrong ... never reached descriptors that large :D

Update 2 has new interesting findings and code to reproduce issue. Tested on OpenCV 2.4.12

I got a system with 32 GB RAM running at work, might check if I run into similar problems with other numbers somewhere this week!

@StevenPuttemans thank you so so much, this is driving me crazy. I'll also check with version 3.0.0 this week

Tested with OpenCV 3.0.0 and had no errors. However, there are other problems in this version too. @StevenPuttemans: should I mention some of the devs so they can have a look at this question, or is it better to directly open an issue ticket? Do you think it is likely to be solved, given that the ml module was rewritten for the new version?

I think it is better to have a call to @mshabunin because he rewrote the largest part of the ML module. That being said, your slower processing is probably due to the T-API backend which is causing OpenCL problems for the moment. Disabling OpenCL before calling your functions solves the problem in most cases. Similar topic here but then for Sobel filtering. Try adding

#include <opencv2/core/ocl.hpp> and cv::ocl::setUseOpenCL(false); at the top of your program.

I think it is better to open an issue with the link to minimal reproducing code and some system information.

Done: #5137. Thank you very much both, @StevenPuttemans @mshabunin