Rotating a Mat by 90 degrees gives the wrong result

I want to rotate an image by 90 degrees. My code is as follows:

```cpp
void rotate90(Mat & src, Mat & dst, int direction)
{
    int src_width = src.cols, src_height = src.rows;
    cout << src_width << " " << src_height << endl;
    Point center(src_width / 2.0f, src_height / 2.0f);
    double angle = 0;
    if(direction > 0)
        angle = 90.0;
    else
        angle = -90.0;
    Mat rot_mat = getRotationMatrix2D(center, angle, 1);
    warpAffine(src, dst, rot_mat, Size(src_height, src_width));
}

int main(int argc, const char *argv[])
{
    Mat img = imread("/Users/chuanliu/Desktop/src4/p00.JPG");
    resize(img, img, Size(1024, 683));
    rotate90(img, img, 1);
    imwrite("/Users/chuanliu/Desktop/roatation.jpg",img);
    return 0;
}
```

But the result looks like this:

Before rotation:

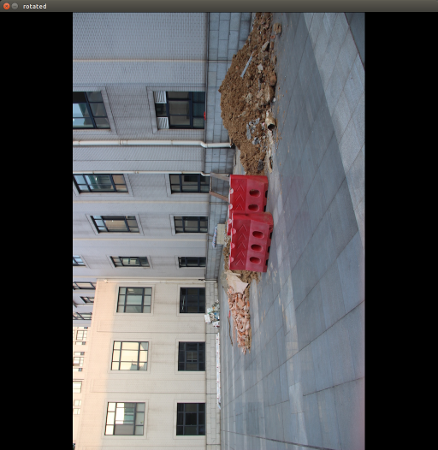

After rotation:

It seems that something is wrong with the center of rotation, but I don't think I set the wrong center. Can anyone tell me what is wrong?

Hmm, isn't the center point determined in dst coordinates? You also swap width and height there. If I rotate around the src center, I get exactly your result.

So could you tell me the right way to do this? Which point should I choose to be the rotation center?
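
One way to reason about the center is to rebuild the 2x3 matrix with the formula documented for `getRotationMatrix2D` and check where the source corners land. The sketch below is plain C++ without OpenCV, so it only illustrates the arithmetic; `rotationMatrix`, `apply`, and `pivotFor90` are hypothetical helper names, not OpenCV functions. Under these assumptions, the pivot that makes the rotated image fill a `Size(src_height, src_width)` destination is `((W-1)/2, (W-1)/2)` for +90 degrees and `((H-1)/2, (H-1)/2)` for -90 degrees, not the source center.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <utility>

// 2x3 affine matrix stored row by row: {m00, m01, m02, m10, m11, m12}.
using Affine = std::array<double, 6>;

// Mirrors the formula documented for cv::getRotationMatrix2D:
// positive angles rotate counter-clockwise, origin at the top-left corner.
Affine rotationMatrix(double cx, double cy, double angleDeg, double scale) {
    const double kPi = std::acos(-1.0);
    const double a = scale * std::cos(angleDeg * kPi / 180.0);
    const double b = scale * std::sin(angleDeg * kPi / 180.0);
    return {a, b, (1.0 - a) * cx - b * cy,
            -b, a, b * cx + (1.0 - a) * cy};
}

// Forward mapping: where a source pixel (x, y) lands in the destination.
std::pair<double, double> apply(const Affine& m, double x, double y) {
    return {m[0] * x + m[1] * y + m[2],
            m[3] * x + m[4] * y + m[5]};
}

// Hypothetical helper: pivot that maps a srcWidth x srcHeight image exactly
// onto a destination of width srcHeight and height srcWidth.
std::pair<double, double> pivotFor90(int srcWidth, int srcHeight, int direction) {
    assert(srcWidth > 0 && srcHeight > 0);
    const double c = direction > 0 ? (srcWidth - 1) / 2.0    // +90: counter-clockwise
                                   : (srcHeight - 1) / 2.0;  // -90: clockwise
    return {c, c};
}
```

For the 1024x683 image in the question, the source center (512, 341) sends the top-left pixel to (171, 853), which is the shift visible in the output. With the pivot (511.5, 511.5) the same pixel lands at (0, 1023), the bottom-left corner of the 683x1024 destination. In the OpenCV code this would mean passing the pivot as a `Point2f` (`cv::Point` holds integers, so a fractional center gets truncated) and keeping `Size(src_height, src_width)`. For exact 90 degree steps, `transpose` followed by `flip` is another option that avoids interpolation altogether.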