Image matching problem

Hello

I want to match images of banknotes, of which I have photos, against an image taken recently, so that I can determine which kind of banknote I took a photo of.

I load the banknotes into a vector<Mat>, iterate through them, and compare each one to the photo just taken. I used the FLANN-based matching example as a starting point, but the matching it produces has a lot of errors: points are matched utterly wrong, and that messes up my matching process. The images are somewhat similar, since the banknotes share some features, but that shouldn't be the problem, because in the example the points are matched exactly. So my question is: is there an optimal way to match banknotes? Maybe there is a flaw in my matching settings.

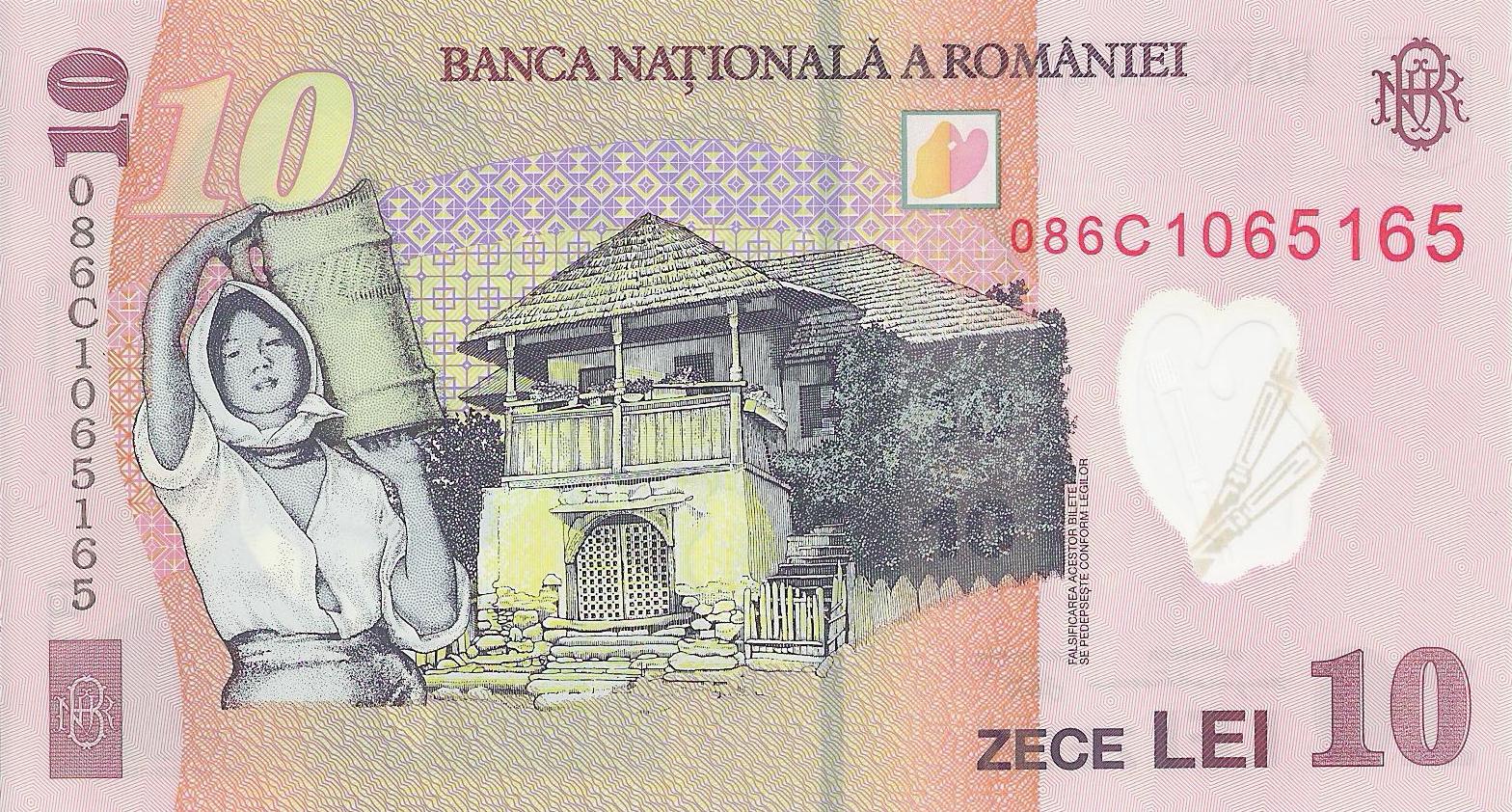

These are some of my sample images:

And these are the two images that I want to compare them to:

With this code I load all my files:

    for(std::set<string>::iterator Name = ListOfFileNames.begin() ; Name != ListOfFileNames.end() ; ++Name)
    {
        string path = "ron/";
        string name = *Name;
        // Skip names of four characters or fewer
        if(name.length()>4)
        {
            path.append(name);
            // Load directly as a single-channel grayscale cv::Mat, so no
            // IplImage has to be released and no colour conversion is needed
            cv::Mat tempImage = cv::imread(path, CV_LOAD_IMAGE_GRAYSCALE);
            if(tempImage.empty())
                continue; // file could not be read as an image
            loadedImages.push_back(tempImage);
            loadedImagesNames.push_back(name);
        }
    }

And with the ORB matcher I compare the new files to the dataset:

    int compareOrb(cv::Mat *img_1t, cv::Mat *img_2t){
        // For ORB the constructor argument is the maximum number of features to keep
        int nFeatures = 800;
        Mat img_1 = *img_1t;
        Mat img_2 = *img_2t;

        //-- Step 1: Detect the keypoints using the ORB detector
        OrbFeatureDetector detector( nFeatures );
        std::vector<KeyPoint> keypoints_1, keypoints_2;
        detector.detect( img_1, keypoints_1 );
        detector.detect( img_2, keypoints_2 );

        //-- Step 2: Calculate descriptors (feature vectors)
        OrbDescriptorExtractor extractor;
        Mat descriptors_1, descriptors_2;
        extractor.compute( img_1, keypoints_1, descriptors_1 );
        extractor.compute( img_2, keypoints_2, descriptors_2 );

        // Nothing to match if either image produced no descriptors
        if( descriptors_1.empty() || descriptors_2.empty() ) return 0;

        //-- Step 3: Matching descriptor vectors using FLANN matcher
        // ORB descriptors are CV_8U; the default FLANN index needs CV_32F
        if(descriptors_1.type()!=CV_32F) {
            descriptors_1.convertTo(descriptors_1, CV_32F);
        }
        if(descriptors_2.type()!=CV_32F) {
            descriptors_2.convertTo(descriptors_2, CV_32F);
        }
        FlannBasedMatcher matcher;
        std::vector< DMatch > matches;
        matcher.match( descriptors_1, descriptors_2, matches );

        //-- Quick calculation of max and min distances between keypoints
        double max_dist = 0; double min_dist = DBL_MAX; // DBL_MAX comes from <cfloat>
        for( size_t i = 0; i < matches.size(); i++ )
        { double dist = matches[i].distance;
          if( dist < min_dist ) min_dist = dist;
          if( dist > max_dist ) max_dist = dist;
        }
        /*
        printf("-- Max dist : %f \n", max_dist );
        printf("-- Min dist : %f \n", min_dist );
        */

        //-- Keep only "good" matches (i.e. whose distance is less than 2*min_dist )
        //-- PS.- radiusMatch can also be used here.
        std::vector< DMatch > good_matches;
        for( size_t i = 0; i < matches.size(); i++ )
        { if( matches[i].distance < 2*min_dist )
          { good_matches.push_back( matches[i]); }
        }

        //-- Draw only "good" matches
        Mat img_matches;
        drawMatches( img_1, keypoints_1, img_2, keypoints_2,
                     good_matches, img_matches, Scalar::all(-1), Scalar::all(-1),
                     vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS );

        //-- Show detected matches
        imshow( "Good Matches", img_matches );
        for( size_t i = 0; i < good_matches.size(); i++ )
        { printf( "-- Good Match [%d] Keypoint 1: %d -- Keypoint 2: %d \n", (int)i, good_matches[i].queryIdx, good_matches[i].trainIdx ); }
        waitKey(0);

        return (int)good_matches.size();
    }

And with this loop I check them all:

    int best = 0;
    int bestId = -1;
    int t;
    for(int i=0;i<loadedImages.size();i++)
    {
        t =compareOrb(&loadedImages.at(i),&image5);
        //t=compareSurf ...
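The loop above is cut off in the post. As a minimal sketch of how the best candidate could be picked once every reference image has a score, assuming the score is the good-match count returned by compareOrb and that a higher count means a better match, something like the following might work. `pickBestMatch` and `minGoodMatches` are hypothetical names for illustration and do not come from the original code.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper: returns the index of the highest score in `scores`,
// or -1 if no score reaches `minGoodMatches` (including when `scores` is empty).
// Ties are resolved in favour of the earliest index.
int pickBestMatch(const std::vector<int>& scores, int minGoodMatches) {
    int best = minGoodMatches - 1;  // a candidate must beat this to be accepted
    int bestId = -1;
    for (std::size_t i = 0; i < scores.size(); ++i) {
        if (scores[i] > best) {
            best = scores[i];
            bestId = static_cast<int>(i);
        }
    }
    return bestId;
}
```

With the variables from the post, `scores` would hold one compareOrb result per entry of loadedImages, and the returned index would select the matching entry of loadedImagesNames. Returning -1 below a minimum count is one way to avoid reporting a banknote when nothing matches well.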

I don't know the time constraints of your application, but you could, for example, switch (temporarily) to the BruteForce method and set the crossCheck flag, so that you only keep pairs of features that match each other reciprocally. This may help to see whether there is a problem in your code. Also, if you can share the part of the code where you do the matching, it would help to see whether everything is done correctly. Here is the documentation for the BruteForce approach: http://docs.opencv.org/modules/features2d/doc/common_interfaces_of_descriptor_matchers.html#bfmatcher-bfmatcher
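To make the crossCheck suggestion concrete, here is a minimal sketch in plain C++ (no OpenCV calls) of what reciprocal brute-force matching does on binary descriptors compared with Hamming distance. The `Descriptor` type and the helper names are made up for illustration, and the 32-byte size assumes ORB's default descriptor length. In OpenCV itself this roughly corresponds to constructing `cv::BFMatcher(cv::NORM_HAMMING, true)` and giving it the original 8-bit ORB descriptors instead of ones converted to CV_32F.

```cpp
#include <array>
#include <bitset>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <utility>
#include <vector>

// One 256-bit binary descriptor (assumed size: 32 bytes, as ORB produces by default).
typedef std::array<std::uint8_t, 32> Descriptor;

// Hamming distance: number of bit positions in which a and b differ.
int hammingDistance(const Descriptor& a, const Descriptor& b) {
    int dist = 0;
    for (std::size_t i = 0; i < a.size(); ++i)
        dist += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    return dist;
}

// Index of the descriptor in `set` closest to `d`, or -1 if `set` is empty.
// On ties the earliest index wins.
int nearest(const Descriptor& d, const std::vector<Descriptor>& set) {
    int best = -1;
    int bestDist = std::numeric_limits<int>::max();
    for (std::size_t j = 0; j < set.size(); ++j) {
        int dist = hammingDistance(d, set[j]);
        if (dist < bestDist) {
            bestDist = dist;
            best = static_cast<int>(j);
        }
    }
    return best;
}

// Cross-check: keep the pair (i, j) only if train[j] is the nearest neighbour
// of query[i] AND query[i] is the nearest neighbour of train[j].
std::vector<std::pair<int, int> > crossCheckedMatches(
        const std::vector<Descriptor>& query,
        const std::vector<Descriptor>& train) {
    std::vector<std::pair<int, int> > matches;
    for (std::size_t i = 0; i < query.size(); ++i) {
        int j = nearest(query[i], train);
        if (j >= 0 && nearest(train[j], query) == static_cast<int>(i))
            matches.push_back(std::make_pair(static_cast<int>(i), j));
    }
    return matches;
}
```

Because a pair survives only when both sides pick each other, one-sided matches are dropped, which tends to remove many of the obviously wrong correspondences at the cost of fewer matches overall.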

In order to give you good advice, we will need to see some of the images you are trying to match. Hundreds of matching approaches exist, but an approach that is good for one problem may be completely inappropriate for another.

I have added the images and the code.