How to prune lines detected by HoughLinesP?

I am trying to detect a quadrilateral and then apply a perspective correction, following http://opencv-code.com/tutorials/automatic-perspective-correction-for-quadrilateral-objects/ and using the probabilistic Hough transform.

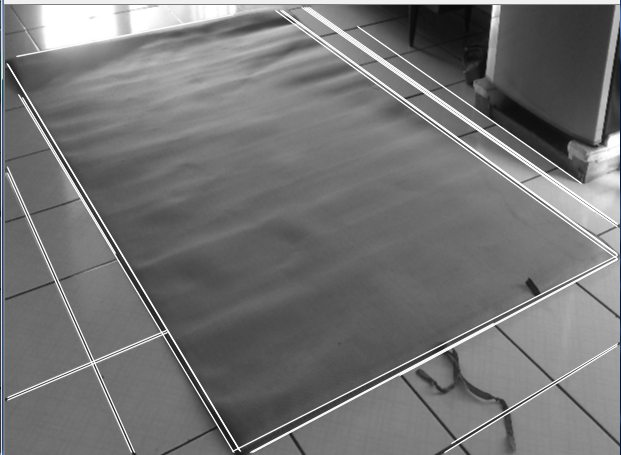

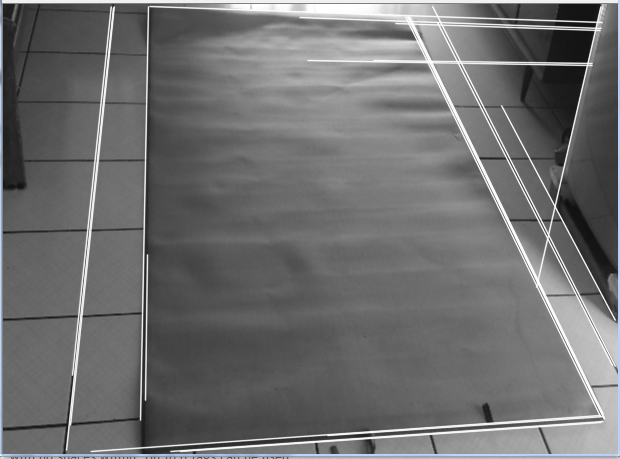

I only need 4 lines, but I end up with extra ones (as you can see in the pictures).

I deliberately photographed the object of interest among other square objects, to see whether my program is adaptive.

I tried playing with the threshold and the other parameters, but these 2 images are the best I could get.

Can anyone help me prune every line except the 4 that I need (the ones that make up the quadrilateral)? Thanks.

    #include <opencv2/opencv.hpp>
    #include <vector>

    using namespace cv;
    using namespace std;

    int main()
    {
        // initialize the mats and variables
        cv::Mat bw, rsz, roi, dst, cannyimg;
        int t1min = 109;

        // we want to adjust the size to 800x600
        Size size(800, 600); // the dst image size, e.g. 800x600

        // load the image
        cv::Mat src = cv::imread("D:\\image\\perspective.jpg");
        if (src.empty())
            return -1;

        // create the window and its trackbar once, before the loop
        cvNamedWindow("houghlinep", CV_WINDOW_AUTOSIZE);
        cvCreateTrackbar("t1min", "houghlinep", &t1min, 260, NULL);

        while (1)
        {
            // keep the Hough accumulator threshold at 10 or above
            if (t1min <= 10)
                t1min = 10;

            // resize image
            resize(src, rsz, size);

            // adjust the image boundary (rows 110..559, columns 70..684)
            roi = rsz(cv::Range(110, 560), cv::Range(70, 685));

            // make the image grayscale to make it easier to canny
            cv::cvtColor(roi, bw, CV_BGR2GRAY);

            // blur it to make it easier to canny
            cv::blur(bw, bw, cv::Size(2, 2));

            // edge detect with canny operator
            cv::Canny(bw, dst, 50, 100, 3);

            // copy the result to cannyimg
            dst.copyTo(cannyimg);

            // detect segments: rho = 1 px, theta = 1 degree, threshold = t1min,
            // minLineLength = 140, maxLineGap = 80
            vector<Vec4i> lines;
            HoughLinesP(dst, lines, 1, CV_PI / 180, t1min, 140, 80);

            // draw the lines on the grayscale image
            for (size_t i = 0; i < lines.size(); i++)
            {
                Vec4i l = lines[i];
                line(bw, Point(l[0], l[1]), Point(l[2], l[3]), CV_RGB(0, 255, 255), 1, CV_AA);
            }

            /* extend each segment to the left and right image borders
               (disabled; the slope is undefined for vertical segments, where l[0] == l[2])
            for (size_t i = 0; i < lines.size(); i++)
            {
                cv::Vec4i l = lines[i];
                lines[i][0] = 0;
                lines[i][1] = ((float)l[1] - l[3]) / (l[0] - l[2]) * -l[0] + l[1];
                lines[i][2] = bw.cols;
                lines[i][3] = ((float)l[1] - l[3]) / (l[0] - l[2]) * (bw.cols - l[2]) + l[3];
            }*/

            // show the images
            cv::imshow("canny", cannyimg);
            cv::imshow("houghlinep", bw);
            cv::imshow("yeah", roi);

            // poll the keyboard; Esc (27) quits, otherwise loop again to pick up trackbar changes
            if ((cvWaitKey(10) & 255) == 27)
                break;
        }

        return 0;
    }
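
To make the kind of pruning I am asking about concrete, here is a rough sketch of one possible heuristic. It is not taken from the tutorial and I have not verified it on the images above. It assumes the object of interest has the longest edges in the frame and that its sides are roughly axis-aligned. The names `Segment`, `pickTwo`, `pruneToQuad` and the `minGap` parameter are all hypothetical; `Segment` is a plain stand-in for `cv::Vec4i` so the snippet compiles without OpenCV.

```cpp
#include <algorithm>
#include <array>
#include <cmath>
#include <cstdlib>
#include <vector>

// Hypothetical stand-in for cv::Vec4i: {x1, y1, x2, y2}.
using Segment = std::array<int, 4>;

// Euclidean length of a segment.
static double segLength(const Segment& s) {
    return std::hypot(double(s[2] - s[0]), double(s[3] - s[1]));
}

// True when the segment is closer to horizontal than to vertical.
static bool isHorizontalish(const Segment& s) {
    return std::abs(s[2] - s[0]) >= std::abs(s[3] - s[1]);
}

// Midpoint coordinate measured across the segment's direction:
// y for near-horizontal segments, x for near-vertical ones.
static double crossPos(const Segment& s, bool horizontal) {
    return horizontal ? (s[1] + s[3]) / 2.0 : (s[0] + s[2]) / 2.0;
}

// From one orientation group, keep up to two segments, longest first,
// skipping any candidate closer than minGap to one already kept
// (this drops near-duplicate detections of the same edge).
static std::vector<Segment> pickTwo(std::vector<Segment> group,
                                    bool horizontal, double minGap) {
    std::sort(group.begin(), group.end(),
              [](const Segment& a, const Segment& b) {
                  return segLength(a) > segLength(b);
              });
    std::vector<Segment> kept;
    for (const Segment& s : group) {
        bool farEnough = true;
        for (const Segment& k : kept) {
            if (std::fabs(crossPos(s, horizontal) - crossPos(k, horizontal)) < minGap)
                farEnough = false;
        }
        if (farEnough) kept.push_back(s);
        if (kept.size() == 2) break;
    }
    return kept;
}

// Returns at most four segments: up to two near-horizontal ones
// followed by up to two near-vertical ones.
std::vector<Segment> pruneToQuad(const std::vector<Segment>& lines, double minGap) {
    std::vector<Segment> horiz, vert;
    for (const Segment& s : lines)
        (isHorizontalish(s) ? horiz : vert).push_back(s);

    std::vector<Segment> result = pickTwo(horiz, true, minGap);
    std::vector<Segment> v = pickTwo(vert, false, minGap);
    result.insert(result.end(), v.begin(), v.end());
    return result;
}
```

With the program above, each `Vec4i l` from `HoughLinesP` would be copied into a `Segment{l[0], l[1], l[2], l[3]}` before calling `pruneToQuad(segments, minGap)`. The value of `minGap` (in pixels) would need tuning, and the heuristic will probably pick the wrong edges when a background square has longer edges than the target, or when the target is rotated close to 45 degrees.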