OpenGL: triangulate points

Hello,

Today we're doing SfM (structure from motion) and we want to draw the result in OpenGL.

First we calculate optical flow using Farneback. This gives us two sets of corresponding 2D points, one per image.

We then call undistortPoints with the camera matrix and the distortion coefficients to tidy the points up.

```cpp
/* Undistort the points based on intrinsic params and dist coeff */
cv::undistortPoints( left_points, left_points, cam_matrix, dist_coeff );
cv::undistortPoints( right_points, right_points, cam_matrix, dist_coeff );
```

Using these two sets of points, we estimate the fundamental matrix and derive the essential matrix from it.

```cpp
/* Estimate the fundamental matrix from the points, then derive the essential matrix */
cv::Mat fundamental = cv::findFundamentalMat( left_points, right_points, CV_FM_RANSAC, 3.0, 0.99 );
cv::Mat essential = cam_matrix.t() * fundamental * cam_matrix;
```
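For reference, the relations behind this step can be written out as below. The symbols are introduced here for illustration and do not come from the code: $x$ and $x'$ are corresponding left and right pixel points in homogeneous form, $K$ is `cam_matrix`, and $\hat{x} = K^{-1}x$ are normalized coordinates. One thing that may be worth checking: as far as I know, `cv::undistortPoints` called without its optional `P` argument already returns normalized coordinates. If that is the case here, the matrix estimated from those points would already play the role of $E$, and the RANSAC threshold of 3.0 would be in normalized units rather than pixels.

```latex
x'^{\top} F \, x = 0, \qquad
E = K^{\top} F \, K, \qquad
\hat{x}'^{\top} E \, \hat{x} = 0
```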

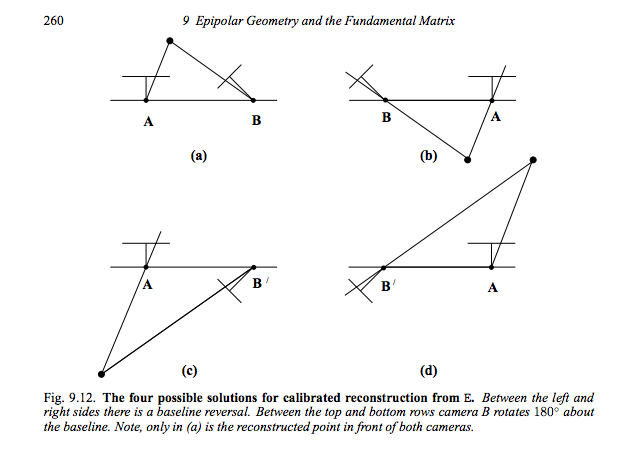

Applying SVD to the essential matrix yields two candidate rotation matrices and two candidate translation vectors.

```cpp
/* Find the projection matrix between those two images */
cv::SVD svd( essential );

static const cv::Mat W = (cv::Mat_<double>(3, 3) <<
    0, -1, 0,
    1,  0, 0,
    0,  0, 1);
static const cv::Mat W_inv = W.inv();

cv::Mat_<double> R1 = svd.u * W * svd.vt;
cv::Mat_<double> T1 = svd.u.col( 2 );

cv::Mat_<double> R2 = svd.u * W_inv * svd.vt;
cv::Mat_<double> T2 = -svd.u.col( 2 );
```

R1 and T1 are used to create the second projection matrix.

```cpp
static const cv::Mat P1 = cv::Mat::eye(3, 4, CV_64FC1 );

cv::Mat P2 = ( cv::Mat_<double>(3, 4) <<
    R1(0, 0), R1(0, 1), R1(0, 2), T1(0),
    R1(1, 0), R1(1, 1), R1(1, 2), T1(1),
    R1(2, 0), R1(2, 1), R1(2, 2), T1(2));
```
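A note on the choice of R1 and T1: the SVD gives four candidate poses, (R1, T1), (R1, T2), (R2, T1) and (R2, T2), and the usual way to pick one is to keep the pair for which triangulated points have positive depth in both cameras. A valid rotation must also have determinant +1. The sketch below shows those two checks in plain C++ with no OpenCV types. The names `Vec3`, `Mat3`, `determinant` and `inFrontOfBothCameras` are made up for this illustration, and `X` is assumed to be a triangulated point expressed in the first camera's frame.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Determinant of a 3x3 matrix. A proper rotation has determinant +1;
// a value of -1 means the candidate is a reflection, not a rotation.
double determinant(const Mat3& M) {
    return M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
         - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
         + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]);
}

// Depth of X as seen from the second camera: the z component of R * X + t.
double depthInSecondCamera(const Mat3& R, const Vec3& t, const Vec3& X) {
    return R[2][0] * X[0] + R[2][1] * X[1] + R[2][2] * X[2] + t[2];
}

// X is given in the first camera's frame, so its depth there is simply X[2].
// A candidate (R, t) is plausible when the point is in front of both cameras.
bool inFrontOfBothCameras(const Mat3& R, const Vec3& t, const Vec3& X) {
    return X[2] > 0.0 && depthInSecondCamera(R, t, X) > 0.0;
}
```

In practice one would triangulate with each of the four candidates and keep the one that passes this test for most points. Newer OpenCV versions also provide `cv::recoverPose`, which performs this selection.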

Then we triangulate the points.

```cpp
cv::Mat out;
cv::triangulatePoints( P1, P2, left_points, right_points, out );
```

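We can then convert the homogeneous points to 3D points. Since P1 is [I | 0], I believe they are expressed in the first camera's coordinate frame, with its optical centre at the origin.

```cpp
/* triangulatePoints returns a 4xN matrix: each column of 'out' is one
   homogeneous 3D point. Transpose so that each row is one point, then
   divide by the fourth coordinate to get 3D points. */
std::vector<cv::Point3f> pt_3d;
cv::Mat points_4d = out.t();
cv::convertPointsFromHomogeneous( points_4d, pt_3d );
```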
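To make the conversion concrete, here is a minimal sketch of what going from homogeneous to 3D points amounts to: divide x, y and z by the fourth coordinate w. It is plain C++ and not OpenCV's implementation. `Point3` and `fromHomogeneous` are names invented for this example, and skipping points with w near zero is a choice made here, not necessarily what the library does.

```cpp
#include <array>
#include <cmath>
#include <vector>

struct Point3 { double x, y, z; };

// Convert homogeneous points (x, y, z, w) to 3D points by dividing by w.
// Points whose w is (almost) zero lie at infinity and are skipped.
std::vector<Point3> fromHomogeneous(const std::vector<std::array<double, 4>>& pts,
                                    double eps = 1e-12) {
    std::vector<Point3> out;
    out.reserve(pts.size());
    for (const auto& p : pts) {
        if (std::abs(p[3]) < eps) continue;  // no finite 3D point for this one
        out.push_back({p[0] / p[3], p[1] / p[3], p[2] / p[3]});
    }
    return out;
}
```

For example, the homogeneous point (2, 4, 6, 2) becomes the 3D point (1, 2, 3).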

Using OpenGL we can draw points.

Here are a number of questions that I am still having difficulty with.

- What are R2 and T2? The rotation from P2 back to P1? The inverse rotation?

- How can I use these values in OpenGL?

Since we now have P1 and P2, we can decompose these projection matrices to get Euler angles. An old tutorial on POSIT mentioned that Euler angles are important for OpenGL.

cv::decomposeProjectionMatrix(InputArray projMatrix, OutputArray cameraMatrix, OutputArray rotMatrix, OutputArray transVect, OutputArray rotMatrixX=noArray(), OutputArray rotMatrixY=noArray(), OutputArray rotMatrixZ=noArray(), OutputArray eulerAngles=noArray() )
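As a possible alternative to Euler angles, a rotation and translation can be packed directly into the 4x4 column-major matrix that OpenGL expects. The sketch below assumes the usual conventions: the OpenCV camera looks along +z with y pointing down, and the OpenGL camera looks along -z with y pointing up, so the y and z rows are negated. `toOpenGLView`, `Mat3` and `Vec3` are names made up for this example, and [R | t] is assumed to map world points into the camera frame.

```cpp
#include <array>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Build a column-major 4x4 view matrix from [R | t].
// Rows 1 and 2 are negated to go from the OpenCV camera convention
// (y down, z forward) to the OpenGL one (y up, z backward).
std::array<float, 16> toOpenGLView(const Mat3& R, const Vec3& t) {
    const double flip[3] = {1.0, -1.0, -1.0};
    std::array<float, 16> m{};  // zero-initialised
    for (int row = 0; row < 3; ++row) {
        for (int col = 0; col < 3; ++col) {
            // Column-major storage: element (row, col) lives at col * 4 + row.
            m[col * 4 + row] = static_cast<float>(flip[row] * R[row][col]);
        }
        m[12 + row] = static_cast<float>(flip[row] * t[row]);
    }
    m[15] = 1.0f;
    return m;
}
```

With the legacy fixed-function pipeline the result could be passed to `glLoadMatrixf(m.data())` while `GL_MODELVIEW` is the current matrix mode. With shaders it could be uploaded through `glUniformMatrix4fv` with the transpose flag set to `GL_FALSE`.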

- Should I be using the Rodrigues transform here? What does it do?

"In the theory of three-dimensional rotation, Rodrigues' rotation formula (named after Olinde Rodrigues) is an efficient algorithm for rotating a vector in space, given an axis and angle of rotation. By extension, this can be used to transform all three basis vectors to compute a rotation matrix from an axis–angle representation. In other words, the Rodrigues formula provides an algorithm to compute the exponential map from so(3) to SO(3) without computing the full matrix exponent."
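To illustrate the quoted formula, here is a small sketch that turns a rotation vector (unit axis multiplied by the angle in radians) into a 3x3 rotation matrix using R = cos(θ) I + (1 - cos(θ)) k kᵀ + sin(θ) [k]×. It is plain C++ written for this example, not OpenCV's code. OpenCV's `cv::Rodrigues` performs this conversion, and the reverse one, on `cv::Mat` inputs. The names `rodrigues`, `Vec3` and `Mat3` are invented here.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Rotation vector r = angle * unit_axis  ->  3x3 rotation matrix.
Mat3 rodrigues(const Vec3& r) {
    Mat3 R = {{{1.0, 0.0, 0.0}, {0.0, 1.0, 0.0}, {0.0, 0.0, 1.0}}};
    const double theta = std::sqrt(r[0] * r[0] + r[1] * r[1] + r[2] * r[2]);
    if (theta < 1e-12) return R;  // zero rotation: identity

    const Vec3 k = {r[0] / theta, r[1] / theta, r[2] / theta};  // unit axis
    const double c = std::cos(theta);
    const double s = std::sin(theta);

    // Cross-product (skew-symmetric) matrix of the axis k.
    const Mat3 K = {{{0.0, -k[2], k[1]}, {k[2], 0.0, -k[0]}, {-k[1], k[0], 0.0}}};

    for (int i = 0; i < 3; ++i) {
        for (int j = 0; j < 3; ++j) {
            const double identity = (i == j) ? 1.0 : 0.0;
            R[i][j] = c * identity + (1.0 - c) * k[i] * k[j] + s * K[i][j];
        }
    }
    return R;
}
```

As a sanity check, a rotation of 90 degrees about the z axis, i.e. r = (0, 0, π/2), gives the same matrix as the W used in the SVD step above, up to rounding.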

Some markup can do wonders :)

@StevenPuttemans I don't understand, markup?

I formatted your code :) you forgot to use the standard markup for topics ;)

@StevenPuttemans thank you.