Stereo calibration baseline in meters

Hi, I calibrated my stereo webcam with a chessboard and:

stereoCalibrate(object_points, imagePoints1, imagePoints2, CM1, D1, CM2, D2, img1.size(), R, T, E, F, cvTermCriteria(CV_TERMCRIT_ITER+CV_TERMCRIT_EPS, 100, 1e-5), CV_CALIB_SAME_FOCAL_LENGTH | CV_CALIB_ZERO_TANGENT_DIST);

I need to know the baseline of the stereo camera in meters, so I looked at the T vector. Its first element is -1.6952669833501108e+00, but when I physically measure the distance between the sensors with a ruler, I get approximately 4 cm, i.e. 0.04 m. What units are the elements of T in? Also, I don't know the physical size of the pixels, because I don't know which sensor is used.
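A note on units, as far as I know: `stereoCalibrate` reports T in the same units used for the chessboard corner coordinates in `object_points`, so the pixel size of the sensor does not enter into it. If the corners were generated with a spacing of 1 (one unit per square), T comes out in chessboard squares. A minimal sketch of the conversion in plain C++ (no OpenCV types), where `square_size_m` is a hypothetical name for the ruler-measured side of one square:

```cpp
#include <array>
#include <cmath>

// Length of the translation vector T, in the same units as object_points.
double baseline_in_object_units(const std::array<double, 3>& T) {
    return std::sqrt(T[0] * T[0] + T[1] * T[1] + T[2] * T[2]);
}

// Convert to meters, ASSUMING object_points used one unit per chessboard
// square. square_size_m is a hypothetical, ruler-measured value.
double baseline_in_meters(const std::array<double, 3>& T, double square_size_m) {
    return baseline_in_object_units(T) * square_size_m;
}
```

With the T from this post the length is about 1.695 units, so a square side near 2.36 cm would reproduce the 4 cm ruler measurement. That square size is inferred from the ratio, not stated anywhere in the post. Building `object_points` in meters in the first place should make T come out in meters directly.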

    %YAML:1.0
    CM1: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 6.7035296112450442e+02, 0., 3.0366775716427702e+02, 0.,
           6.7334702239218382e+02, 2.3446212435423504e+02, 0., 0., 1. ]
    CM2: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 6.7035296112450442e+02, 0., 3.0235229497623436e+02, 0.,
           6.7334702239218382e+02, 2.3428252297251004e+02, 0., 0., 1. ]
    D1: !!opencv-matrix
       rows: 1
       cols: 5
       dt: d
       data: [ -2.7243204402554655e-01, 6.7461566940160000e-01, 0., 0.,
           -1.1338340048806972e+00 ]
    D2: !!opencv-matrix
       rows: 1
       cols: 5
       dt: d
       data: [ -2.9489669516506523e-01, 8.7704813783022018e-01, 0., 0.,
           -1.7809117056153765e+00 ]
    R: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 9.9994998218891662e-01, 1.8032584564876427e-03,
           -9.8377527578461001e-03, -1.7978260373390045e-03,
           9.9999822653927839e-01, 5.6101678905229378e-04,
           9.8387469692470843e-03, -5.4330216016358439e-04,
           9.9995145076190473e-01 ]
    T: !!opencv-matrix
       rows: 3
       cols: 1
       dt: d
       data: [ -1.6952669833501108e+00, -8.3183862355057793e-04,
           1.6553031717676806e-02 ]
    E: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 2.1575221682576832e-05, -1.6552550421804146e-02,
           -8.4108476712251815e-04, 3.3231506665764049e-02,
           -8.9119281968270958e-04, 1.6950218347962700e+00,
           3.8795921397114311e-03, -1.6952624768406708e+00,
           -9.5925666229836515e-04 ]
    F: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 6.5486525518936608e-08, -5.0017984753281888e-05,
           9.9960823121244876e-03, 1.0041793870914565e-04,
           -2.6810044647830857e-06, 3.4036589992213000e+00,
           -1.5652165011118380e-02, -3.4182603874751876e+00,
           9.9999999999999989e-01 ]
    R1: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 9.9980525157248468e-01, 2.2991356175347437e-03,
           -1.9600329167972070e-02, -2.2937864080306968e-03,
           9.9999732564029009e-01, 2.9539157402834107e-04,
           1.9600955894930411e-02, -2.5037507834536546e-04,
           9.9980785145963180e-01 ]
    R2: !!opencv-matrix
       rows: 3
       cols: 3
       dt: d
       data: [ 9.9995221263036305e-01, 4.9065951283155365e-04,
           -9.7637958235422401e-03, -4.9332403320461707e-04,
           9.9999984173287226e-01, -2.7049145825487527e-04,
           9.7636615590471869e-03, 2.7529524731464261e-04,
           9.9995229642492811e-01 ]
    P1: !!opencv-matrix
       rows: 3
       cols: 4
       dt: d
       data: [ 6.0327398477959753e+02, 0., 3.0294886016845703e+02, 0., 0.,
           6.0327398477959753e+02, 2.3173668479919434e+02, 0., 0., 0., 1.,
           0. ]
    P2: !!opencv-matrix
       rows: 3
       cols: 4
       dt: d
       data: [ 6.0327398477959753e+02, 0., 3.0294886016845703e+02,
           -1.0227593432896966e+03, 0., 6.0327398477959753e+02,
           2.3173668479919434e+02, 0., 0., 0., 1., 0. ]
    Q: !!opencv-matrix
       rows: 4
       cols: 4
       dt: d
       data: [ 1., 0., 0., -3.0294886016845703e+02, 0., 1., 0.,
           -2.3173668479919434e+02, 0., 0., 0., 6.0327398477959753e+02, 0.,
           0., 5.8984939980032047e-01, 0. ]

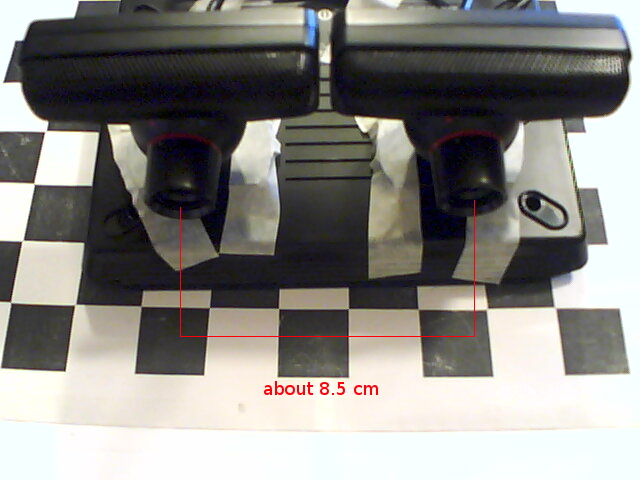

I also calibrated another stereo rig, with a baseline of about 8.5 cm. In this case the computed baseline is -3.5152250398995970e+00:

    T: !!opencv-matrix
       rows: 3
       cols: 1
       dt: d
       data: [ -3.5152250398995970e+00, -3.3355285984050492e-02,
           -1.1137642414481164e-01 ]

How is that possible?
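One way to sanity-check this: if T is simply expressed in chessboard-square units (which is what happens when `object_points` are built with a spacing of 1), both rigs should imply roughly the same physical size per unit. A small sketch of that arithmetic, using only the values quoted in this thread and the approximate ruler measurements (the function name is hypothetical):

```cpp
#include <cmath>

// Implied size of one calibration unit in meters: the ruler-measured
// baseline divided by the magnitude of the reported T[0].
double implied_unit_m(double measured_baseline_m, double reported_tx) {
    return measured_baseline_m / std::fabs(reported_tx);
}
```

For the first rig, 0.04 / 1.6953 is about 0.0236 m, and for the second, 0.085 / 3.5152 is about 0.0242 m. Both point at a unit of roughly 2.4 cm, which would fit a chessboard with squares of about that size, assuming the same board was used for both rigs.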

Hi, I got a Q matrix similar to yours. My last two elements are 8.5807419247084848e-002 and 0. The baseline is exactly what I have. But one thing bothering me is the 0: when I use the disparity map and the Q matrix with reprojectImageTo3D, the resulting point cloud looks like a pyramid, with most points gathered at the top, and I can't make out anything in it. Have you run into this before? Thanks! I've been stuck on this problem for so long...