warpPerspective() to a different origin [SOLVED]

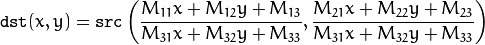

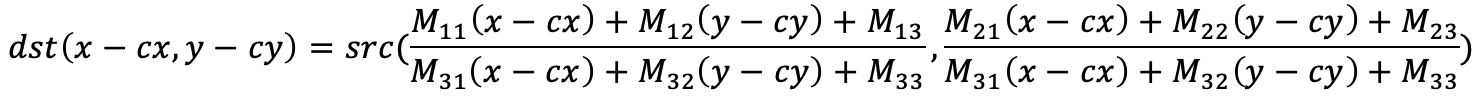

I need to apply a perspective transformation about a pixel that is not the origin of the warped image. The warpPerspective() method applies:

But I need to apply the following centered perspective transformation:

The perspective transformation that I need to apply is the following ...

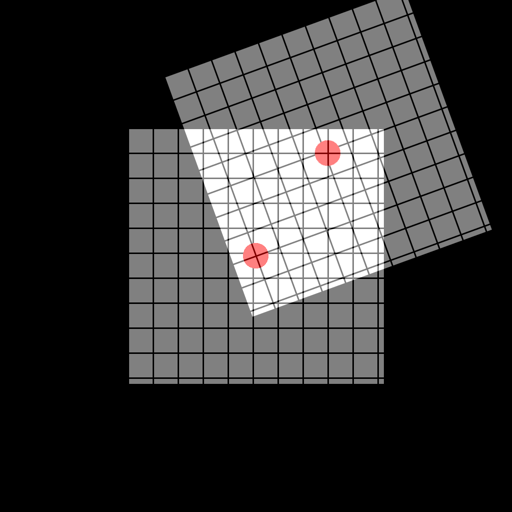

Here it is applied to the center of the square image, (cx,cy)=(0.5,0.5). But if I warp the image from (cx,cy)=(0,0), there is a translation error, as in the following example ...
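A sketch of where that translation error comes from. This assumes H is the 3×3 homography that warpPerspective() applies about the origin and that c = (c_x, c_y) is the desired center in the same coordinates. Conjugating H by a translation moves its fixed point from the origin to c. For a pure rotation R, the centered and origin-based results then differ only by the constant offset (I - R)c. The 90° rotation used below is a hypothetical illustration, not taken from the figures:

```latex
T(c) = \begin{pmatrix} 1 & 0 & c_x \\ 0 & 1 & c_y \\ 0 & 0 & 1 \end{pmatrix},
\qquad
H_c = T(c)\, H\, T(c)^{-1} = T(c)\, H\, T(-c)

R\,(p - c) + c = R\,p + (I - R)\,c,
\qquad
R = \begin{pmatrix} 0 & -1 \\ 1 & 0 \end{pmatrix},\;
c = \begin{pmatrix} 0.5 \\ 0.5 \end{pmatrix}
\;\Rightarrow\;
(I - R)\,c = \begin{pmatrix} 0.5 - (-0.5) \\ 0.5 - 0.5 \end{pmatrix}
           = \begin{pmatrix} 1 \\ 0 \end{pmatrix}
```

For a general homography (non-zero perspective row), the same conjugation T(c) H T(-c) still centers the transform, but the difference from the origin-based warp is then no longer a pure translation.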


The perspective transformation (here, a rotation) must be applied about the center of the warped image. How can I use OpenCV to perform such a centered perspective transform in C++? Thanks for your help.

Best regards,

Dr. F. Le Coat CNRS / Paris / France