OpenCV getPerspectiveTransform method gives unclear result

I am trying to remove the perspective from an image; in other words, I want a bird's-eye view of a 2D image so that it is easy to calculate the distance between two points in it. The cv2.warpPerspective method of OpenCV requires the size of the final image as a parameter. That size is unknown in advance, and passing a fixed size crops the warped image. So I calculate where the corner points of the source image land, using the homography from cv2.getPerspectiveTransform, and compute a new homography from these points. Warping with this new homography matrix and the now-known final image size gives me the full image without any cropping. This works for most of my test images but fails strangely on some samples.

Here is what I am doing:

- Get the 4 corner points of a known real-world rectangle from a camera image (the points are ordered TL (Top Left) -> TR (Top Right) -> BR (Bottom Right) -> BL (Bottom Left)).

- Calculate the bounding box of these points and use its corners as the destination for the source points obtained in the step above.

- Calculate the homography matrix from these source and destination points.

- With this matrix, transform the corners of the source image to find the size of the result (warped) image.

- Since I now know where the corners of the source image end up, I calculate a new homography matrix and produce the result image with a known size.
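The steps above can be sketched roughly as follows. This is a NumPy-only illustration under stated assumptions, not the code from this question: `homography_from_4_points` and `full_view_homography` are hypothetical helper names, the first solves the same kind of four-point system that cv2.getPerspectiveTransform solves, and the bounding box is taken with plain min/max rather than cv2.boundingRect.

```python
import numpy as np

def homography_from_4_points(src, dst):
    """Solve for the 3x3 homography H (with H[2, 2] = 1) mapping 4 src points to 4 dst points."""
    A, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h11*x + h12*y + h13) / (h31*x + h32*y + 1), and similarly for v
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.extend([u, v])
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)

def full_view_homography(H, width, height):
    """Map the image corners through H, then build a new homography whose
    output starts at (0, 0) and whose size covers all mapped corners."""
    corners = [(0, 0), (width, 0), (width, height), (0, height)]
    homogeneous = np.array([[x, y, 1.0] for x, y in corners])
    mapped = homogeneous @ H.T
    # Divide by the third coordinate; this sketch does not check its sign.
    xy = mapped[:, :2] / mapped[:, 2:3]
    shifted = xy - xy.min(axis=0)
    size = tuple(int(v) for v in np.ceil(shifted.max(axis=0)))
    new_H = homography_from_4_points(corners, shifted)
    return new_H, size, xy
```

The returned matrix and size are the values that would then be passed on as `cv2.warpPerspective(image, new_H, size)`.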

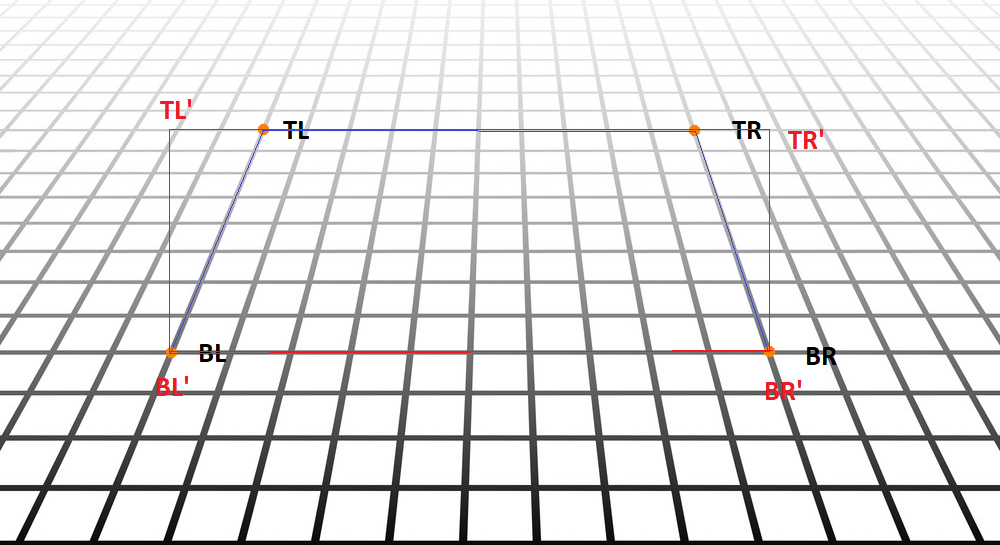

A successful attempt is shown below. The orange points are the corners of a real-world rectangle, and the red rectangle marks the destination points.

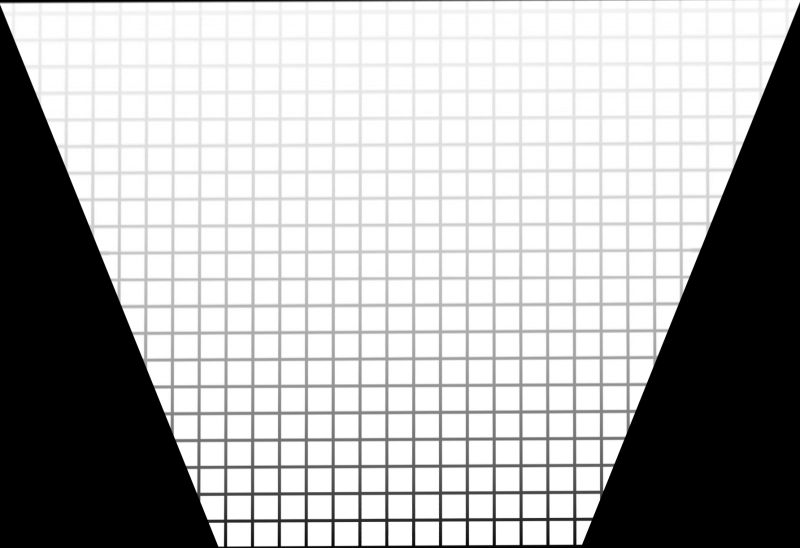

The following image is the result I calculated with the steps above, and it is quite good (it is resized because the original is large).

The new corner positions obtained, which are coherent:

TL:(-385, -308), TR:(1397, -326), BR:(906, 778), BL:(97, 776)

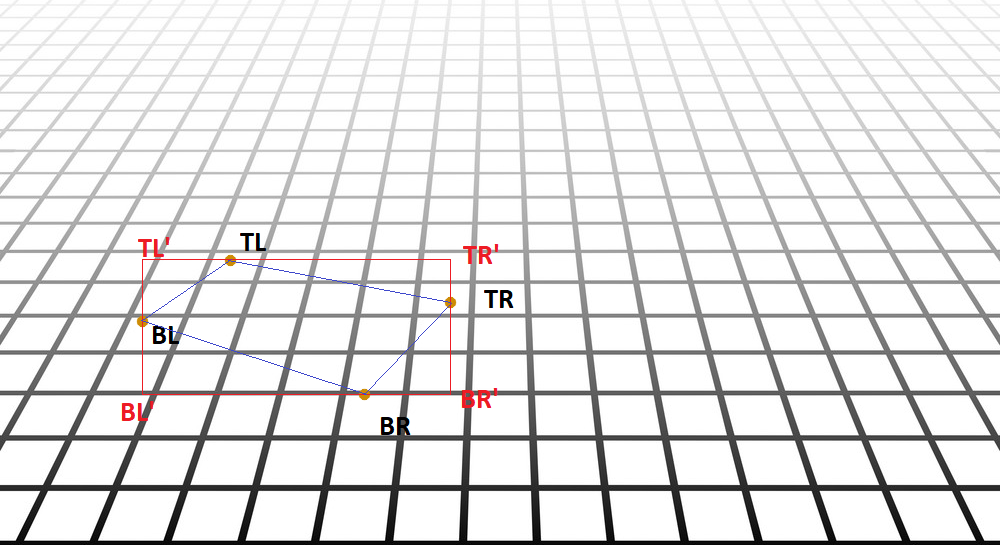

But when I tried other examples, some of them failed, as shown below:

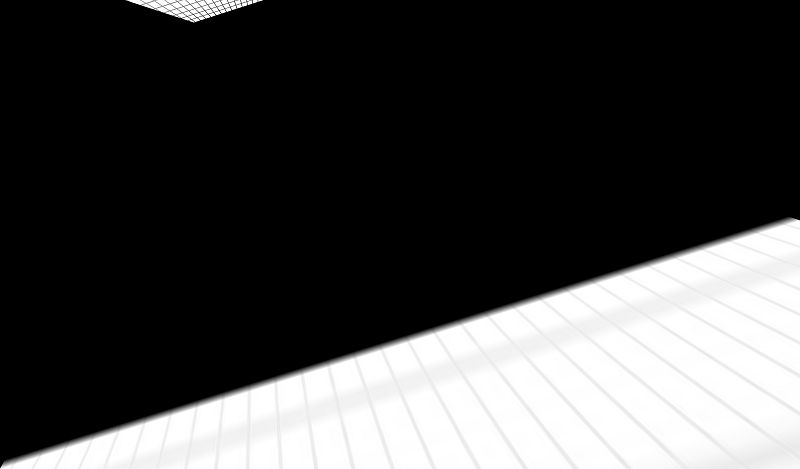

And the resulting new corner points, which do not seem right, are: TL: (5415, 2218), TR: (-1314, 4309), BR: (909, 360), BL: (318, 551)

By the way, the homography matrix seems completely right. I tested it; the problem is with the resulting image. Also, the lines through these points do not intersect inside the image (at a vanishing point), which could otherwise cause this kind of result.
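One way to test the claim about vanishing points numerically is to look at the third homogeneous coordinate w of each image corner under the homography. The sketch below is an assumption-laden illustration, not part of the original code: `corner_w_values` and `horizon_crosses_image` are hypothetical helper names, and `H_example` is a made-up matrix. If the corner w values have mixed signs, the line that maps to infinity passes through the image, and corners on opposite sides of it end up with flipped coordinates after the division by w.

```python
import numpy as np

def corner_w_values(H, width, height):
    """Return w = h31*x + h32*y + h33 for the four image corners (TL, TR, BR, BL)."""
    corners = np.array(
        [[0, 0, 1], [width, 0, 1], [width, height, 1], [0, height, 1]],
        dtype=float,
    )
    return corners @ np.asarray(H, dtype=float)[2]

def horizon_crosses_image(H, width, height):
    """True if some corner has w <= 0 while another has w >= 0, i.e. the
    pre-image of the line at infinity touches or crosses the image rectangle."""
    w = corner_w_values(H, width, height)
    return bool(w.min() <= 0.0 <= w.max())

# Made-up matrix for illustration only: w changes sign between y = 0 and y = 1000.
H_example = np.array([[1.0, 0.0, 0.0],
                      [0.0, 1.0, 0.0],
                      [0.0, -0.002, 1.0]])
print(corner_w_values(H_example, 800, 1000))
print(horizon_crosses_image(H_example, 800, 1000))
```

If the check returns True for the real matrix and image size, the mapped corner coordinates cannot be used directly to size the output image.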

I am leaving the source image and the code snippet to reproduce the result below. There is some small thing I am overlooking, but I have not been able to find it for 2 days. Thanks in advance.

The source image: grid

The code:

    import numpy as np
    import cv2
    import imutils

    def warpImage(image, src_pts):
        height, width = image.shape[:2]
        min_rect = cv2.boundingRect(np.array(src_pts))
        dst = [[min_rect[0], min_rect[1]],
               [min_rect[0] + min_rect[2], min_rect[1]],
               [min_rect[0] + min_rect[2], min_rect[1] + min_rect[3]],
               [min_rect[0], min_rect[1 ...

I don't see anything wrong. What are you attempting to do?