solvePnP and problems with perspective [closed]

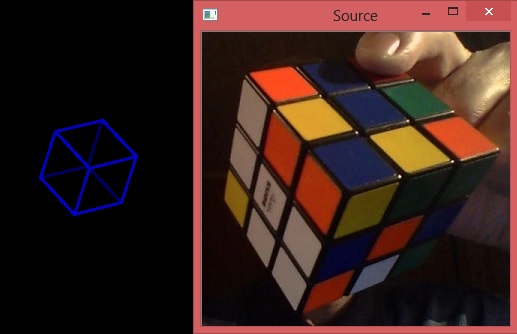

I pass the 8 corners of a cube to solvePnP, but after reprojection the nearest corner sometimes (not for every image) becomes the farthest one and vice versa.

Can anybody explain the reason and how to cope with it?

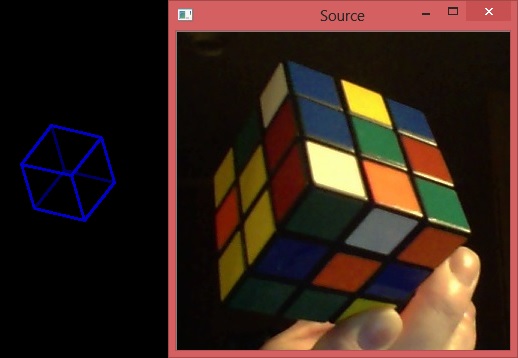

An example of correct case:

The code:

    float box_side_x = 6; //Centimetres
    float box_side_y = 6;
    float box_side_z = 6;
    vector<Point3f> boxPoints;

    //Fill the array of corners in object coordinates. x to right (view from camera), y down, z from camera.
    vector<Vec3d> boxCorners(8);
    Vec3d boxCorner;
    float x, y, z;
    for (int h = 0; h < 2; ++h) {
        for (int j = 0; j < 2; ++j) {
            for (int i = 0; i < 2; ++i) {
                x = box_side_x * i;
                y = box_side_y * j;
                z = box_side_z * h;
                boxPoints.push_back(Point3f(x, y, z)); //For solvePnP()
                boxCorners[i + 2 * j + 4 * h] = {x, y, z}; //For calculating output
            }
        }
    }

    solvePnP(boxPoints, pointBuf, cameraMatrix, distCoeffs, rvec, tvec, false);

    Mat rmat;
    Rodrigues(rvec, rmat);
    Mat Result;
    float S = 1;
    for (int h = 0; h < 2; ++h) {
        for (int j = 0; j < 2; ++j) {
            for (int i = 0; i < 2; ++i) {
                boxCorner = boxCorners[i + 2 * j + 4 * h]; //In centimetres
                Result = S * (rmat * Mat(boxCorner) + tvec);
                ObjPoints[i + 2 * j + 4 * h] = (Vec3f)Result; //In centimetres
            }
        }
    }
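
The second loop above applies the pose from solvePnP to each corner as `rmat * corner + tvec`. As a rough illustration of what that step computes, here is a minimal sketch in plain C++ without OpenCV. The names `rotationFromRvec` and `objectToCamera` are hypothetical helpers, not OpenCV functions; the first uses Rodrigues' formula, which is the conversion `Rodrigues(rvec, rmat)` performs for a rotation vector.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<std::array<double, 3>, 3>;

// Hypothetical helper: convert an axis-angle rotation vector (the form of
// rvec) into a 3x3 rotation matrix with Rodrigues' formula:
//   R = cos(t) I + (1 - cos(t)) k k^T + sin(t) [k]x,  t = |rvec|, k = rvec / t
Mat3 rotationFromRvec(const Vec3& rvec) {
    assert(std::isfinite(rvec[0]) && std::isfinite(rvec[1]) && std::isfinite(rvec[2]));
    const double theta =
        std::sqrt(rvec[0] * rvec[0] + rvec[1] * rvec[1] + rvec[2] * rvec[2]);
    if (theta < 1e-12) {
        // Zero-length vector: no rotation.
        return {{{1.0, 0.0, 0.0}, {0.0, 1.0, 0.0}, {0.0, 0.0, 1.0}}};
    }
    const double kx = rvec[0] / theta, ky = rvec[1] / theta, kz = rvec[2] / theta;
    const double c = std::cos(theta), s = std::sin(theta), v = 1.0 - c;
    return {{{c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s},
             {ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s},
             {kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v}}};
}

// Hypothetical helper: map a point from object coordinates to camera
// coordinates, p_cam = R * p_obj + t.
Vec3 objectToCamera(const Mat3& R, const Vec3& t, const Vec3& p) {
    Vec3 out{};
    for (int r = 0; r < 3; ++r) {
        out[r] = R[r][0] * p[0] + R[r][1] * p[1] + R[r][2] * p[2] + t[r];
    }
    return out;
}
```

For example, a rotation of 90 degrees about Z with a translation of (1, 2, 30) should move the object point (6, 0, 0) to roughly (1, 8, 30) in camera coordinates.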

In a different file, where ObjPoints is passed in as BoxCorners:

    vector<float> X(8), Y(8), Z(8);
    for (int i = 0; i < 8; i++) {
        BoxCorner = BoxCorners[i]; //In centimetres
        X[i] = K * (BoxCorner[0] + Lx);
        Y[i] = K * (BoxCorner[1] + Ly);
        Z[i] = K * (BoxCorner[2] + Lz);
    }

    Scalar color = CV_RGB(0, 0, 200), back_color = CV_RGB(0, 0, 100);
    int thickness = 2;
    namedWindow("Projection", WINDOW_AUTOSIZE);
    Point pt1 = Point(0, 0), pt2 = Point(0, 0);

    pt1 = Point(X[7], Y[7]); pt2 = Point(X[6], Y[6]);
    line(drawing, pt1, pt2, back_color, thickness);
    pt1 = Point(X[7], Y[7]); pt2 = Point(X[5], Y[5]);
    line(drawing, pt1, pt2, back_color, thickness);
    pt1 = Point(X[7], Y[7]); pt2 = Point(X[3], Y[3]);
    line(drawing, pt1, pt2, back_color, thickness);

    pt1 = Point(X[0], Y[0]); pt2 = Point(X[1], Y[1]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[0], Y[0]); pt2 = Point(X[2], Y[2]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[0], Y[0]); pt2 = Point(X[4], Y[4]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[1], Y[1]); pt2 = Point(X[3], Y[3]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[3], Y[3]); pt2 = Point(X[2], Y[2]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[2], Y[2]); pt2 = Point(X[6], Y[6]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[6], Y[6]); pt2 = Point(X[4], Y[4]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[4], Y[4]); pt2 = Point(X[5], Y[5]);
    line(drawing, pt1, pt2, color, thickness);
    pt1 = Point(X[5], Y[5]); pt2 = Point(X[1], Y[1]);
    line(drawing, pt1, pt2, color, thickness);

    imshow("Projection", drawing);
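
The drawing code above maps camera-space X and Y to pixels with a constant scale K and fixed offsets, so the depth of a corner does not change where it is drawn. For comparison, here is a minimal sketch of a pinhole projection, where X and Y are divided by Z so that farther corners move toward the principal point. The `Intrinsics` struct, `projectPinhole`, and the numeric values are hypothetical and only for illustration; real intrinsics would come from cameraMatrix, and lens distortion is ignored here (OpenCV's projectPoints handles the full model).

```cpp
#include <array>
#include <cassert>

using Vec3 = std::array<double, 3>;

struct Pixel {
    double u, v;
};

// Hypothetical container for the focal lengths and principal point that a
// camera matrix holds; the values used below are made up.
struct Intrinsics {
    double fx, fy, cx, cy;
};

// Project a camera-space point with the pinhole model (no distortion):
//   u = fx * X / Z + cx,  v = fy * Y / Z + cy
// Returns false when the point is not in front of the camera (Z <= 0),
// in which case `out` is left untouched.
bool projectPinhole(const Intrinsics& k, const Vec3& p, Pixel& out) {
    assert(k.fx > 0.0 && k.fy > 0.0);
    if (p[2] <= 0.0) {
        return false;
    }
    out.u = k.fx * p[0] / p[2] + k.cx;
    out.v = k.fy * p[1] / p[2] + k.cy;
    return true;
}
```

With the made-up intrinsics fx = fy = 800, cx = 320, cy = 240, a corner at (5, 0, 50) cm lands at u = 400, while the same corner at twice the depth lands at u = 360, closer to the image centre. A corner with non-positive Z is rejected rather than drawn.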

Can you perhaps post the relevant portion of your code, and ideally, one image that causes it, and a very similar one that does not?

Also, check this bug report and see if it is the same: https://github.com/opencv/opencv/issues/8813

Yes, it is something similar. solvePnP outputs the transform from object coordinates (attached to the front corner) to camera coordinates, which I understand as follows: X goes horizontally from left to right as we see it, and Y goes downwards. Then Z should go backwards, behind the screen, so the Z coordinate of the front corner should be negative. In reality, it comes out either negative or positive. The correct case (the second picture) is the one where it is positive. By the way, the object's Z axis points in the opposite direction, away from the camera. Its X axis is also opposite, while its Y axis points the same way, downwards. Relating my question to your link: solvePnP sometimes places the object not in front of the camera but behind it (positive Z).

Correction. There was some confusion about the correspondence between camera coordinates and screen coordinates. As seen from the description, what we see on screen is the turned image from the camera sensor. So the camera X and Y axes are indeed the same as the screen X and Y axes, even though the camera and the user look in opposite directions. Hence the camera Z axis should point towards the object, and normally the Z coordinate of the front corner should be positive. If the object is not rotated, all axes of the camera and object coordinate systems point in the same directions.
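
Following the correction above (camera Z points towards the object, so the front corner should have positive Z), a pose can be sanity-checked by looking at the camera-space Z of every corner. The sketch below assumes the corners have already been transformed into camera coordinates; `allInFrontOfCamera` and `nearestCornerIndex` are hypothetical helper names. It only detects a flipped pose, it does not repair one.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

using Vec3 = std::array<double, 3>;

// True when every camera-space point has positive Z, i.e. lies in front of
// the camera under the convention that camera Z points towards the scene.
bool allInFrontOfCamera(const std::vector<Vec3>& cameraPoints) {
    assert(!cameraPoints.empty());
    for (const Vec3& p : cameraPoints) {
        if (p[2] <= 0.0) {
            return false;
        }
    }
    return true;
}

// Index of the corner closest to the camera (smallest Z). On ties the first
// such corner is returned.
std::size_t nearestCornerIndex(const std::vector<Vec3>& cameraPoints) {
    assert(!cameraPoints.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < cameraPoints.size(); ++i) {
        if (cameraPoints[i][2] < cameraPoints[best][2]) {
            best = i;
        }
    }
    return best;
}
```

If the check fails for an image, or the nearest index is not the corner that is visibly in front, that frame is a candidate for the flipped solution described in the question.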

Your issue seems similar to my question here: https://answers.opencv.org/question/2...

If you have any insight into the direction of the cameraspace z axis, I'd much appreciate it!