I wrote a few blog posts earlier this year on how to do some of this: creating thresholds, Canny edge detection, finding contours, etc.

In case my site ever goes down, here is a bit of example code taken from those pages. But I strongly suggest you read through the posts, if possible, before blindly trying to use the code.

#include <opencv2/opencv.hpp>

int main(void)

{

cv::Mat original_image = cv::imread("capture.jpg", cv::IMREAD_COLOR);

cv::namedWindow("Colour Image", cv::WINDOW_AUTOSIZE);

cv::imshow("Colour Image", original_image);

for (double canny_threshold : { 40.0, 90.0, 140.0 } )

{

cv::Mat canny_output;

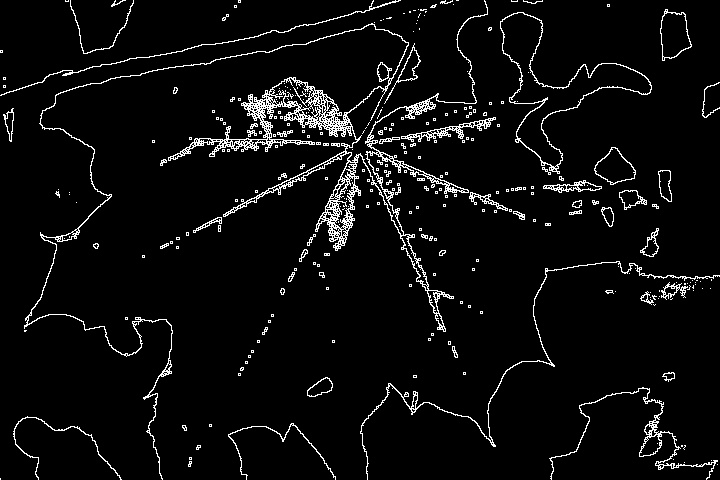

cv::Canny(original_image, canny_output, canny_threshold, 3.0 * canny_threshold, 3, true);

const std::string name = "Canny Output Threshold " + std::to_string((size_t)canny_threshold);

cv::namedWindow(name, cv::WINDOW_AUTOSIZE);

cv::imshow(name, canny_output);

}

// ---------------

cv::Mat canny_output;

const double canny_threshold = 100.0;

cv::Canny(original_image, canny_output, canny_threshold, 3.0 * canny_threshold, 3, true);

typedef std::vector<cv::Point> Contour; // a single contour is a vector of many points

typedef std::vector<Contour> VContours; // many of these are combined to create a vector of contour points

VContours contours;

std::vector<cv::Vec4i> hierarchy;

cv::findContours(canny_output, contours, hierarchy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_SIMPLE);

for (auto & c : contours)

{

std::cout << "contour area: " << cv::contourArea(c) << std::endl;

}

const cv::Scalar green(0, 255, 0);

cv::Mat output = original_image.clone();

for (auto & c : contours)

{

cv::polylines(output, c, true, green, 1, cv::LINE_AA);

}

cv::namedWindow("Contours Drawn Onto Image", cv::WINDOW_AUTOSIZE);

cv::imshow("Contours Drawn Over Image", output);

// ---------------

cv::Mat blurred_image;

cv::GaussianBlur(original_image, blurred_image, cv::Size(3, 3), 0, 0, cv::BORDER_DEFAULT);

const size_t erosion_and_dilation_iterations = 3;

cv::Mat eroded;

cv::erode(blurred_image, eroded, cv::Mat(), cv::Point(-1, -1), erosion_and_dilation_iterations);

cv::Mat dilated;

cv::dilate(eroded, dilated, cv::Mat(), cv::Point(-1, -1), erosion_and_dilation_iterations);

cv::Canny(dilated, canny_output, canny_threshold, 3.0 * canny_threshold, 3, true);

cv::findContours(canny_output, contours, hierarchy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_SIMPLE);

cv::Mat better_output = original_image.clone();

for (auto & c : contours)

{

cv::polylines(better_output, c, true, green, 1, cv::LINE_AA);

}

cv::namedWindow("Another Attempt At Contours", cv::WINDOW_AUTOSIZE);

cv::imshow("Another Attempt At Contours", better_output);

cv::waitKey(0);

return 0;

}
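
On what the printed "contour area" values mean: as far as I know, `cv::contourArea` computes the area with Green's formula, which for a simple polygon comes down to the shoelace formula over the contour points. Here is a minimal sketch of that calculation without any OpenCV dependency. `Pt` and `polygon_area` are names I made up for illustration (they are not part of OpenCV), and this is only meant to show the idea, not to reproduce OpenCV's implementation.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Point so the sketch compiles without OpenCV.
struct Pt
{
    int x;
    int y;
};

// Unsigned area of a closed polygon using the shoelace formula.
// The polygon is implicitly closed: the last point connects back to the first.
double polygon_area(const std::vector<Pt> & contour)
{
    if (contour.size() < 3)
    {
        return 0.0; // a point or a line segment encloses no area
    }

    long long twice_area = 0;
    for (std::size_t i = 0; i < contour.size(); ++i)
    {
        const Pt & a = contour[i];
        const Pt & b = contour[(i + 1) % contour.size()];
        twice_area += static_cast<long long>(a.x) * b.y - static_cast<long long>(b.x) * a.y;
    }

    // the sign depends on the winding order, so take the absolute value
    return std::abs(static_cast<double>(twice_area)) / 2.0;
}
```

For example, the four points (0,0), (4,0), (4,3), (0,3) give an area of 12. Note that this is measured between point coordinates, so it can differ from the number of pixels you would get by drawing and filling the same contour.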