detectMultiScale and CascadeClassifier: getting a better face ROI

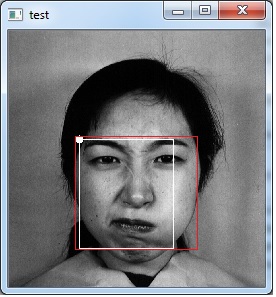

I am trying to get a better face ROI, because I am not very satisfied with the one I currently get. To do that I am using facial landmarks (dlib and its shape predictor). I am trying to get something like the image above.

Some definitions:

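```cpp
#include <dlib/image_processing.h>  // dlib::shape_predictor, dlib::full_object_detection
#include <opencv2/objdetect.hpp>    // cv::CascadeClassifier

dlib::shape_predictor predictor;
cv::CascadeClassifier faceCascade;
dlib::full_object_detection shape;
```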

Then I load the cascade:

```cpp
faceCascade.load(baseDatabasePath + "/" + cascadeDataName2);
```

Then, for each image:

```cpp
std::vector<cv::Rect> faces;
faceCascade.detectMultiScale(output, faces, 1.01, 6, 0, cv::Size(50, 50));
getBetterOverlapRectIndex();
faceROI = output(faces[bestIndex]);
```
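A side note on the `detectMultiScale` call: `scaleFactor = 1.01` makes the detector grow its search window in 1% steps, which means far more scales to scan than the common `1.1`. The sketch below only approximates that stepping (it ignores the cascade's own training window size and the `maxSize` argument), and `countScales` is a hypothetical helper, not an OpenCV function.

```cpp
#include <cassert>

// Rough count of the window sizes visited when a detection window grows
// from minSide to maxSide, multiplying by scaleFactor at each step.
// Hypothetical helper: it approximates, but does not reproduce, OpenCV's loop.
int countScales(double minSide, double maxSide, double scaleFactor) {
    assert(scaleFactor > 1.0 && minSide > 0.0);
    int n = 0;
    for (double side = minSide; side <= maxSide; side *= scaleFactor) {
        ++n;
    }
    return n;
}
```

With a 50 px minimum and faces up to about 480 px, `countScales(50, 480, 1.01)` gives 228 scales versus 24 for `countScales(50, 480, 1.1)`, so `1.01` is considerably slower; whether the finer steps help depends on your images.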

At this point I get an ROI, but I am not satisfied with it; I want something more focused on the face, so I run the landmark predictor inside the detected rectangle:

```cpp
shape = predictor(cimg, openCVRectToDlib(faces[bestIndex]));
```

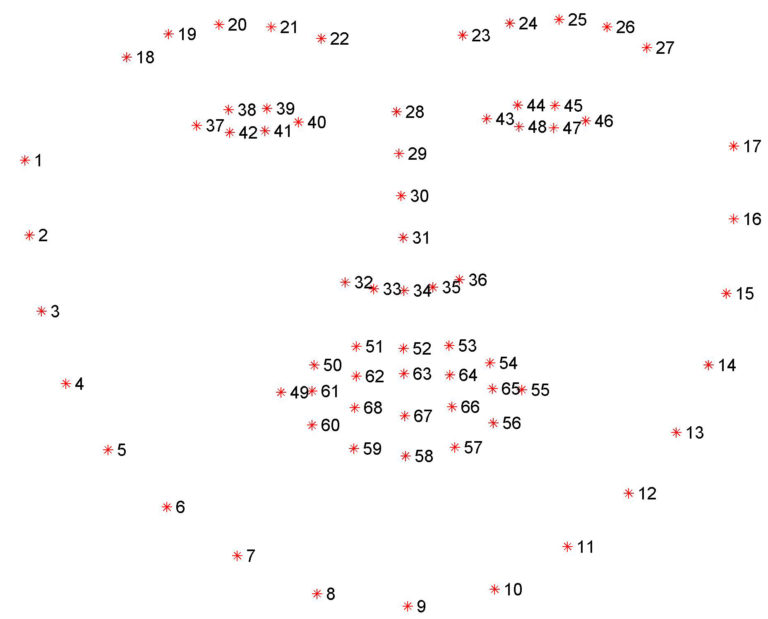
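For reference, dlib's 68-point model follows the iBUG 300-W layout: points 36–41 outline the image-left eye (36 = outer corner, 39 = inner corner, 40–41 = lower lid) and 42–47 the image-right eye (42 = inner corner, 45 = outer corner, 46–47 = lower lid). So the pairs (36, 41) and (42, 47) used in the eye code of this question are a corner plus a neighbouring lower-lid point, not the two corners. The sketch below is a minimal illustration of measuring the eye from its corners and centering it on all six points; `Pt` is a hypothetical stand-in for `dlib::point`/`cv::Point`.

```cpp
#include <array>
#include <cassert>
#include <cstdlib>

// Stand-in for a landmark point (dlib::point / cv::Point in the real code).
struct Pt { long x; long y; };

// Eye landmark indices in the 68-point layout ("left"/"right" = image side).
constexpr int kLeftEyeOuter  = 36;  // first point of the image-left eye
constexpr int kLeftEyeInner  = 39;
constexpr int kRightEyeInner = 42;  // first point of the image-right eye
constexpr int kRightEyeOuter = 45;

// Eye center as the mean of the six contour points starting at `first`.
Pt eyeCenter(const std::array<Pt, 68>& s, int first) {
    assert(first >= 0 && first + 6 <= 68);
    long sx = 0, sy = 0;
    for (int i = first; i < first + 6; ++i) {
        sx += s[i].x;
        sy += s[i].y;
    }
    return {sx / 6, sy / 6};
}

// Eye width as the horizontal distance between its two corner points.
long eyeWidth(const std::array<Pt, 68>& s, int cornerA, int cornerB) {
    return std::labs(s[cornerA].x - s[cornerB].x);
}
```

Usage would be `eyeCenter(s, 36)` with `eyeWidth(s, 36, 39)` for one eye and `eyeCenter(s, 42)` with `eyeWidth(s, 42, 45)` for the other. On a synthetic eye whose corners are 40 px apart, the corner-to-corner width is 40, while the (36, 41) difference is only 10.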

```cpp
cv::Point centerEyeRight = cv::Point((shape.part(42).x() + shape.part(47).x()) / 2, (shape.part(42).y() + shape.part(47).y()) / 2);
cv::Point centerEyeLeft = cv::Point((shape.part(36).x() + shape.part(41).x()) / 2, (shape.part(36).y() + shape.part(41).y()) / 2);

int widthEyeRight = abs(shape.part(42).x() - shape.part(47).x());
int widthEyeLeft = abs(shape.part(36).x() - shape.part(41).x());

int widthFace = (centerEyeRight.x + widthEyeRight) - (centerEyeLeft.x - widthEyeLeft);
int heightFace = widthFace * 1.1;

faceROIAlt = output(cv::Rect(centerEyeLeft.x - (widthFace / 4), centerEyeLeft.y - (heightFace / 4), widthFace, heightFace));
```

But I get this (the white line), whereas I want something like the red line. Where am I going wrong? I think it should be easy, but I am stuck.
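One thing that stands out in the ROI code: `widthFace` is measured from `centerEyeLeft.x - widthEyeLeft`, but the rectangle starts at `centerEyeLeft.x - widthFace / 4`, so the box edges do not line up with the span that was measured. Also, `output(rect)` fails an assertion whenever the rectangle leaves the image. Below is a minimal sketch of an alternative, assuming the box is centered on the midpoint between the two eye centers, sized from the inter-eye distance, and clipped to the image. The proportions `kWidthPerEyeDistance` and `kEyeLineFromTop` are hypothetical tuning values (only the 1.1 height ratio comes from the question), and `Pt`/`Box` are stand-ins for `cv::Point`/`cv::Rect`. In OpenCV the clipping step can be written as `roi & cv::Rect(0, 0, output.cols, output.rows)`.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Pt  { int x; int y; };
struct Box { int x; int y; int width; int height; };

// Intersection of two boxes (what `a & b` does for cv::Rect).
Box intersect(const Box& a, const Box& b) {
    int x1 = std::max(a.x, b.x);
    int y1 = std::max(a.y, b.y);
    int x2 = std::min(a.x + a.width, b.x + b.width);
    int y2 = std::min(a.y + a.height, b.y + b.height);
    if (x2 <= x1 || y2 <= y1) return {0, 0, 0, 0};  // no overlap
    return {x1, y1, x2 - x1, y2 - y1};
}

// Hypothetical tuning constants; adjust them by eye on real images.
constexpr double kWidthPerEyeDistance = 2.0;   // face width / inter-eye distance
constexpr double kHeightPerWidth      = 1.1;   // ratio used in the question
constexpr double kEyeLineFromTop      = 0.35;  // eye line as a fraction of height

// Face box centered between the eyes, clipped to an imgW x imgH image.
Box faceBoxFromEyes(Pt leftEye, Pt rightEye, int imgW, int imgH) {
    assert(imgW > 0 && imgH > 0);
    double eyeDist = std::hypot(double(rightEye.x - leftEye.x),
                                double(rightEye.y - leftEye.y));
    double cx = (leftEye.x + rightEye.x) / 2.0;
    double cy = (leftEye.y + rightEye.y) / 2.0;
    double w = eyeDist * kWidthPerEyeDistance;
    double h = w * kHeightPerWidth;
    Box box{int(std::lround(cx - w / 2)), int(std::lround(cy - h * kEyeLineFromTop)),
            int(std::lround(w)), int(std::lround(h))};
    return intersect(box, Box{0, 0, imgW, imgH});
}
```

For eye centers at (100, 200) and (200, 200) in a 640x480 image this gives the box (50, 123, 200, 220). When the eyes sit near a corner the box is clipped instead of making `output(rect)` throw.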