Using EM in OpenCV: same training samples, but different results

Sorry for the mistake! I have updated this post. Could you try this code and show me the results you get on your machine?

Hi everyone,

I'm using the EM module of OpenCV (tried 2.4.2 and 2.4.3). I want to fit a Gaussian mixture model with EM to my samples (676×64, generated by SIFT+PCA). However, when I train EM twice on the same samples, the results are different.

Here is the test code:

```cpp
#include <iostream>
#include <string>
#include <opencv2/core/core.hpp>
#include <opencv2/legacy/legacy.hpp>  // CvEM and CvEMParams live in the legacy module in OpenCV 2.4

// Trains a 10-component spherical GMM on `samples` and prints its means and weights.
static void trainAndPrint(const cv::Mat& samples, const std::string& tag)
{
    CvEM model;
    CvEMParams params;
    params.covs = NULL;                               // no user-supplied initial values
    params.means = NULL;
    params.weights = NULL;
    params.probs = NULL;
    params.nclusters = 10;
    params.cov_mat_type = CvEM::COV_MAT_SPHERICAL;
    params.start_step = CvEM::START_AUTO_STEP;        // automatic (k-means based) initialization
    params.term_crit.type = CV_TERMCRIT_ITER | CV_TERMCRIT_EPS;

    cv::Mat labels;
    model.train(samples, cv::Mat(), params, &labels);

    cv::Mat means = model.getMeans();                 // nclusters x dims, CV_64F
    cv::Mat weights = model.getWeights();             // 1 x nclusters, CV_64F

    std::cout << "means" << tag << std::endl;
    for (int j = 0; j < means.rows; ++j) {
        for (int i = 0; i < means.cols; ++i)
            std::cout << means.at<double>(j, i) << " ";
        std::cout << std::endl;
    }
    std::cout << "weights" << tag << std::endl;
    for (int j = 0; j < (int)weights.total(); ++j)
        std::cout << weights.at<double>(j) << " ";
    std::cout << std::endl;
}

int main()
{
    const int N = 9;  // number of synthetic clusters
    cv::Mat samples(50, 2, CV_32FC1);

    // View the 50x2 matrix as 50x1 with 2 channels so randn fills (x, y) pairs.
    samples = samples.reshape(2, 0);
    for (int i = 0; i < N; ++i) {
        cv::Mat subSamples = samples.rowRange(i * 50 / N, (i + 1) * 50 / N);
        cv::Scalar mean((i % 3 + 1) * 256 / 4, (i / 3 + 1) * 256 / 4);
        cv::Scalar stddev(30, 30);                    // randn takes a standard deviation
        cv::randn(subSamples, mean, stddev);
    }
    samples = samples.reshape(1, 0);                  // back to 50x2, single channel
    samples.convertTo(samples, CV_64FC1);

    std::cout << "samples" << std::endl;
    for (int j = 0; j < samples.rows; ++j) {
        for (int i = 0; i < samples.cols; ++i)
            std::cout << samples.at<double>(j, i) << " ";
        std::cout << std::endl;
    }
    std::cout << std::endl;

    // Train twice on exactly the same samples with exactly the same parameters.
    trainAndPrint(samples, "1");
    trainAndPrint(samples, "2");
    return 0;
}
```

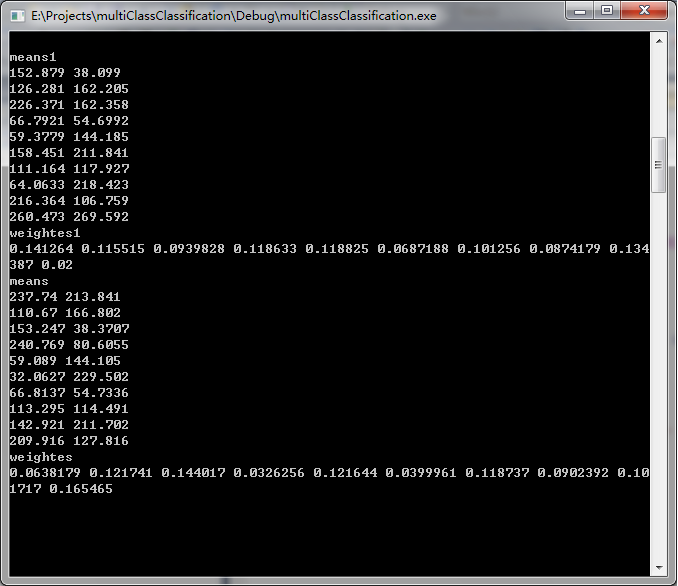

here is a result:

I'm looking forward to your replies. Thanks for reading!
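When comparing the two printed models, keep in mind that EM gives no guarantee about the order of the mixture components, so printing the means row by row can make two equivalent models look different. The sketch below is plain C++ with no OpenCV dependency; the names `Mean2D` and `maxMatchedMeanDistance` are hypothetical. It pairs each mean of one model with the nearest unused mean of the other and reports the largest distance among the pairs. Greedy pairing is an approximation of optimal matching, which should be adequate when the clusters are well separated, as in the test data above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// One 2-D component mean, e.g. one row of the EM "means" matrix (hypothetical helper type).
struct Mean2D { double x, y; };

// Greedily pairs each mean in `a` with the nearest not-yet-used mean in `b` and
// returns the largest distance among the pairs. A value near zero means the two
// models agree up to a reordering of their components.
double maxMatchedMeanDistance(const std::vector<Mean2D>& a, const std::vector<Mean2D>& b)
{
    assert(a.size() == b.size());
    std::vector<bool> used(b.size(), false);
    double worst = 0.0;
    for (const Mean2D& m : a) {
        double best = std::numeric_limits<double>::infinity();
        std::size_t bestIdx = 0;
        for (std::size_t k = 0; k < b.size(); ++k) {
            if (used[k]) continue;
            double d = std::hypot(m.x - b[k].x, m.y - b[k].y);
            if (d < best) { best = d; bestIdx = k; }
        }
        used[bestIdx] = true;
        worst = std::max(worst, best);
    }
    return worst;
}
```

In the program above, the rows of `model.getMeans()` from each run could be copied into two such vectors before calling the function.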

You train your GMM with two different models and get two different results. So, what exactly is your question?

Also, your parameters are different (epsilon and start_step). Unrelated, but I suggest you use the C++ interface (http://docs.opencv.org/modules/ml/doc/expectation_maximization.html#ml-expectation-maximization).

Hi Guanta and Mathieu, I have updated the code. This time all the parameters are the same, but I still get different results. Any suggestions?
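A likely explanation: with `START_AUTO_STEP`, EM does not start from a fixed point. It first runs k-means, whose initial centers are drawn from OpenCV's global random number generator (`cv::theRNG()`). The first `train` call advances that generator, so the second call starts from different centers and can converge to a different local optimum. Resetting the generator to the same state immediately before each call (for example `cv::theRNG().state = 12345;`, with an arbitrary seed) should then make the two runs agree. This is an assumption about the 2.4 internals and has not been checked against 2.4.2/2.4.3. The effect can be illustrated with the self-contained sketch below. It is a 1-D, two-component EM written with the standard library only, not OpenCV's implementation, and the names `Gmm2`, `gaussPdf` and `fitGmm2` are hypothetical. Because the initial means are drawn from an explicitly seeded `std::mt19937`, the same seed always gives the same fit.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Result of a two-component 1-D Gaussian mixture fit (hypothetical helper type).
struct Gmm2 { double mean[2], var[2], weight[2]; };

// Density of the normal distribution N(x; mu, var).
static double gaussPdf(double x, double mu, double var) {
    const double kPi = 3.14159265358979323846;
    return std::exp(-0.5 * (x - mu) * (x - mu) / var) / std::sqrt(2.0 * kPi * var);
}

// Plain EM for a 2-component GMM. The only randomness is the choice of two
// distinct data points as initial means, drawn from an RNG seeded with `seed`;
// everything after that is deterministic.
Gmm2 fitGmm2(const std::vector<double>& x, unsigned seed, int iters = 100) {
    assert(x.size() >= 2);
    std::mt19937 rng(seed);
    std::uniform_int_distribution<std::size_t> pick(0, x.size() - 1);
    std::size_t i0 = pick(rng), i1 = pick(rng);
    while (i1 == i0) i1 = pick(rng);

    // Initial guess: random means, overall sample variance, equal weights.
    double mu = 0.0, s2 = 0.0;
    for (double v : x) mu += v;
    mu /= x.size();
    for (double v : x) s2 += (v - mu) * (v - mu);
    s2 = s2 / x.size() + 1e-6;
    Gmm2 g;
    g.mean[0] = x[i0]; g.mean[1] = x[i1];
    g.var[0] = g.var[1] = s2;
    g.weight[0] = g.weight[1] = 0.5;

    std::vector<double> r(x.size());  // responsibility of component 0 for each point
    for (int it = 0; it < iters; ++it) {
        // E-step: posterior probability that each point belongs to component 0.
        for (std::size_t n = 0; n < x.size(); ++n) {
            double p0 = g.weight[0] * gaussPdf(x[n], g.mean[0], g.var[0]);
            double p1 = g.weight[1] * gaussPdf(x[n], g.mean[1], g.var[1]);
            r[n] = (p0 + p1 > 0.0) ? p0 / (p0 + p1) : 0.5;
        }
        // M-step: re-estimate weight, mean and variance of each component.
        for (int k = 0; k < 2; ++k) {
            double nk = 0.0, sx = 0.0;
            for (std::size_t n = 0; n < x.size(); ++n) {
                double w = (k == 0) ? r[n] : 1.0 - r[n];
                nk += w;
                sx += w * x[n];
            }
            if (nk < 1e-12) continue;  // empty component: keep previous estimate
            double m = sx / nk, sv = 0.0;
            for (std::size_t n = 0; n < x.size(); ++n) {
                double w = (k == 0) ? r[n] : 1.0 - r[n];
                sv += w * (x[n] - m) * (x[n] - m);
            }
            g.mean[k] = m;
            g.var[k] = sv / nk + 1e-6;  // small floor keeps the variance positive
            g.weight[k] = nk / x.size();
        }
    }
    return g;
}
```

Calling `fitGmm2(data, 42)` twice on the same data gives bit-identical models, while a different seed can lead EM to a different local optimum. That is the same pattern as two back-to-back `train` calls that share one advancing global RNG.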