Different undistortion results (first rotate, then undistort OR first undistort, then rotate)

Hello,

I don't know why, but I get different results depending on whether I first rotate the image and then undistort it, or first undistort it and then rotate it.

There is a very small difference between the two results.

(In the middle of the image the results seem to be the same, but near the upper and lower borders of the images the difference is larger.)

My minimal code is shown below:

```cpp
#include <opencv2/opencv.hpp>

using namespace cv;

int main()
{
    Mat camera_matrix, distCoeffs;

    const char* out_file = "C:\\Users\\Bob\\Documents\\Visual Studio 2013\\Projects\\Samples\\camera.txt";

    FileStorage fs(out_file, FileStorage::READ);
    fs["K"] >> camera_matrix;
    fs["D"] >> distCoeffs;

    // Reading the images
    Mat image1 = imread("C:\\Users\\Bob\\Documents\\Visual Studio 2013\\Projects\\Samples\\1.jpg");
    Mat image2 = imread("C:\\Users\\Bob\\Documents\\Visual Studio 2013\\Projects\\Samples\\2.jpg");

    Mat image1_undistorted, image2_undistorted;
    Mat image1_rot, image2_rot, image1_flip, image2_flip;

    // First workflow (first undistort the image, then rotate it)
    ///////////////////////////////////////////////////////////////////////

    undistort(image1, image1_undistorted, camera_matrix, distCoeffs);
    undistort(image2, image2_undistorted, camera_matrix, distCoeffs);

    // transpose + flip around the x-axis = rotation by 90 degrees counter-clockwise
    transpose(image1_undistorted, image1_rot);
    flip(image1_rot, image1_undistorted, 0);
    transpose(image2_undistorted, image2_rot);
    flip(image2_rot, image2_undistorted, 0);

    imwrite("image1_undistorted_FIRSTWORKFLOW.jpg", image1_undistorted);
    imwrite("image2_undistorted_FIRSTWORKFLOW.jpg", image2_undistorted);

    // Second workflow (first rotate the image, then undistort it)
    ///////////////////////////////////////////////////////////////////////

    // Adjusting the camera matrix and distortion coefficients to the rotated image
    double fx = camera_matrix.at<double>(0, 0);
    double fy = camera_matrix.at<double>(1, 1);
    double cx = camera_matrix.at<double>(0, 2);
    double cy = camera_matrix.at<double>(1, 2);

    camera_matrix.at<double>(0, 0) = fy;
    camera_matrix.at<double>(1, 1) = fx;
    camera_matrix.at<double>(0, 2) = cy;
    camera_matrix.at<double>(1, 2) = image1.size().width - cx;

    // Swap the tangential coefficients p1 and p2 (assumes D is stored as a 1xN row vector)
    double p1 = distCoeffs.at<double>(0, 2);
    double p2 = distCoeffs.at<double>(0, 3);
    distCoeffs.at<double>(0, 2) = p2;
    distCoeffs.at<double>(0, 3) = p1;

    transpose(image1, image1_rot);
    flip(image1_rot, image1_flip, 0);
    transpose(image2, image2_rot);
    flip(image2_rot, image2_flip, 0);

    undistort(image1_flip, image1_undistorted, camera_matrix, distCoeffs, Mat());
    undistort(image2_flip, image2_undistorted, camera_matrix, distCoeffs, Mat());

    imwrite("image1_undistorted_SECONDWORKFLOW.jpg", image1_undistorted);
    imwrite("image2_undistorted_SECONDWORKFLOW.jpg", image2_undistorted);

    return 0;
}
```
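For comparison, here is how the intrinsics transform under this particular rotation. It is a derivation under two assumptions: `distCoeffs` holds OpenCV's five-coefficient model (k1, k2, p1, p2, k3), and pixel centres lie at integer coordinates, so that `transpose` followed by `flip(..., 0)` on an image of width W maps pixel (x, y) to (y, W - 1 - x), a 90-degree counter-clockwise rotation.

```latex
\begin{aligned}
&(x, y) \mapsto (x', y') = (y,\; W - 1 - x), \qquad x_n = \frac{x - c_x}{f_x},\quad y_n = \frac{y - c_y}{f_y} \\
&x'_n = y_n, \qquad y'_n = -x_n \\
&f'_x = f_y, \qquad f'_y = f_x, \qquad c'_x = c_y, \qquad c'_y = W - 1 - c_x \\
&x_d = x_n R + 2 p_1 x_n y_n + p_2 (r^2 + 2 x_n^2), \qquad y_d = y_n R + p_1 (r^2 + 2 y_n^2) + 2 p_2 x_n y_n \\
&R = 1 + k_1 r^2 + k_2 r^4 + k_3 r^6, \qquad r^2 = x_n^2 + y_n^2 = (x'_n)^2 + (y'_n)^2 \\
&x'_d = y_d,\quad y'_d = -x_d \;\Longrightarrow\; p'_1 = -p_2, \qquad p'_2 = p_1, \qquad k'_i = k_i
\end{aligned}
```

Under these assumptions the code above differs in two places: it swaps p1 and p2 without the sign change on the new p1, and it sets c'_y to W - c_x instead of W - 1 - c_x. Both effects are negligible near the image centre and grow toward the borders. For the sign error alone, the vertical distorted coordinate of the rotated image changes by 2·p2·(r² + 2·v²) in normalized units, where v is the vertical normalized coordinate of the rotated image; this is largest near the top and bottom borders, which would be consistent with the observed pattern, although it has not been verified on these particular images.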

The results are shown below:

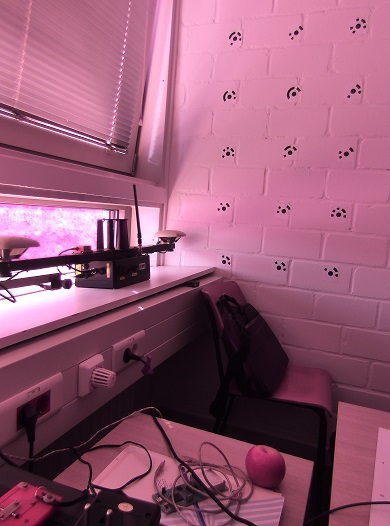

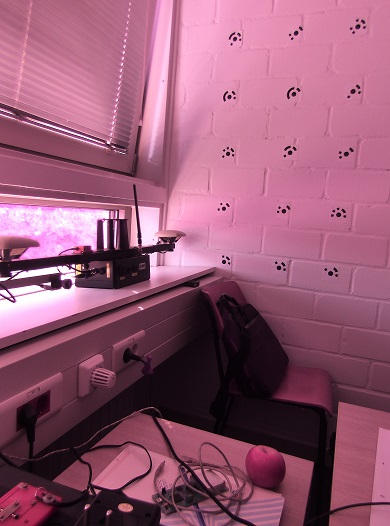

The first image (both workflow results)

The second image (both workflow results)

---------------------------------------------------------------------------------------------------------------------------------------

If I compute the difference between the images produced by the two workflows, I get the following:

image1_undistorted_FIRSTWORKFLOW - image1_undistorted_SECONDWORKFLOW =

image2_undistorted_FIRSTWORKFLOW - image2_undistorted_SECONDWORKFLOW =

As you can see, in the middle of the "difference images" there seems to be no difference.

But near the top and bottom of the "difference images" the difference is larger.
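One way to test whether the coefficient adjustment explains this pattern, without touching any images, is to compare the two workflows point by point. Below is a minimal, OpenCV-free sketch in plain C++11. It assumes the five-coefficient model (k1, k2, p1, p2, k3) and the (x, y) to (y, W - 1 - x) mapping of `transpose` + `flip(..., 0)`. The names `Intrinsics`, `Distortion`, `distortPixel`, `rotateCCW`, `rotateIntrinsicsCCW`, `rotateDistortionCCW` and `mismatch` are hypothetical helpers for this illustration, not OpenCV API. Since `undistort` looks up, for every output pixel, the distorted source position, both workflows sample the same source pixel exactly when "distort, then rotate" equals "rotate, then distort with the adjusted parameters"; `mismatch` returns the pixel distance between the two.

```cpp
#include <cmath>

// Hypothetical helper types for this sketch (not OpenCV types).
struct Intrinsics { double fx, fy, cx, cy; };
struct Distortion { double k1, k2, p1, p2, k3; };

// Forward distortion model (as used when building an undistortion map):
// pixel -> normalized -> distorted normalized -> pixel.
inline void distortPixel(const Intrinsics& K, const Distortion& D,
                         double x, double y, double& xd, double& yd)
{
    const double xn = (x - K.cx) / K.fx;
    const double yn = (y - K.cy) / K.fy;
    const double r2 = xn * xn + yn * yn;
    const double radial = 1.0 + D.k1 * r2 + D.k2 * r2 * r2 + D.k3 * r2 * r2 * r2;
    const double xdn = xn * radial + 2.0 * D.p1 * xn * yn + D.p2 * (r2 + 2.0 * xn * xn);
    const double ydn = yn * radial + D.p1 * (r2 + 2.0 * yn * yn) + 2.0 * D.p2 * xn * yn;
    xd = K.fx * xdn + K.cx;
    yd = K.fy * ydn + K.cy;
}

// transpose + flip(..., 0) on an image of width W: (x, y) -> (y, W - 1 - x).
inline void rotateCCW(double W, double x, double y, double& xr, double& yr)
{
    xr = y;
    yr = W - 1.0 - x;
}

// Camera matrix of the rotated image (principal point uses W - 1 - cx).
inline Intrinsics rotateIntrinsicsCCW(const Intrinsics& K, double W)
{
    return { K.fy, K.fx, K.cy, W - 1.0 - K.cx };
}

// Distortion of the rotated image: p1' = -p2, p2' = p1, radial terms unchanged.
inline Distortion rotateDistortionCCW(const Distortion& D)
{
    return { D.k1, D.k2, -D.p2, D.p1, D.k3 };
}

// Pixel distance between "distort with (K, D), then rotate" and
// "rotate, then distort with (K2, D2)" for the source pixel (x, y).
inline double mismatch(const Intrinsics& K, const Distortion& D,
                       const Intrinsics& K2, const Distortion& D2,
                       double W, double x, double y)
{
    double xd, yd, xa, ya;
    distortPixel(K, D, x, y, xd, yd);
    rotateCCW(W, xd, yd, xa, ya);          // distort, then rotate

    double xr, yr, xb, yb;
    rotateCCW(W, x, y, xr, yr);
    distortPixel(K2, D2, xr, yr, xb, yb);  // rotate, then distort

    return std::hypot(xa - xb, ya - yb);
}
```

With `rotateDistortionCCW`, the mismatch should be zero up to floating-point rounding at every pixel. Passing a plainly swapped `Distortion{k1, k2, p2, p1, k3}` instead, as the code in the question does, gives zero at the principal point and a mismatch that grows toward the image borders, scaled by p2. Plugging in the real `K` and `D` from `camera.txt` would show whether the size of this effect matches the difference images.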

Can someone tell me what I'm doing wrong, or why the two workflows give different results?