Hi all

Thank you for your input and suggestions on my question about how to detect the LCD/LED display in an image.

Figure 1 below shows a scratchcard. We want to locate and segment the yellowish area, which I refer to as the LCD/LED display. Once that area has been segmented by color, the digits inside it can be extracted.

Figure 1 Scratchcard Image

For this task we will use OpenCV and C++.

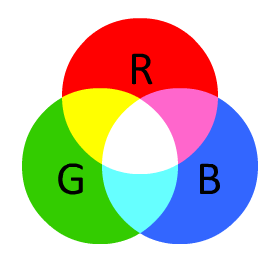

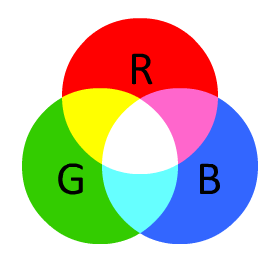

OpenCV stores images in BGR (blue, green, red) order, not RGB (red, green, blue) as one might expect. Each pixel takes 3 bytes (24 bits) of data, split into one byte (8 bits) each for blue, green and red. One byte can store a value from 0 to 255, so there are 256 levels of blue, 256 levels of green and 256 levels of red. These primary colors are mixed in different proportions to get the desired color, in this case the yellowish LCD/LED display color. Figure 2 shows the RGB color space.
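To make the byte layout concrete, here is a small sketch in plain C++ (no OpenCV needed). The names `BgrPixel`, `pixelAt` and `colorCount` are made up for this example, and it assumes a tightly packed buffer with 3 bytes per pixel and no row padding.

```cpp
#include <cstddef>
#include <cstdint>

// One 8-bit, 3-channel pixel in BGR order: blue is stored first, red last.
struct BgrPixel {
    std::uint8_t b;
    std::uint8_t g;
    std::uint8_t r;
};

// Read the pixel at (row, col) from a tightly packed BGR buffer.
// Assumption: 3 bytes per pixel and no padding at the end of each row.
constexpr BgrPixel pixelAt(const std::uint8_t* data, std::size_t width,
                           std::size_t row, std::size_t col) {
    return BgrPixel{data[(row * width + col) * 3 + 0],   // blue
                    data[(row * width + col) * 3 + 1],   // green
                    data[(row * width + col) * 3 + 2]};  // red
}

// Number of distinct colors with 256 levels per channel.
constexpr unsigned long colorCount() { return 256UL * 256UL * 256UL; }
```

A pure yellow pixel is stored as the bytes `0, 255, 255` (no blue, full green, full red), which is easy to misread if you expect RGB order.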

Figure 2 RGB color space

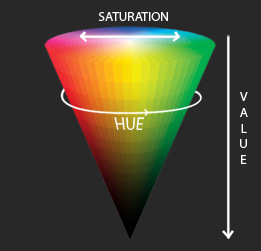

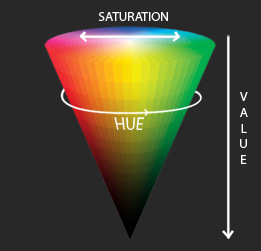

What this task needs is to isolate the yellowish color in order to locate the LCD/LED display. This is called color-based segmentation, also known as color thresholding. However, although OpenCV images are stored in BGR format, BGR falls short for color-based segmentation. The HSV color space shown in Figure 3 seems more suitable.

Figure 3 HSV

HSV stands for Hue, Saturation and Value. Hue defines the color itself, Saturation defines how strong the color is (low saturation means the color is close to white), and Value defines the brightness of the color (low value means the color is close to black). Unlike RGB, HSV therefore separates the brightness in an image from the color information, which is very useful for the task at hand. It also gives the advantage that the color of interest is described by a single number, its hue, despite the many shades of that color.
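To illustrate how hue stays the same while brightness changes, here is a rough sketch of a BGR to HSV conversion in plain C++. It follows the usual 8-bit convention (hue in degrees divided by 2, saturation and value scaled to 0..255) but uses integer arithmetic, so results may differ by one from what `cv::cvtColor` with `cv::COLOR_BGR2HSV` returns. `Hsv`, `bgrToHsv`, `maxOf` and `minOf` are names invented for this example.

```cpp
#include <cstdint>

struct Hsv {
    int h;  // hue, degrees / 2, so 0..179
    int s;  // saturation, 0..255
    int v;  // value (brightness), 0..255
};

constexpr int maxOf(int a, int b) { return a > b ? a : b; }
constexpr int minOf(int a, int b) { return a < b ? a : b; }

// Approximate 8-bit BGR -> HSV conversion (integer arithmetic, truncating).
constexpr Hsv bgrToHsv(std::uint8_t b, std::uint8_t g, std::uint8_t r) {
    const int B = b, G = g, R = r;
    const int v = maxOf(R, maxOf(G, B));
    const int delta = v - minOf(R, minOf(G, B));
    if (delta == 0) return Hsv{0, 0, v};  // grey: hue is undefined, use 0

    int degrees = 0;
    if (v == R)      degrees = 60 * (G - B) / delta;        // between magenta and yellow
    else if (v == G) degrees = 120 + 60 * (B - R) / delta;  // between yellow and cyan
    else             degrees = 240 + 60 * (R - G) / delta;  // between cyan and magenta
    if (degrees < 0) degrees += 360;

    // delta != 0 implies v > 0, so the division below is safe.
    return Hsv{degrees / 2, 255 * delta / v, v};
}
```

With this sketch, a bright yellow `(B=0, G=255, R=255)` and a dark yellow `(B=0, G=128, R=128)` both come out with hue 30 and differ only in value, which is exactly why a single hue range can cover many shades.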

In OpenCV the HSV value ranges differ from those of other applications such as GIMP. In GIMP, Hue ranges from 0 to 360, Saturation from 0 to 100 and Value from 0 to 100. In OpenCV (8-bit images), Hue ranges from 0 to 180 (the angle in degrees is divided by 2 so that it fits in one byte), Saturation from 0 to 255 and Value from 0 to 255.

GIMP hue values for the colors:

Orange 0-44, Yellow 44-76, Green 76-150, Blue 150-260, Violet 260-320, Red 320-360

OpenCV hue values for the colors:

Orange 0-22, Yellow 22-38, Green 38-75, Blue 75-130, Violet 130-160, Red 160-180
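The OpenCV hue boundaries are just the GIMP ones divided by 2. A tiny sketch of the scaling, in case you pick values in GIMP and want to use them in OpenCV (the helper names are made up for this example, and rounding the percentage to the nearest integer is my own choice):

```cpp
// GIMP hue (0..360 degrees) -> OpenCV 8-bit hue (degrees / 2).
constexpr int gimpHueToOpenCv(int hueDegrees) { return hueDegrees / 2; }

// GIMP saturation or value (0..100 percent) -> OpenCV 8-bit scale (0..255),
// rounded to the nearest integer.
constexpr int gimpPercentToOpenCv(int percent) {
    return (percent * 255 + 50) / 100;
}
```

For example, the GIMP yellow range 44-76 maps to 22-38, and a GIMP saturation of 50 maps to 128.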

For this task, after experimenting with different values to isolate the yellowish part, the suitable HSV ranges are: Hue from 20 to 70 ...
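As a rough illustration of the thresholding step, here is a plain C++ sketch that checks each HSV pixel against a range and returns the bounding box of the matching pixels. Only the hue range 20 to 70 comes from the post; the saturation and value limits are cut off above, so this sketch leaves them unconstrained (0..255) as a placeholder. All names (`HsvPixel`, `HsvRange`, `Box`, `withinRange`, `boundingBox`, `kYellowish`) are invented for the example. In OpenCV itself the same idea would normally be done with `cv::cvtColor` followed by `cv::inRange`.

```cpp
#include <cstdint>

struct HsvPixel { std::uint8_t h, s, v; };
struct HsvRange { HsvPixel lower, upper; };  // both bounds inclusive

// Bounding box of matching pixels; 'found' is false if nothing matched.
struct Box { int left, top, right, bottom; bool found; };

// Hue 20..70 is from the post. S and V are left wide open here because the
// original values are not available (placeholder assumption).
constexpr HsvRange kYellowish{{20, 0, 0}, {70, 255, 255}};

constexpr bool withinRange(const HsvPixel& p, const HsvRange& r) {
    return p.h >= r.lower.h && p.h <= r.upper.h &&
           p.s >= r.lower.s && p.s <= r.upper.s &&
           p.v >= r.lower.v && p.v <= r.upper.v;
}

// Scan a row-major HSV image and grow a box around every in-range pixel.
constexpr Box boundingBox(const HsvPixel* pixels, int width, int height,
                          const HsvRange& range) {
    Box box{width, height, -1, -1, false};
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            if (!withinRange(pixels[y * width + x], range)) continue;
            if (x < box.left)   box.left = x;
            if (y < box.top)    box.top = y;
            if (x > box.right)  box.right = x;
            if (y > box.bottom) box.bottom = y;
            box.found = true;
        }
    }
    return box;
}
```

A single bounding box only makes sense if the yellowish area is the only in-range region in the image. With stray yellowish pixels elsewhere you would want to clean up the mask or pick the largest connected region first.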

Please give more information: does your LCD/LED display always look similar (maybe post an example image), or would you like to detect every possible LCD/LED display?

Hi Guanta, thank you so much for your reply. I have edited my initial post to attach the images I would like to read. They are not LCDs per se, but finding the region where the value is requires identifying the LCD-like region first and then reading the individual segments for the digits. This is where I would like some help: how to identify them dynamically, as I have to process a number of them.