

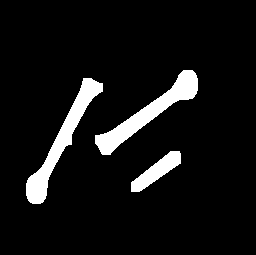

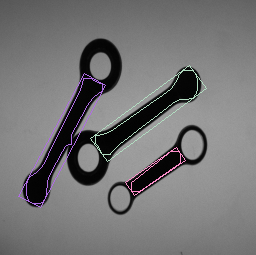

A basic approach using morphological operations and the distance transform is shown in the OpenCV program below. You will need to test it further to see how well it fits all of your use cases. My basic assumption is that, to extract an object, you need a feature that is prominent in every object. The feature I used is the trunk of each object, which I find to be the strongest. You can also use it to get the orientation of each object. So in the end you do not need to detect the full object to obtain the information you want, only part of it.
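To make the idea concrete before the full program, here is a minimal, dependency-free sketch of why thresholding a distance transform keeps the thick trunk and drops thin parts. The names `Grid`, `distanceTransformL1`, and `coreMask` are made up for this illustration and are not OpenCV functions. The sketch uses the city-block (L1) metric rather than the Euclidean (L2) distance the OpenCV program uses, and it assumes the mask contains at least some background.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

using Grid = std::vector<std::vector<int>>;  // illustrative type, not an OpenCV one

// Two-pass city-block (L1) distance transform: every foreground cell
// (non-zero) receives its distance to the nearest background cell (zero).
Grid distanceTransformL1(const Grid& mask) {
    const int rows = static_cast<int>(mask.size());
    const int cols = rows > 0 ? static_cast<int>(mask[0].size()) : 0;
    const int far = rows + cols + 1;  // larger than any distance inside the grid
    Grid dist(rows, std::vector<int>(cols, 0));

    // Forward pass: propagate distances from the top and left neighbours.
    for (int r = 0; r < rows; ++r) {
        assert(static_cast<int>(mask[r].size()) == cols);  // grid must be rectangular
        for (int c = 0; c < cols; ++c) {
            if (mask[r][c] == 0) continue;  // background stays at 0
            int d = far;
            if (r > 0) d = std::min(d, dist[r - 1][c] + 1);
            if (c > 0) d = std::min(d, dist[r][c - 1] + 1);
            dist[r][c] = d;
        }
    }
    // Backward pass: propagate distances from the bottom and right neighbours.
    for (int r = rows - 1; r >= 0; --r) {
        for (int c = cols - 1; c >= 0; --c) {
            if (mask[r][c] == 0) continue;
            if (r + 1 < rows) dist[r][c] = std::min(dist[r][c], dist[r + 1][c] + 1);
            if (c + 1 < cols) dist[r][c] = std::min(dist[r][c], dist[r][c + 1] + 1);
        }
    }
    return dist;
}

// Keep only cells whose distance exceeds half of the maximum distance,
// mirroring "normalize to [0, 1], then threshold at 0.5".
Grid coreMask(const Grid& dist) {
    int maxDist = 0;
    for (const auto& row : dist)
        for (int d : row) maxDist = std::max(maxDist, d);

    Grid core(dist.size());
    for (std::size_t r = 0; r < dist.size(); ++r) {
        core[r].reserve(dist[r].size());
        for (int d : dist[r]) core[r].push_back(2 * d > maxDist ? 1 : 0);
    }
    return core;
}
```

For a 5x5 block with a one-cell-wide branch sticking out of it, the branch cells are only one step away from the background, so they fall below the half-maximum threshold, while the middle of the block survives as a marker.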

#include <iostream>

#include <opencv2/opencv.hpp>

using namespace std;

using namespace cv;

int main()

{

// Load your image

cv::Mat src = cv::imread("blobs.png");

// Check if everything was fine

if (!src.data)

return -1;

// Show source image

cv::imshow("src", src);

// Create binary image from source image

cv::Mat gray;

cv::cvtColor(src, gray, cv::COLOR_BGR2GRAY);

// cv::imshow("gray", gray);

// Obtain binary image (with the Otsu flag the threshold is chosen

// automatically, so the 40 passed below is ignored)

Mat bw;

cv::threshold(gray, bw, 40, 255, cv::THRESH_BINARY_INV | cv::THRESH_OTSU);

cv::imshow("bin", bw);

// Erode a bit

Mat kernel = Mat::ones(3, 3, CV_8UC1);

erode(bw, bw, kernel);

// imshow("erode", bw);

// Perform the distance transform algorithm

Mat dist;

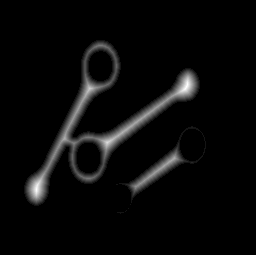

distanceTransform(bw, dist, DIST_L2, 5);

// Normalize the distance image to the range [0.0, 1.0]

// so we can visualize and threshold it

normalize(dist, dist, 0, 1., NORM_MINMAX);

imshow("distTransf", dist);

// Threshold to obtain the peaks

// These will be the markers for the foreground objects

threshold(dist, dist, .5, 1., THRESH_BINARY);

// Dilate the dist image a bit. This step is optional, since in

// other use cases it might cause problems. Here, though, it works quite well

Mat kernel1 = Mat::ones(5, 5, CV_8UC1);

dilate(dist, dist, kernel1, Point(-1, -1), 2);

imshow("peaks", dist);

// Create the CV_8U version of the distance image

// It is needed for findContours()

Mat dist_8u;

dist.convertTo(dist_8u, CV_8U);

// Find the contour of each marker

vector<Vec4i> hierarchy;

vector<vector<Point> > contours;

findContours(dist_8u, contours, hierarchy, RETR_TREE, CHAIN_APPROX_SIMPLE);

// Find the rotated rectangles

vector<RotatedRect> minRect( contours.size() );

for( size_t i = 0; i < contours.size(); i++ )

{

minRect[i] = minAreaRect( Mat(contours[i]) );

}

RNG rng(12345);

for( size_t i = 0; i< contours.size(); i++ )

{

Scalar color = Scalar( rng.uniform(0, 255), rng.uniform(0,255), rng.uniform(0,255) );

// contour

drawContours( src, contours, static_cast<int>(i), color, 1, 8, vector<Vec4i>(), 0, Point() );

// rotated rectangle

Point2f rect_points[4]; minRect[i].points( rect_points );

for( int j = 0; j < 4; j++ )

line( src, rect_points[j], rect_points[(j+1)%4], color, 1, 8 );

}

/* From here you can extract the orientation of each object using

* the information available from the contours and the rotated

* rectangles, for example the center point, the rectangle angle, etc.

*/

cv::imshow("result", src);

waitKey(0);

return 0;

}
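As a rough sketch of how the rectangle angle could be turned into an object orientation: the width and height of a fitted rectangle can swap roles depending on how it was fitted, so it helps to always report the direction of the longer side. `BoxSketch` and `longAxisAngleDeg` are hypothetical names for this illustration. The struct only mirrors the `size.width`, `size.height`, and `angle` fields of `cv::RotatedRect`, and the code assumes the angle gives the direction of the width side, in degrees.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-in for the fields of cv::RotatedRect that matter here.
struct BoxSketch {
    float width;     // corresponds to RotatedRect::size.width
    float height;    // corresponds to RotatedRect::size.height
    float angleDeg;  // corresponds to RotatedRect::angle (degrees)
};

// Returns the direction of the longer side in degrees, folded into [0, 180).
// Assumes angleDeg describes the direction of the width side, so the
// height side is perpendicular to it.
float longAxisAngleDeg(const BoxSketch& box) {
    assert(box.width >= 0.0f && box.height >= 0.0f);
    float angle = box.angleDeg;
    if (box.width < box.height) angle += 90.0f;  // long side is the height side
    angle = std::fmod(angle, 180.0f);            // an axis has no direction sign
    if (angle < 0.0f) angle += 180.0f;
    return angle;
}
```

In the program above this would be called with something like `BoxSketch{minRect[i].size.width, minRect[i].size.height, minRect[i].angle}`. Keep in mind that image coordinates have the y axis pointing down, so a positive angle appears clockwise on screen.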

I do not know how close this is to what you want, but I hope it helps. For the orientation, you can also combine the information from the contours with PCA (Principal Component Analysis), as described here.
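To illustrate the PCA idea without any library, here is a small sketch that computes the first principal axis of a set of 2D points from its covariance, using the closed form for a symmetric 2x2 matrix. `Pt` and `principalAxisAngle` are hypothetical names for this illustration. OpenCV also provides a `cv::PCA` class for this purpose, and the approach referenced above may differ in detail.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Pt { double x; double y; };  // illustrative stand-in for a contour point

// Angle (radians, in [-pi/2, pi/2]) of the first principal axis of a point set.
// The result is not meaningful when the points have no dominant direction.
double principalAxisAngle(const std::vector<Pt>& pts) {
    assert(!pts.empty());
    const double n = static_cast<double>(pts.size());

    // Mean of the points.
    double mx = 0.0, my = 0.0;
    for (const Pt& p : pts) { mx += p.x; my += p.y; }
    mx /= n;
    my /= n;

    // Entries of the (unnormalized) 2x2 covariance matrix.
    double sxx = 0.0, syy = 0.0, sxy = 0.0;
    for (const Pt& p : pts) {
        const double dx = p.x - mx;
        const double dy = p.y - my;
        sxx += dx * dx;
        syy += dy * dy;
        sxy += dx * dy;
    }

    // Direction of the eigenvector with the largest eigenvalue.
    return 0.5 * std::atan2(2.0 * sxy, sxx - syy);
}
```

The points of one entry of `contours` could be copied into a `std::vector<Pt>` and passed to this function. As with the rectangle angle, the y axis of an image points down, so the angle is measured clockwise on screen.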