I'm moving this to an answer so I can write longer.

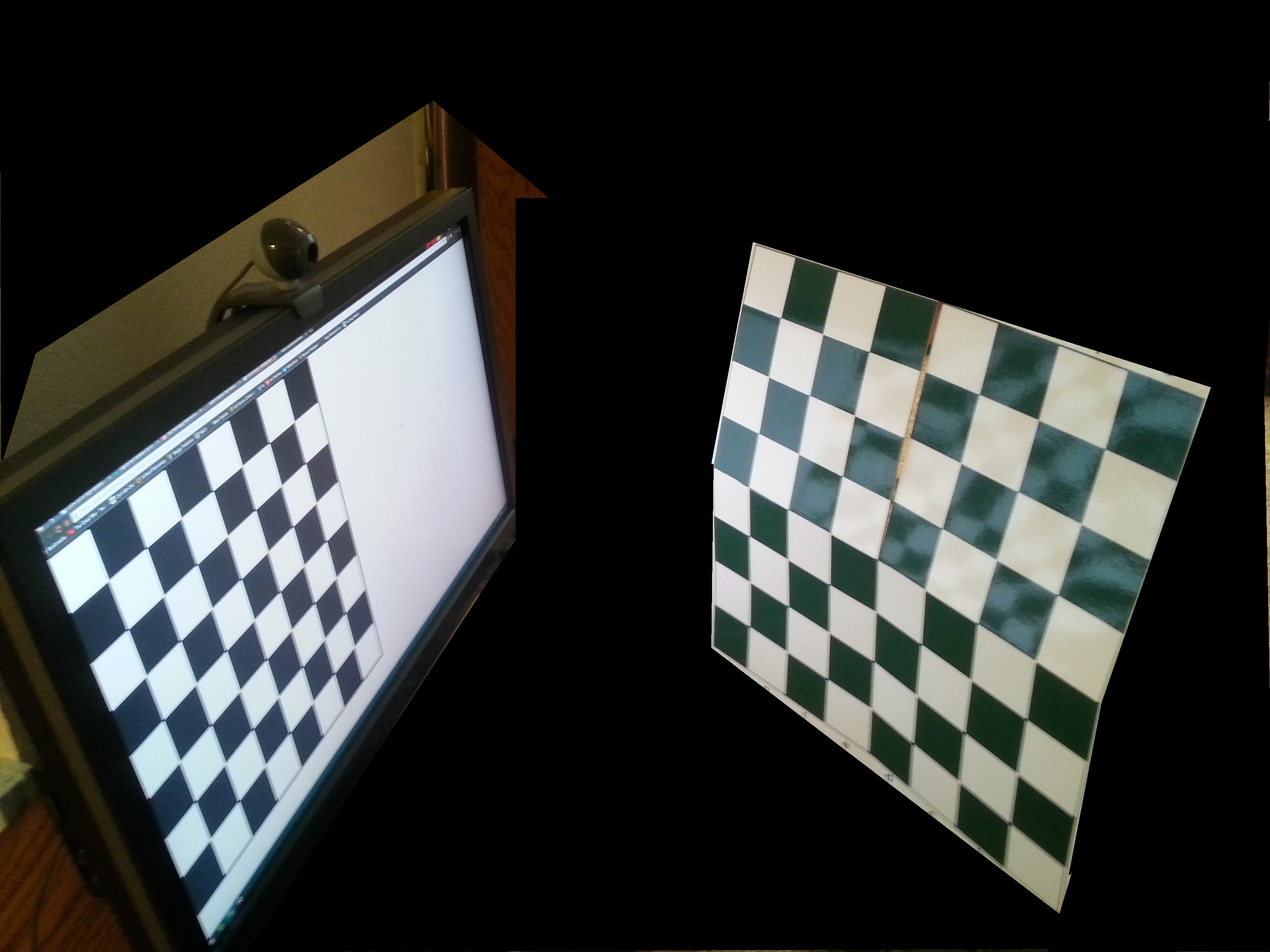

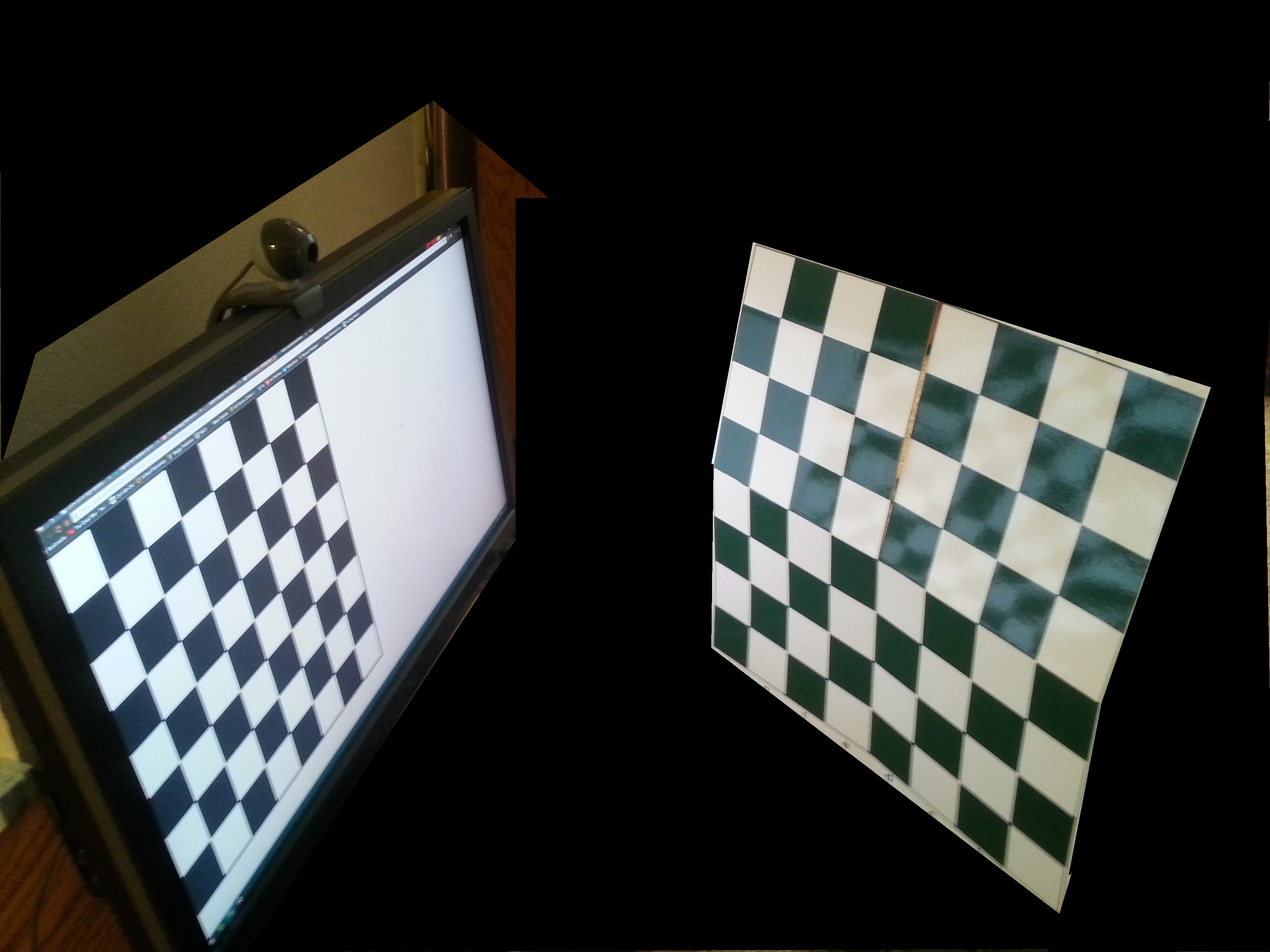

Here is the setup you should be using. With the "real" chessboard being flatter than that one, I just grabbed it. Oh, and the picture on the screen should be shown in full screen mode so the corner of the board and screen match.

The reason you need to do it this way is because when you find the location of the chessboard, it finds the position of the top left corner of the chessboard and the orientation of the plane of the chessboard. Since you are trying to find the top left corner of the screen and the plane of the screen, you need them to match up. So you put the screen chessboard flat on the screen with it's corner in the corner of the screen.

So you have two cameras and two chessboards. You should take a picture from both cameras with both chessboards in the same spot. For better results, move the secondary camera (the one taking this picture) around and average the results.

From these pictures you will get three transformations.

- From primary camera to real chessboard.

- From secondary camera to real chessboard.

- From secondary camera to screen chessboard.

What you want to find is from primary camera to screen chessboard. So you need to reverse the transformation from secondary camera to real chessboard, and now you have the three you need. primary->real->secondary->screen. And from that, obviously, you can get the inverse, if you need it.

EDIT: Code I used.

//Secondary Camera to Space Chessboard

<Snip Calculation>

Rodrigues(rvecs[0], R2);

tvecs[0].copyTo(T2);

//Secondary Camera to Screen Chessboard

Rodrigues(rvecs[0], R3);

R3 = R3.t();

T3 = -R3*tvecs[0];

//Primary Camera to Space Chessboard

flip(image, image, 1);

Rodrigues(rvecs[0], R1);

R1 = R1.t();

T1 = -R1*tvecs[0];

Mat translation(3, 1, CV_64F);

translation.setTo(0);

translation = R3*translation + T3;

translation = R2*translation + T2;

translation = R1*translation + T1;

This gives this, which is a little off, but probably because I only used one image for secondary camera and got bad camera matrix and distortion. The primary camera needs more images too, but I mentioned that below.

[-96.78414539354907;

-471.6594258771706;

16.61072172695242]

Could you add a little more information? Do you mean you are displaying an image and what to know where in the image the ray is? Or is the ray coming from something in front of the screen like an eye? Or what?

I have a video of a person and a gaze vector originating from that person's eye. The center of the eye and gaze vector are in 3d world coordinates. I want to figure out where the user is looking, so consequently where the gaze ray intersects with the computer screen..

Alright, first step, do you know the coordinates of the corners of the screen in world coordinates? That's step one.

No, that's where I'm struggling. I'm trying to figure out how to get those so that I can construct a plane and intersect the gaze vector (or ray) with it. Any ideas/pointers would be much appreciated!

Ok, Where is the origin of your world system? The camera? There are a couple of ways to do this. One is to carefully align the camera with the screen and measure the distance to the corners.

If you have another camera, you can do something more precise. Place a chessboard at a forty five degree angle in front of your primary camera and find the transformation from world coordinates to chessboard coordinates. Then use the second camera to take a picture (or pictures) with the first chessboard, and a chessboard pattern on the screen. You can then find the location of the screen in the chessboard coordinates and translate that to world coordinates.

Are you comfortable enough with the transformations to do that?

The camera is the origin of my world system. If I'm using a laptop with a built in webcam, the z coordinate of the screen corners would be 0, correct?

For the second method, I'm assuming you're referring to the findChessBoardCorners() function OpenCV has. But I'm confused as to how the second camera's picture would help determine the location of the screen.

If you're using a laptop, then yes, you can likely assume zero. At least for the top corners. If the focal plane of the camera is tilted with respect to the screen the bottom corners could be pretty far off.

The calibrateCamera function returns the rvec and tvec of the camera with respect to the chessboard. So once you use multiple cameras and a chessboard in space and on the screen you have Primary Camera -> Chessboard in Space and Secondary Camera -> Chessboard in Space and Secondary Camera -> Chessboard on screen. Just invert the second one, and then apply them all in sequence to get Primary Camera -> Chessboard on Screen.

Thanks! Could you just clarify how I would get the bottom corners using the first method? I have the intrinsic camera matrix.

Honestly, I can't think of a way without simply carefully measuring.

Wait, I have an idea. Get a mirror and set it up so that the camera is looking straight back into itself. Then show a chessboard on the screen and get the rotation and translation. Then measure the distance from mirror to camera, and you have everything you need. This is a bit hard to hold the mirror in place, but not impossible. Set the laptop in front of the bathroom mirror and carefully arrange the screen.

How would getting the rvecs and tvecs help with the screen corners? I'm still a bit confused

One other point came up. I have fx, fy in pixel units. Is there a conversion to mm? I've looked this up but the answers have been inconsistent.

You have the rvecs, which is the rotation from the camera matrix to the plane of the chessboard, where world Z is straight out of the camera and Z' is up from the chessboard (which is on the screen, so out of the screen).

You have tvecs which is the translation to one corner of the screen (use drawAxis to figure out which), and you know the size of the screen because you measure it with a ruler or tape measure. So the rvecs tells you which direction the other corners are in, and you just translate the appropriate distance to get the other corners.

You have to know the size of the focal plane array in mm, then it's a straight pixels/mm thing. You shouldn't need it for this.

Hi, I wanted to bump this because I was hoping you could help me further. I haven't been able to make progress and I think I need a walkthrough.

I ran opencv's calibrate_camera.py program to get a more accurate camera matrix, but I had a few questions about the method you proposed:

"If you have another camera, you can do something more precise. Place a chessboard at a forty five degree angle in front of your primary camera and find the transformation from world coordinates to chessboard..."

I am a bit new at this. How would I use the calibrateCamera function to do this. In particular, where would I get the objectPoints and ImagePoints values from?

Next, would I augment the rvecs and tvecs together to do the transform. Or do I mutiply by the matrix of rvecs and add the tvecs after?

Take a look at the tutorials for the Camera Calibration module. Specifically this one.

Hi Tetragramm, I've taken a look at the tutorials and I've made good progress. However, I have pictures of the secondary camera looking at both the chessboard in space and on screen (of the primary camera). How can I use OpenCV to detect 2 chessboards in the same image (on screen and in space)? Currently it only sees the one in space. Secondly, once I have the transformation, primary camera -> chessboard on screen, I want to verify that I'm understanding this correctly, and I pass (0,0) into the transformation to get the first corner of the screen, and so on?

Once the first chessboard has been detected, find the bounding rectangle of the image points and black it out. That gives you a chessboard on the screen, and a big black rectangle, so it should find the one on the screen.

And you almost have that correct, but you should be passing (0,0,0), since you're working in three dimensions.

Wouldn't (0,0,0) be the camera, not the screen, as the camera is the origin of my world system?

Also, does the chessboard need to take up the whole screen when taking a picture with the second camera? I'm unable to do that currently.

Thanks for all your help so far!

(0,0,0) is the camera. But then you rotate and translate and the new coordinates that come out are the coordinates of the screen corner relative to the camera.

It doesn't need to take up the whole screen, but you do need to know how many pixels. That way you can figure out the pixel to unit ratio, and scale your translation appropriately. Then you can just get the pixel location directly instead of having to do additional math.

Ah, so my understanding is that I would have to use the pixel location to figure out the location of the other corners, once I had the first?

On the second point, here is a picture of some of the images I took: http://imgur.com/a/6oMEy The chessboard isn't angled straight at the camera, so the pixel/mm ratio is different for each square. Is that a problem? Which pixel amount do I use?

Ok, so the real chessboard doesn't matter. The one you display on the screen should be an image that is nothing but black and white squares flat on the screen. Otherwise you'll get strange orientations and locations that don't represent the screen.

I'm confused, are you saying the chessboard on screen and the chessboard in space are different? Otherwise if not, as you can see by the images: http://imgur.com/a/6oMEy it may be impossible to you use the secondary camera to take an image of both the chessboard on screen and the chessboard in space without it being angled.

But if I'm understanding you correctly, the first image I took was correct, but on the second the chessboard on screen should have been a chessboard image, not the actual chessboard in space as captured by the primary camera.