Detecting regions of the same colour

Hello, I'd like to talk through my approach. I want to let a user colour in dolls starting from a greyscale photo. To do this, I need to identify the regions that could each be a single colour, e.g. a shirt being separate from the pants or the face.

At first I thought I could run the photo through a bilateral blur and Canny edge detection to separate the regions (assuming there is a clear line between different areas). However, due to imperfections in the photograph and lighting, I am not getting a closed line for flood filling, and I am starting to question whether there's another way. I have experimented with many sets of Canny parameters and with different amounts of morphological dilation and erosion, but I am struggling because a single-pixel gap is enough to break the flood fill. Also, as the dilation increases, it starts to 'bridge' across corners.

I'm starting to experiment with contours. One idea is to extend the line formed by the last 2 points of a contour and check whether it meets another contour within a certain distance, or whether the two have a similar slope (in case a long section is screened out by lighting).
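To make the endpoint-extension idea concrete, here is a minimal sketch in plain Python. The function name `extension_hits` and the thresholds `max_gap` and `max_angle_deg` are hypothetical, chosen only for illustration. It assumes `tail` holds the last two points at an open end of an edge fragment and `head` is an endpoint of another fragment, and it accepts the pair when the gap is short and roughly in line with the direction the first fragment was travelling.

```python
import math

def extension_hits(tail, head, max_gap=15.0, max_angle_deg=20.0):
    """Return True if extending `tail` would plausibly reach `head`.

    tail: ((x0, y0), (x1, y1)), the last two points of an edge fragment,
          in drawing order (so (x1, y1) is the open end).
    head: (x, y), a candidate endpoint on another fragment.
    max_gap, max_angle_deg: illustrative thresholds, not tuned values.
    """
    (x0, y0), (x1, y1) = tail
    dx, dy = x1 - x0, y1 - y0            # direction of travel at the open end
    gx, gy = head[0] - x1, head[1] - y1  # vector across the gap
    gap = math.hypot(gx, gy)
    if gap == 0:
        return True                      # the fragments already touch
    norm = math.hypot(dx, dy)
    if norm == 0 or gap > max_gap:
        return False                     # no direction, or gap too long
    # Angle between the travel direction and the gap vector.
    cos_a = (dx * gx + dy * gy) / (norm * gap)
    angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_a))))
    return angle <= max_angle_deg
```

For example, `extension_hits(((0, 0), (1, 0)), (6, 0))` accepts a point straight ahead, while a point off to the side such as `(1, 10)` is rejected. Two adjacent points give a noisy direction, so fitting the direction over the last several points of the fragment may be more stable.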

Alternatively, I'm wondering if there's a way to implement this that doesn't rely on strong lines, maybe by looking at 'textures' in each region. Another option would be to combine the edge detection with user input: e.g. the user scribbles over areas of interest and the program tries to combine that with the edges to find the most likely region (though I'm not sure if there's an easy way to do that).
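One way the scribble idea could be sketched is as a seeded flood that grows every scribble at once and visits weak-edge pixels before strong-edge ones, in the spirit of a marker-based watershed. The code below is a simplified pure-Python illustration, not a library implementation. The names `grow_from_scribbles`, `edge_strength` and `seeds` are hypothetical, and `edge_strength` is assumed to be something like a gradient magnitude or a blurred Canny output.

```python
import heapq

def grow_from_scribbles(edge_strength, seeds):
    """Label every pixel by flooding outwards from user scribbles.

    edge_strength: 2D list of non-negative numbers (higher = stronger edge).
    seeds: dict mapping (row, col) -> positive integer label (the scribbles).
    Returns a 2D list of labels with the same shape as edge_strength.
    """
    rows, cols = len(edge_strength), len(edge_strength[0])
    labels = [[0] * cols for _ in range(rows)]
    heap = []
    order = 0  # insertion counter: ties are served first-in, first-out
    for (r, c), lab in seeds.items():
        labels[r][c] = lab
        heapq.heappush(heap, (0, order, r, c))
        order += 1
    while heap:
        _, _, r, c = heapq.heappop(heap)
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if 0 <= nr < rows and 0 <= nc < cols and labels[nr][nc] == 0:
                # Claim the neighbour now; it is expanded later, with
                # weak-edge pixels expanded before strong-edge pixels.
                labels[nr][nc] = labels[r][c]
                heapq.heappush(heap, (edge_strength[nr][nc], order, nr, nc))
                order += 1
    return labels

# A 5x7 toy image: a vertical edge in column 3 with a one-pixel gap in row 2.
edges = [[9 if (c == 3 and r != 2) else 0 for c in range(7)] for r in range(5)]
result = grow_from_scribbles(edges, {(2, 0): 1, (2, 6): 2})
```

In this toy case a plain flood fill from the left scribble would leak through the gap and fill the whole image, whereas here the two fronts meet near the gap and each side keeps its own label. If OpenCV is in use (an assumption, since the post does not name a library), `cv2.watershed` and `cv2.grabCut` are existing functions built around similar marker or scribble input.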

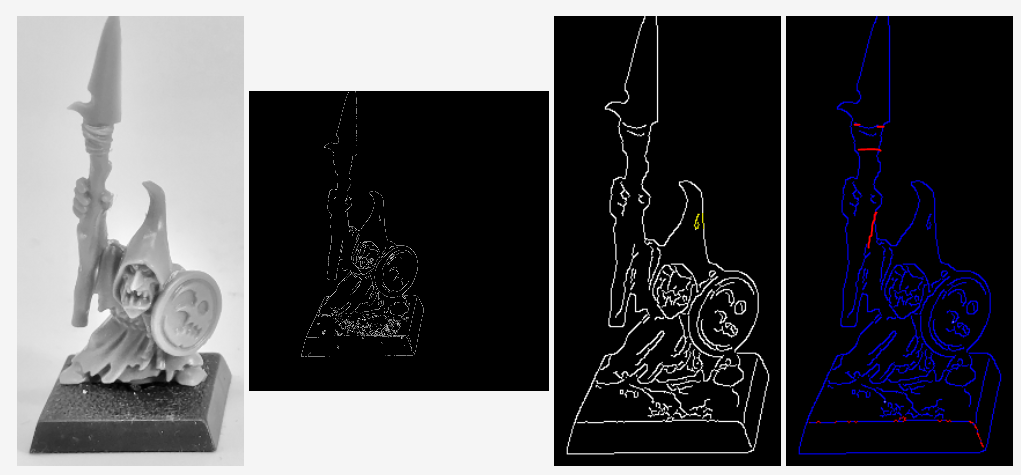

In the image: left is the source image, the middle images show the Canny detection, and the final blue image shows the contours. As examples of undesirable features: Canny has picked up a specular shine on the hat (see yellow in the 3rd image), and it has missed important edges, e.g. the red borders of the spear, which I have highlighted in image 4.